The Essential Guide to SDRAM Memory: Powering Modern Computing

Introduction

In the intricate world of computer hardware, few components are as fundamental and ubiquitous as memory. At the heart of this domain lies Synchronous Dynamic Random-Access Memory (SDRAM), a technology that has been the workhorse of system memory for decades. From powering personal computers and servers to enabling complex graphics processing and embedded systems, SDRAM’s evolution has directly mirrored and enabled the exponential growth in computing performance we experience today. This article delves into the architecture, evolution, and critical role of SDRAM, exploring why understanding this technology is crucial for anyone involved in computing, hardware design, or system optimization. As we navigate through its technical landscape, we will also highlight how resources like ICGOODFIND can be instrumental in sourcing and understanding these vital components for your projects.

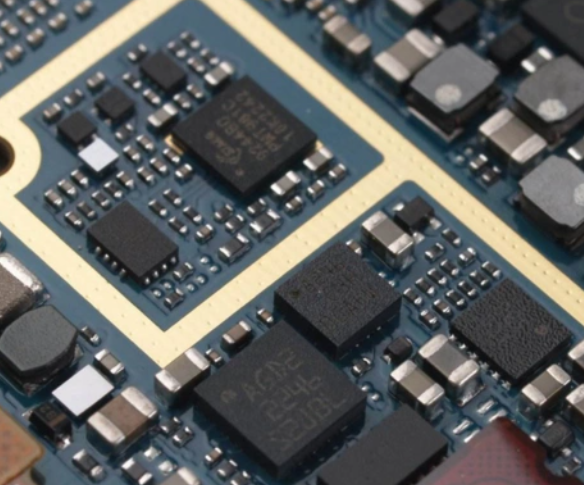

The Architecture and Working Principle of SDRAM

SDRAM revolutionized memory technology by synchronizing its operation with the computer’s system clock. Its predecessor, asynchronous DRAM, responded to control signals as soon as they changed. SDRAM instead latches commands and addresses on a clock edge and returns data a fixed number of clock cycles later. Because the timing is known in advance, the memory controller can pipeline commands, issuing a new command before the previous one has completed, which significantly increases data throughput and efficiency.

The core of SDRAM’s operation is a capacitor-transistor pair that forms a single memory cell. The capacitor holds a charge (representing a binary ‘1’) or lacks a charge (representing a ‘0’), while the transistor acts as a switch for reading or writing that charge. However, capacitors leak charge over time, so every cell must be refreshed periodically, which is where the “Dynamic” in DRAM comes from. The synchronous aspect means these refresh operations, along with all read/write cycles, are tied to the clock signal, creating a predictable and orderly flow of data.
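The cost of refresh can be estimated with simple arithmetic. The sketch below is illustrative only: the 64 ms window, the 8192 refresh commands per window, and the 350 ns busy time per refresh are assumed example values, not figures from this article, and real values should be taken from the device datasheet.

```python
# Illustrative numbers only; real values come from the device datasheet.
RETENTION_WINDOW_MS = 64.0   # assumed window in which every row must be refreshed
REFRESH_COMMANDS = 8192      # assumed refresh commands issued per window
T_RFC_NS = 350.0             # assumed time one refresh command keeps the device busy


def refresh_interval_ns(window_ms: float, commands: int) -> float:
    """Average spacing between refresh commands, in nanoseconds."""
    return window_ms * 1_000_000 / commands


def refresh_overhead(window_ms: float, commands: int, t_rfc_ns: float) -> float:
    """Fraction of time spent refreshing instead of serving requests."""
    return t_rfc_ns / refresh_interval_ns(window_ms, commands)


interval = refresh_interval_ns(RETENTION_WINDOW_MS, REFRESH_COMMANDS)
overhead = refresh_overhead(RETENTION_WINDOW_MS, REFRESH_COMMANDS, T_RFC_NS)
print(f"refresh every {interval:.1f} ns, overhead {overhead:.1%}")
```

Under these assumptions the device spends a few percent of its time refreshing, which is why refresh is usually treated as a small but non-zero tax on available bandwidth.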

Internally, SDRAM is organized in a hierarchical structure of banks, rows, and columns. Each SDRAM device contains multiple independent banks. They share one data bus, but each can have its own row open, so the controller can interleave accesses across them. To access data, the memory controller first activates a specific row within a bank (opening it), which copies that row into the bank’s row buffer. Subsequent column addresses can then read from or write to this row buffer with much lower latency. Before a different row in the same bank can be opened, the current row must be closed with a precharge. This bank architecture is key to hiding the inherent latency of row activation and precharge cycles: while one bank is activating or precharging, another can transfer data, allowing for sustained high-bandwidth data transfer. The precise timing of these operations, dictated by parameters like CAS Latency (CL), tRCD (activate-to-column delay), and tRP (precharge time), is critical for system stability and performance.
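The effect of the row buffer on latency can be shown with a small model. This is a minimal sketch, assuming a single bank, an open-page policy (the row stays open after each access), and latencies counted in whole clock cycles. The `Timings` and `Bank` names are hypothetical, and the timing values are borrowed from the CL16-18-18 style of rating purely as an example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Timings:
    cl: int    # CAS latency: column command to first data
    trcd: int  # row activate to column command
    trp: int   # precharge (close row) to next activate


class Bank:
    """One bank with a single row buffer (open-page policy assumed)."""

    def __init__(self, timings: Timings) -> None:
        self.timings = timings
        self.open_row: Optional[int] = None

    def access(self, row: int) -> int:
        """Return the latency in clock cycles to reach data in `row`."""
        t = self.timings
        if self.open_row == row:       # row hit: data is already in the row buffer
            return t.cl
        if self.open_row is None:      # bank idle: activate, then read
            latency = t.trcd + t.cl
        else:                          # row conflict: precharge, activate, read
            latency = t.trp + t.trcd + t.cl
        self.open_row = row
        return latency


bank = Bank(Timings(cl=16, trcd=18, trp=18))
latencies = [bank.access(r) for r in (7, 7, 7, 42)]
print(latencies)  # [34, 16, 16, 52]
```

The first access pays for activation, the next two hit the open row, and the switch to row 42 pays the full precharge, activate, and read sequence. A real controller overlaps these penalties across banks, which this single-bank sketch does not attempt to model.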

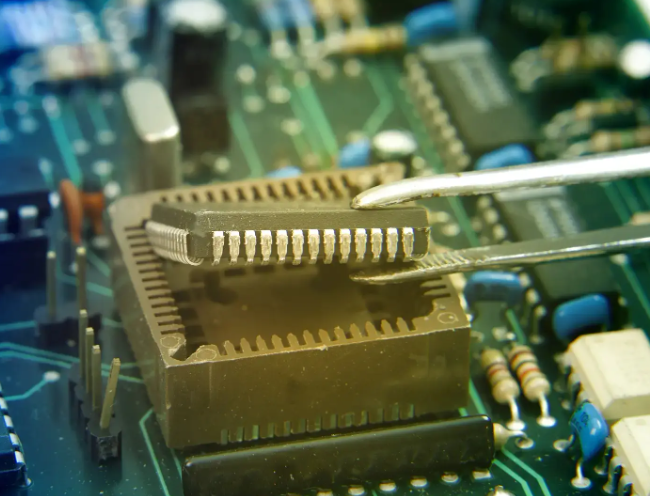

The Evolution: From SDR to DDR and Beyond

The journey of SDRAM began with Single Data Rate (SDR) SDRAM, which transferred data once per clock cycle. However, the relentless demand for higher bandwidth drove the development of Double Data Rate (DDR) SDRAM, a major leap forward. DDR memory transfers data on both the rising and falling edges of the clock signal, effectively doubling the data rate without increasing the clock frequency. This innovation set the template for successive generations.
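The doubling can be checked with a one-line formula: peak bandwidth is the clock frequency, times the transfers per clock, times the bus width in bytes. The sketch below assumes a 64-bit module data bus and a 133 MHz clock as example figures; the function name is hypothetical.

```python
def peak_bandwidth_mb_s(clock_mhz: float, bus_width_bits: int,
                        transfers_per_clock: int) -> float:
    """Peak theoretical bandwidth in MB/s (1 MB = 10**6 bytes)."""
    return clock_mhz * transfers_per_clock * bus_width_bits / 8


# Same 133 MHz clock and 64-bit bus; only the transfers per clock differ.
sdr = peak_bandwidth_mb_s(133, 64, transfers_per_clock=1)
ddr = peak_bandwidth_mb_s(133, 64, transfers_per_clock=2)
print(sdr, ddr)  # 1064.0 2128.0
```

These are peak figures only; sustained bandwidth is lower once activation, precharge, and refresh are accounted for.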

Each new DDR generation (DDR2, DDR3, DDR4, and now DDR5) has brought substantial improvements:

* Increased Data Rates: Each generation roughly doubles the per-pin data rate of its predecessor (e.g., from DDR4’s 3.2 GT/s to DDR5’s 6.4 GT/s and beyond).
* Lower Operating Voltages: The supply voltage has fallen from 2.5V for the original DDR to 1.1V for DDR5, which decreases power consumption and heat generation, a critical factor for mobile devices and data centers.
* Enhanced Prefetch Buffers: The prefetch architecture evolved from 2n (DDR) to 16n (DDR5), allowing more data to be fetched internally per operation to feed the higher interface speeds.
* Architectural Refinements: Innovations like on-die termination (ODT), burst chop modes, and bank grouping have improved signal integrity and efficiency. Notably, DDR5 splits each DIMM into two independent subchannels and moves voltage regulation onto the module itself through a power management IC (PMIC), for greater stability at high speeds.
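The role of the prefetch buffer becomes clearer with numbers. In the simplified model below, the rate at which the memory array must deliver a burst is the interface data rate divided by the prefetch depth. The 2n and 16n values come from the text above; the intermediate depths (4n, 8n, 8n) and the example speed grades are added here for illustration, and the function name is hypothetical.

```python
# (generation, example data rate in MT/s, prefetch depth)
GENERATIONS = [
    ("DDR",  400,  2),
    ("DDR2", 800,  4),
    ("DDR3", 1600, 8),
    ("DDR4", 3200, 8),
    ("DDR5", 6400, 16),
]


def array_rate_mhz(data_rate_mt_s: int, prefetch: int) -> float:
    """Rate at which the array must supply one full prefetch, in MHz."""
    return data_rate_mt_s / prefetch


rates = {name: array_rate_mhz(rate, n) for name, rate, n in GENERATIONS}
for name, mhz in rates.items():
    print(f"{name}: array must supply a burst at {mhz:.0f} MHz")
```

In this model the interface data rate grows sixteenfold from DDR-400 to DDR5-6400 while the required array rate only doubles, which is the point of widening the prefetch: the slow DRAM core is read in wider chunks rather than clocked faster.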

This evolution is not merely about speed; it’s about maintaining system balance. As CPUs gained more cores and higher performance, memory bandwidth had to scale accordingly to avoid becoming a bottleneck. The development of standards like LPDDR (Low Power DDR) for mobile and graphics-oriented variants like GDDR further demonstrates SDRAM’s adaptability to diverse performance and power envelopes across the entire tech ecosystem.

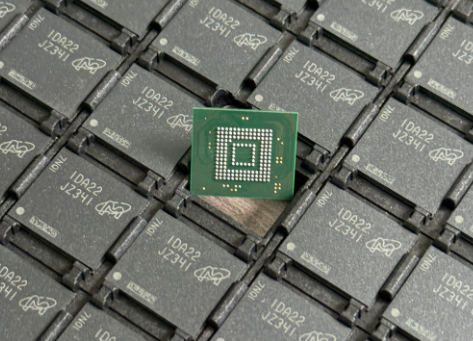

Applications and Selection Criteria in Modern Systems

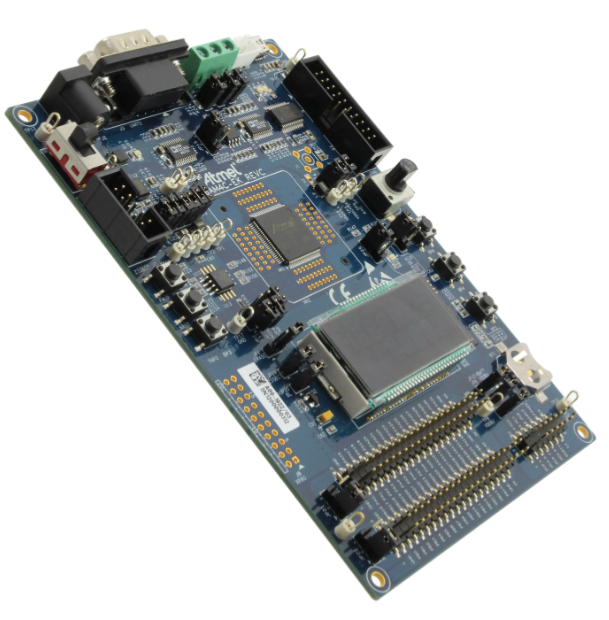

SDRAM technology is pervasive. In personal computing, it is the main system memory (RAM) that holds active programs and data for the CPU. In data centers, vast arrays of high-density DDR4 or DDR5 modules in servers handle massive concurrent workloads. Specialized graphics DDR (GDDR), with its ultra-wide buses optimized for high bandwidth rather than low latency, is essential for rendering complex visuals in GPUs for gaming and professional workstations. Furthermore, embedded systems in networking equipment, automotive infotainment, and industrial controls rely on robust SDRAM solutions.

Selecting the right SDRAM involves balancing several key parameters:

* Capacity: Determines how much data can be held actively. Needs must align with the operating system and application requirements.
* Data Rate/Clock Speed: Quoted as a transfer rate in MT/s (e.g., DDR4-3200 performs 3200 MT/s on a 1600 MHz clock), this directly determines peak theoretical bandwidth.
* Timings/Latency: The series of numbers (e.g., CL16-18-18-38) gives delays in clock cycles, conventionally in the order CL, tRCD, tRP, tRAS. At the same clock speed, lower values mean faster response times.
* Voltage: Must be compatible with the memory controller.
* Form Factor: Such as DIMM for desktops/servers or SODIMM for laptops.
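Data rate and timings are easier to compare once converted to common units. The following is a rough sketch, assuming a 64-bit module data bus and treating the number in a rating such as DDR4-3200 as the transfer rate in MT/s; the function name is hypothetical.

```python
from typing import Tuple


def module_figures(data_rate_mt_s: int, cas_latency: int,
                   bus_width_bits: int = 64) -> Tuple[float, float]:
    """Return (peak bandwidth in GB/s, CAS latency in ns) for a DDR module."""
    clock_mhz = data_rate_mt_s / 2                       # two transfers per clock
    bandwidth_gb_s = data_rate_mt_s * bus_width_bits / 8 / 1000
    cas_ns = cas_latency / clock_mhz * 1000              # cycles -> nanoseconds
    return bandwidth_gb_s, cas_ns


bw_3200, cas_3200 = module_figures(3200, 16)   # DDR4-3200 CL16
bw_3600, cas_3600 = module_figures(3600, 18)   # DDR4-3600 CL18
print(f"DDR4-3200 CL16: {bw_3200:.1f} GB/s, {cas_3200:.1f} ns")
print(f"DDR4-3600 CL18: {bw_3600:.1f} GB/s, {cas_3600:.1f} ns")
```

The two example modules have the same CAS latency in nanoseconds even though the CL numbers differ, while the faster one offers more peak bandwidth. This is why timings should be compared in absolute time rather than in cycles when the clock speeds differ.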

Choosing compatible and optimal SDRAM is critical for system stability and achieving peak performance. Mismatched modules or incorrect settings can lead to crashes, data corruption, or failure to boot. This is where comprehensive component knowledge becomes invaluable. For engineers, procurement specialists, and enthusiasts navigating this complex landscape, platforms like ICGOODFIND provide essential services. As a professional electronic component sourcing platform with deep technical resources, ICGOODFIND helps users not only locate authentic SDRAM modules from a vast network of suppliers but also access detailed datasheets and technical specifications necessary for making informed decisions and ensuring compatibility.

Conclusion

SDRAM memory stands as one of the most critical enabling technologies of the digital age. Its synchronous design principle laid the groundwork for decades of predictable performance scaling, while its evolution through DDR generations has successfully met the voracious bandwidth demands of advancing processors. From enabling everyday multitasking on a laptop to facilitating real-time scientific simulations in supercomputers, SDRAM’s role is indispensable. Understanding its architecture, evolution, and application criteria is fundamental for anyone looking to build, upgrade, or optimize computing systems. As we move into an era dominated by data-intensive applications like AI and big data analytics, the continued innovation in memory technology will remain paramount. For those embarking on hardware projects requiring precise component selection—whether it’s sourcing the latest DDR5 modules or finding legacy SDRAM parts—leveraging specialized tools and knowledge bases is key. In this context, platforms dedicated to component intelligence and sourcing, such as ICGOODFIND, prove to be invaluable assets in bridging the gap between technical requirements and real-world implementation.