MCU Memory: The Critical Engine for Embedded Intelligence

Introduction

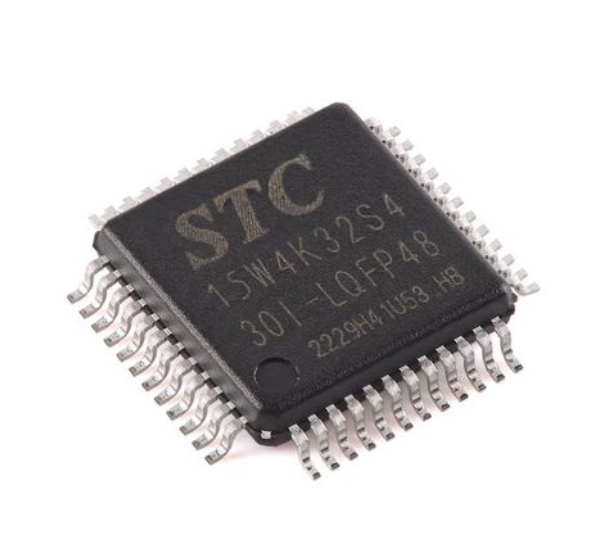

In the vast and intricate world of embedded systems, the Microcontroller Unit (MCU) stands as the silent, ubiquitous brain. From the thermostat regulating your home’s temperature to the advanced sensor array in a modern automobile, MCUs execute predefined tasks with precision. However, what an MCU can do is fundamentally constrained by its memory architecture. MCU memory is not a monolithic entity but a carefully orchestrated hierarchy of different technologies, each serving a distinct purpose to balance performance, cost, power consumption, and reliability. Understanding MCU memory is therefore essential for developers, engineers, and businesses aiming to optimize their embedded designs. This article covers the types, functions, and strategic considerations of memory within microcontrollers, and explains why memory is the cornerstone of efficient embedded system design.

Main Body

Part 1: The Hierarchical Architecture of MCU Memory

Unlike general-purpose computers, MCUs integrate memory directly onto the same chip as the processor core. This integration is key to their compact size, low power usage, and deterministic performance. The memory hierarchy typically consists of three primary layers:

- Flash Memory (Program Memory): This is the non-volatile workhorse where the application code (firmware) resides permanently. Even when power is cycled, the program remains intact, ready for execution. Flash memory’s reliability and reprogrammability make it indispensable for field updates and long-term product deployment. Modern MCUs often feature enhanced flash technologies with faster read speeds and lower power consumption during execution, directly impacting the MCU’s performance ceiling.

- SRAM (Data Memory): Static Random-Access Memory is the MCU’s volatile, high-speed workspace. It is used for storing runtime variables, the system stack, heap data, and intermediate computation results. The speed of SRAM is critical for real-time performance, as slow access can bottleneck the processor core. However, SRAM occupies significant silicon area per bit and draws leakage current whenever it is powered, making its size a major driver of both cost and power. Designers must carefully manage SRAM usage to avoid stack or heap overflow and ensure system stability.

-

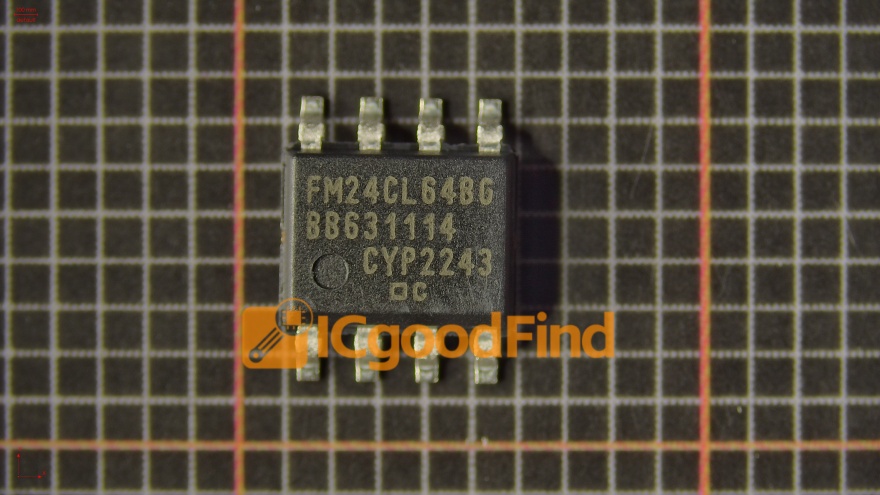

EEPROM and Other Non-Volatile Data Memory: While flash stores the program, Electrically Erasable Programmable Read-Only Memory serves a different purpose. It is designed for storing small amounts of data that must survive power cycles but may need frequent updates—such as calibration constants, device configuration parameters, or user settings. EEPROM offers fine-grained byte-by-byte erase/write capabilities, unlike flash which is often erased in larger blocks, making it more durable for small, frequent data changes.
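The byte-versus-block difference described in the EEPROM item can be illustrated with a small host-side model. This is only a sketch under stated assumptions: the 2 KiB page size, the class names `PageErasedFlash` and `ByteEeprom`, and the address used are all hypothetical and do not correspond to any vendor's API or device.

```python
"""Toy model of updating one byte in page-erased flash vs. byte-writable EEPROM.

PAGE_SIZE and both classes are hypothetical and exist only for illustration.
"""

PAGE_SIZE = 2048  # assumed flash page size in bytes; real devices vary


class PageErasedFlash:
    """Flash model: programming can only clear bits; setting bits needs a page erase."""

    def __init__(self, pages: int) -> None:
        self.data = bytearray(b"\xff" * (pages * PAGE_SIZE))  # erased state is 0xFF
        self.erase_counts = [0] * pages

    def update_byte(self, addr: int, value: int) -> None:
        page = addr // PAGE_SIZE
        start = page * PAGE_SIZE
        # If every 1-bit in the new value is still 1 in the cell, programming
        # alone (clearing bits) is enough and no erase is required.
        if (self.data[addr] & value) == value:
            self.data[addr] = value
            return
        # Otherwise: copy the page to RAM, patch it, erase, and reprogram.
        shadow = bytearray(self.data[start:start + PAGE_SIZE])
        shadow[addr - start] = value
        self.erase_counts[page] += 1  # the erase wears every cell in the page
        self.data[start:start + PAGE_SIZE] = shadow


class ByteEeprom:
    """EEPROM model: each byte is erased and written on its own."""

    def __init__(self, size: int) -> None:
        self.data = bytearray(size)
        self.write_counts = [0] * size

    def update_byte(self, addr: int, value: int) -> None:
        self.data[addr] = value
        self.write_counts[addr] += 1  # only this cell is worn


if __name__ == "__main__":
    flash, eeprom = PageErasedFlash(pages=1), ByteEeprom(size=PAGE_SIZE)
    for setting in range(100):  # rewrite one user setting 100 times
        flash.update_byte(16, setting)
        eeprom.update_byte(16, setting)
    print("flash page erases:", flash.erase_counts[0])
    print("EEPROM cells touched:", sum(1 for c in eeprom.write_counts if c))
```

In this model, repeatedly changing a single setting in flash wears the entire page on almost every update, while the EEPROM wears only the one cell that changed.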

This hierarchical design ensures that frequently accessed code and data are available at the fastest possible speeds (from SRAM or cache), while bulk code resides in denser, cheaper flash.
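One way to see how the flash and SRAM layers are consumed is a simple budget check. The sketch below assumes a conventional GCC-style section layout, in which the initial values of `.data` are stored in flash and copied to SRAM at startup while `.bss` occupies SRAM only. The `FirmwareImage` type, the `headroom` helper, and all sizes are hypothetical values chosen for illustration.

```python
"""Rough flash/SRAM budget check for a firmware image (illustrative numbers only)."""
from dataclasses import dataclass


@dataclass(frozen=True)
class FirmwareImage:
    text: int    # machine code, bytes
    rodata: int  # constants and lookup tables, bytes
    data: int    # initialized variables: stored in flash, copied to SRAM at boot
    bss: int     # zero-initialized variables: SRAM only
    stack: int   # reserved stack, bytes
    heap: int    # reserved heap, bytes

    def flash_bytes(self) -> int:
        # Flash holds code, constants, and the initial values for .data.
        return self.text + self.rodata + self.data

    def sram_bytes(self) -> int:
        # SRAM holds all variables plus the stack and heap reservations.
        return self.data + self.bss + self.stack + self.heap


def headroom(image: FirmwareImage, flash_size: int, sram_size: int) -> dict:
    """Return free bytes per memory; a negative value means the image does not fit."""
    return {
        "flash_free": flash_size - image.flash_bytes(),
        "sram_free": sram_size - image.sram_bytes(),
    }


if __name__ == "__main__":
    image = FirmwareImage(text=40_000, rodata=6_000, data=1_200,
                          bss=9_000, stack=2_048, heap=4_096)
    # Hypothetical part with 64 KiB of flash and 20 KiB of SRAM.
    print(headroom(image, flash_size=64 * 1024, sram_size=20 * 1024))
```

In practice these section sizes would come from the toolchain's size report or linker map file rather than being typed in by hand.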

Part 2: Key Performance Metrics and Design Trade-offs

Selecting an MCU based on memory specifications requires evaluating several interconnected metrics:

- Density vs. Cost: More memory (both flash and SRAM) increases functionality but also raises chip cost and physical size. Optimizing code density through efficient programming practices is essential to control costs. At the same time, modest over-provisioning of memory “for the future” can be a prudent strategy to enable feature updates without hardware changes.

- Access Speed and Latency: The time taken to read an instruction from flash or write data to SRAM directly affects the MCU’s Millions of Instructions Per Second (MIPS) capability. Flash is usually slower than the core clock, so reads may require wait states when code executes in place (XIP) from flash. Techniques like pre-fetch buffers and cache memories are employed to hide this speed disparity between the core and memory. Slower memory can stall a fast core, wasting cycles and energy.

- Power Consumption: Memory is a significant contributor to an MCU’s power budget, especially SRAM in active mode. Low-power designs often feature multiple sleep modes that selectively power down different memory blocks to conserve energy. The choice of memory technology and access patterns is crucial for battery-powered Internet of Things (IoT) devices.

- Endurance and Data Retention: Flash and EEPROM cells wear out after a finite number of write/erase cycles (endurance). Similarly, data retention defines how long stored information remains reliable without power. For industrial or automotive applications operating in harsh environments over decades, specifying memory with high endurance and guaranteed long-term retention is non-negotiable. These factors impact product lifespan and warranty considerations.
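The endurance item lends itself to a back-of-the-envelope estimate. The sketch below assumes that writes are spread evenly over a number of storage slots (simple wear leveling) and that each slot fails after exactly the rated number of cycles. Both are simplifications, and the function names and the 10,000-cycle figure are hypothetical; real endurance ratings come from the device datasheet.

```python
"""Estimate non-volatile memory lifetime from endurance and write rate (a sketch)."""
import math

DAYS_PER_YEAR = 365.0


def lifetime_years(endurance_cycles: int, writes_per_day: float, slots: int = 1) -> float:
    """Years until wear-out if writes rotate evenly across `slots` locations."""
    if endurance_cycles <= 0 or writes_per_day <= 0 or slots < 1:
        raise ValueError("endurance and write rate must be positive, slots >= 1")
    return endurance_cycles * slots / (writes_per_day * DAYS_PER_YEAR)


def slots_needed(endurance_cycles: int, writes_per_day: float, target_years: float) -> int:
    """Smallest number of evenly used slots that reaches the target lifetime."""
    if endurance_cycles <= 0 or writes_per_day <= 0 or target_years <= 0:
        raise ValueError("all arguments must be positive")
    total_writes = writes_per_day * DAYS_PER_YEAR * target_years
    return max(1, math.ceil(total_writes / endurance_cycles))


if __name__ == "__main__":
    # Hypothetical case: a value logged once per minute into memory rated
    # for 10,000 cycles (an assumed figure, not taken from any datasheet).
    per_day = 24 * 60
    print("single slot, years:", round(lifetime_years(10_000, per_day), 3))
    print("slots for 10 years:", slots_needed(10_000, per_day, 10))
```

The estimate makes the design choice visible: a frequently written value either needs a higher-endurance memory or enough spare slots to spread the wear.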

Navigating these trade-offs demands a clear understanding of the application’s requirements. A simple sensor node has vastly different memory needs than a motor control unit or a graphic display controller.
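As an illustration of the access-speed trade-off above, the following sketch estimates how many flash wait states a given core clock requires and how a cache or prefetch buffer softens the penalty. It uses a deliberately simple model: a hit costs one cycle, a miss costs one cycle plus the wait states, and the 30 ns access time, 100 MHz clock, and function names are assumptions made up for the example.

```python
"""Simple model of flash wait states and cache hit rate (illustrative only)."""


def flash_wait_states(access_ns: int, core_mhz: int) -> int:
    """Wait states needed so one flash read spans at least `access_ns`.

    cycles_needed = ceil(access_ns * core_mhz / 1000), using integer math.
    """
    if access_ns <= 0 or core_mhz <= 0:
        raise ValueError("access time and clock must be positive")
    cycles_needed = (access_ns * core_mhz + 999) // 1000
    return max(0, cycles_needed - 1)


def effective_fetch_cycles(wait_states: int, hit_rate: float) -> float:
    """Average cycles per fetch: hits cost 1 cycle, misses cost 1 + wait_states."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return 1.0 + (1.0 - hit_rate) * wait_states


if __name__ == "__main__":
    ws = flash_wait_states(access_ns=30, core_mhz=100)  # assumed figures
    for hit_rate in (0.0, 0.5, 0.9):
        cycles = effective_fetch_cycles(ws, hit_rate)
        print(f"wait states={ws} hit rate={hit_rate:.1f} "
              f"relative fetch throughput={1.0 / cycles:.2f}")
```

Real cores overlap fetches with execution and prefetch sequential code, so measured results will differ. The model is only meant to show why a faster core clock without a cache can yield less than a proportional speedup.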

Part 3: Advanced Trends and Future Directions

The evolution of MCU memory is driven by demands for greater intelligence at the edge:

- Integration of Specialized Memories: We are seeing the inclusion of tightly coupled memory (TCM) for time-critical code, and non-volatile RAM (NVRAM) technologies like Ferroelectric RAM (FRAM) or Resistive RAM (RRAM). These aim to offer near-SRAM speeds with non-volatility, blurring the traditional hierarchy and enabling new application paradigms with instant-on capability and no standby power needed to retain data.

- Enhanced Security Features: Memory is the first line of defense against cyber threats. Modern MCUs incorporate hardware-based memory protection units (MPUs), read-out protection (ROP), and secure bootloaders that authenticate code stored in flash before execution. These features prevent unauthorized access, firmware extraction, or injection of malicious code.

- AI at the Edge: Running even lightweight machine learning models on MCUs (TinyML) requires innovative memory solutions. This includes managing large neural network weights stored in flash and streaming them efficiently into SRAM for computation. Architectures are evolving to minimize data movement, the primary consumer of energy in AI workloads, through more intelligent memory controllers and cache hierarchies.
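The split between weights in flash and working data in SRAM described in the last item can be estimated before any model is deployed. The sketch below assumes a purely sequential network in which only the input and output buffers of the layer currently running must be resident in SRAM. This is a simplification, not how any particular inference runtime plans its memory, and the `Layer` type and all sizes are hypothetical.

```python
"""Estimate flash and SRAM needs of a small sequential model (a rough sketch)."""
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Layer:
    name: str
    weight_bytes: int  # parameters, kept in flash and read during inference
    output_bytes: int  # activation buffer produced by this layer, held in SRAM


def weights_flash_bytes(layers: Sequence[Layer]) -> int:
    """Total flash needed to store all layer weights."""
    return sum(layer.weight_bytes for layer in layers)


def peak_activation_bytes(input_bytes: int, layers: Sequence[Layer]) -> int:
    """Largest input-plus-output buffer pair that must coexist in SRAM."""
    peak = 0
    current_input = input_bytes
    for layer in layers:
        peak = max(peak, current_input + layer.output_bytes)
        current_input = layer.output_bytes  # this output feeds the next layer
    return peak


if __name__ == "__main__":
    # Hypothetical int8 model with a 96x96 grayscale input (9,216 bytes).
    model = [
        Layer("conv1", weight_bytes=2_400, output_bytes=18_432),
        Layer("conv2", weight_bytes=18_000, output_bytes=9_216),
        Layer("dense", weight_bytes=92_170, output_bytes=10),
    ]
    print("flash for weights:", weights_flash_bytes(model))
    print("peak SRAM for activations:", peak_activation_bytes(9_216, model))
```

A check like this shows early whether a model's activations, rather than its weights, are the limiting factor for a given MCU.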

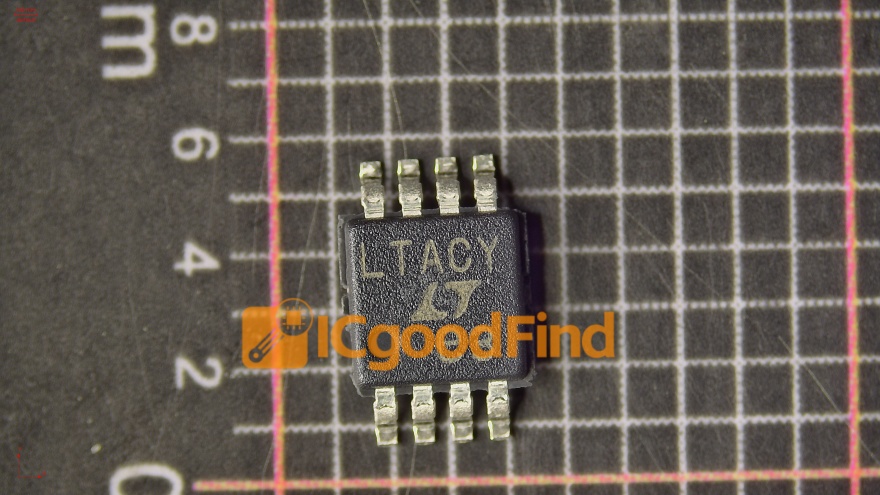

For professionals navigating this complex landscape of components and specifications, finding the right MCU with the optimal memory configuration can be daunting. Specialized resources can help here. For instance, platforms like ICGOODFIND provide market intelligence, component search tools, and supply chain analytics that can streamline the sourcing of MCUs with specific memory characteristics, helping designers match their architectural needs with available components.

Conclusion

MCU memory is far more than just a specification on a datasheet; it is the foundational framework that determines what an embedded system can achieve. From the hierarchical interplay of flash, SRAM, and EEPROM to the critical trade-offs in speed, power, and cost, every aspect of memory design influences performance and capability. As embedded systems grow more complex and intelligent, driven by IoT connectivity, real-time analytics, and edge AI, the role of advanced, secure, and efficient memory architectures will only become more pronounced. By mastering the principles of MCU memory, engineers can create devices that are not only smarter and more responsive but also more reliable and power-efficient. The journey toward optimal embedded design begins with a deep understanding of this critical engine.