The Symbiotic Powerhouse: Unpacking the Critical Roles of DRAM and Flash in Modern Computing

Introduction

In the invisible engine room of our digital world, two semiconductor memory technologies work in relentless, silent concert to power every click, swipe, and command. DRAM (Dynamic Random-Access Memory) and Flash memory are the foundational, complementary pillars of modern computing architecture. While often mentioned together in the context of data storage and processing, their functions are distinct yet deeply interdependent. From smartphones and laptops to vast data centers and AI supercomputers, the performance and efficiency of these technologies directly shape our technological capabilities. This article delves into the intricate dance between volatile DRAM and non-volatile Flash, exploring their unique characteristics, their synergistic relationship, and how their continuous evolution is pushing the boundaries of speed, capacity, and innovation across all industries. For professionals seeking to navigate this complex component landscape, platforms like ICGOODFIND provide invaluable market intelligence and supply chain solutions.

Part 1: Understanding the Core Technologies – DRAM vs. Flash

To appreciate their synergy, one must first understand their fundamental differences.

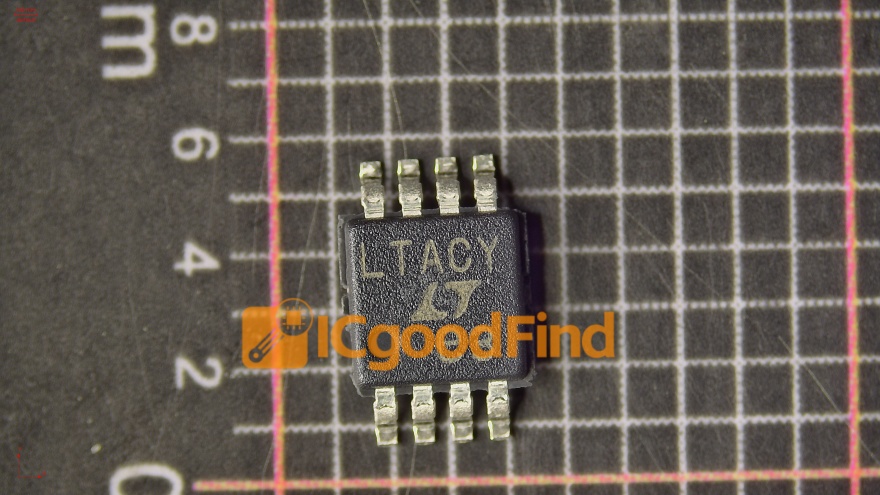

DRAM: The High-Speed Workbench

DRAM is the volatile, high-speed working memory of a system. Think of it as a computer’s immediate workspace: extremely fast but cleared the moment power is cut. Its primary function is to hold the data and instructions that the central processing unit (CPU) needs in real time.

* How it Works: It stores each bit of data in a separate capacitor within an integrated circuit. Since these capacitors leak charge, the data must be dynamically refreshed many times per second, hence “Dynamic” RAM.
* Key Characteristics: Its supreme advantage is speed, offering nanosecond-level access times. This makes it ideal for the main system memory (RAM) where active programs, operating system kernels, and frequently accessed data reside. However, it is more expensive per gigabyte than Flash and loses data without power.
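To make the refresh requirement concrete, here is a rough back-of-the-envelope sketch. The 64 ms retention window and 8192 refresh commands per window are typical figures for commodity DDR3/DDR4 parts, and the 350 ns refresh duration is purely an assumed value for illustration; real numbers vary by device and should come from the datasheet.

```python
def refresh_interval_us(retention_ms: float = 64.0, refresh_commands: int = 8192) -> float:
    """Average time between refresh commands, in microseconds."""
    return retention_ms * 1000.0 / refresh_commands


def refresh_overhead(t_rfc_ns: float = 350.0, retention_ms: float = 64.0,
                     refresh_commands: int = 8192) -> float:
    """Fraction of time the device is busy refreshing (t_rfc_ns is an assumption)."""
    interval_ns = refresh_interval_us(retention_ms, refresh_commands) * 1000.0
    return t_rfc_ns / interval_ns


if __name__ == "__main__":
    print(f"{refresh_interval_us():.4f} us between refresh commands")  # 7.8125 us
    print(f"{refresh_overhead():.2%} of time spent refreshing")        # 4.48%
```

Under these assumptions, a refresh command is needed roughly every 7.8 microseconds, and the device spends a few percent of its time refreshing rather than serving requests.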

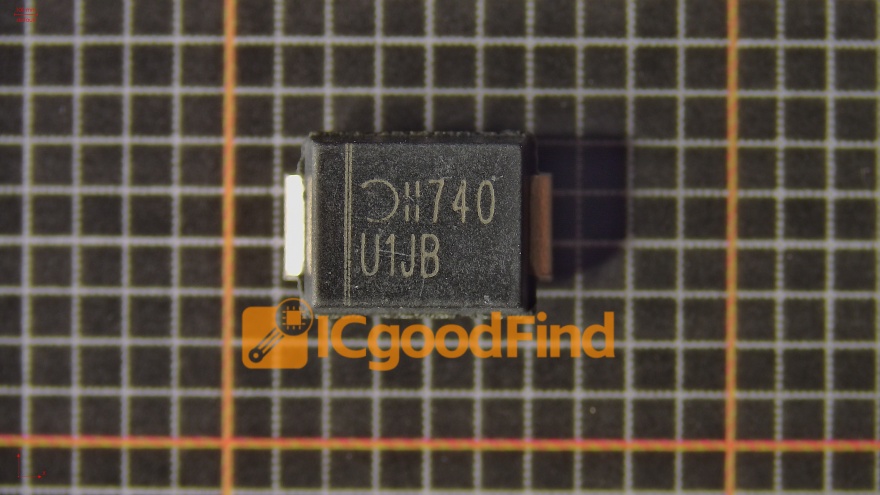

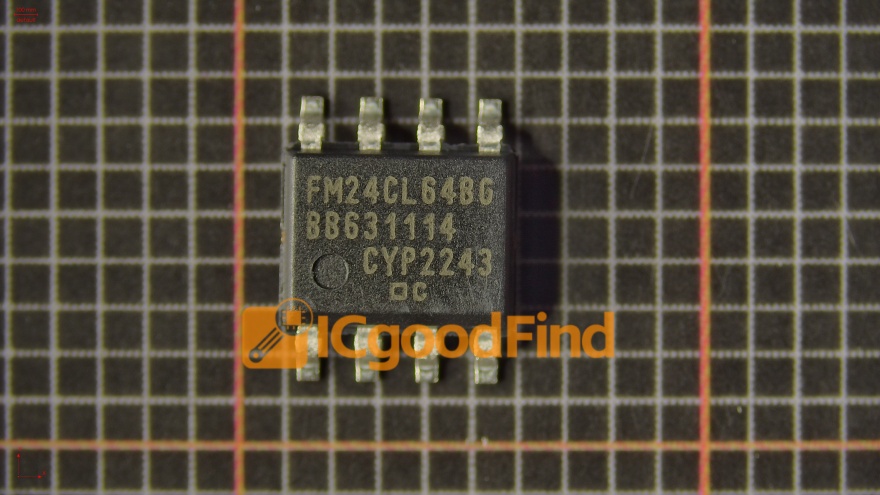

Flash Memory: The Persistent Library

Flash is a non-volatile storage medium, meaning it retains data without power. It acts as the permanent or semi-permanent digital library.

* How it Works: It stores data in an array of memory cells built from floating-gate transistors (many modern 3D NAND designs use a closely related charge-trap structure instead). Trapping electrical charge in the cell allows data to be retained for years.
* Types & Use Cases: NAND Flash is the dominant type, prized for high density and lower cost, used in Solid-State Drives (SSDs), USB drives, and memory cards. NOR Flash, faster for random reads but less dense, is often used for firmware code storage.
* Key Characteristics: The trade-off for persistence is speed and endurance. Program and erase operations are far slower than DRAM reads and writes, and cells wear out after a finite number of program/erase (P/E) cycles. Wear-leveling algorithms mitigate this by spreading writes evenly across the device.
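The idea behind wear leveling can be sketched in a few lines. This is a toy model, not how any particular SSD controller works: the `WearLeveler` class, its block count, and its P/E limit are all hypothetical names and values chosen for illustration. It simply directs every write to whichever block has been erased the fewest times.

```python
class WearLeveler:
    """Toy dynamic wear leveling: always program the least-worn block."""

    def __init__(self, num_blocks: int, max_pe_cycles: int):
        self.erase_counts = [0] * num_blocks
        self.max_pe_cycles = max_pe_cycles  # assumed rated endurance per block

    def write_block(self) -> int:
        """Pick the least-worn block, charge it one P/E cycle, return its index."""
        block = min(range(len(self.erase_counts)),
                    key=self.erase_counts.__getitem__)
        if self.erase_counts[block] >= self.max_pe_cycles:
            raise RuntimeError("all blocks have reached their rated P/E cycles")
        self.erase_counts[block] += 1
        return block


if __name__ == "__main__":
    leveler = WearLeveler(num_blocks=4, max_pe_cycles=3000)
    for _ in range(1000):
        leveler.write_block()
    print(leveler.erase_counts)  # [250, 250, 250, 250]
```

Without leveling, 1000 rewrites of the same location would all land on one block; here each of the four blocks absorbs only 250 cycles.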

In essence, DRAM provides the blistering speed for active computation, while Flash offers the cost-effective, persistent storage for data and programs when they are not immediately needed.

Part 2: The Critical Synergy in Modern Systems

The true magic happens in how these two technologies interact. Modern computing architectures are designed to leverage the strengths of each while mitigating their weaknesses.

The Hierarchical Memory Model

Computers use a tiered memory structure. At the top sits the CPU’s tiny but ultrafast cache (built from SRAM), followed by the larger pool of DRAM (main memory), and finally the vast but slower persistent storage (a Flash-based SSD, or a hard disk drive in older or capacity-oriented systems). The operating system shuffles data between these tiers. Frequently accessed “hot” data is kept in DRAM for rapid CPU access, while less-used “cold” data resides on Flash. This hierarchy creates an illusion of a vast, fast memory space at a manageable cost.
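The effect of keeping hot data in DRAM can be illustrated with a small simulation. This is a minimal sketch, assuming a least-recently-used (LRU) replacement policy and order-of-magnitude latencies of 100 ns for DRAM and 100 µs for a Flash read; the function name and both latency figures are illustrative assumptions, not measurements of any real system.

```python
from collections import OrderedDict


def average_access_ns(accesses, dram_pages, dram_ns=100.0, flash_ns=100_000.0):
    """Simulate an LRU-managed DRAM tier in front of Flash; return mean latency."""
    if not accesses:
        raise ValueError("accesses must not be empty")
    dram = OrderedDict()  # page -> True, ordered from least to most recently used
    total = 0.0
    for page in accesses:
        if page in dram:
            dram.move_to_end(page)        # hit: mark as most recently used
            total += dram_ns
        else:
            total += flash_ns             # miss: fetch the page from Flash
            dram[page] = True
            if len(dram) > dram_pages:
                dram.popitem(last=False)  # evict the least recently used page
    return total / len(accesses)


if __name__ == "__main__":
    trace = [1, 2] * 5  # two hot pages accessed alternately
    print(average_access_ns(trace, dram_pages=2))  # 20080.0
    print(average_access_ns(trace, dram_pages=1))  # 100000.0
```

With room for both hot pages, only the first two accesses go to Flash. With room for one, every access misses, which is the thrashing behaviour that too little DRAM produces.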

Enabling Performance and Multitasking

The size and speed of DRAM largely determine how many applications you can run smoothly at the same time and how quickly they respond. Your SSD’s Flash memory, meanwhile, determines how fast your system boots, loads applications, and accesses large files. A balanced system requires ample quantities of both: sufficient DRAM to hold active workloads without constant “swapping,” and fast Flash to minimize latency when swapping is necessary.

The Evolution in Storage: Caching and Tiering

This synergy is most evident in advanced storage solutions. Many SSDs incorporate a small amount of DRAM, which holds the address-mapping table and buffers incoming data, and many also set aside a region of NAND operated in faster SLC mode as a write cache. These buffers absorb incoming writes quickly before the data is later written in larger sequential batches to the denser NAND Flash cells, which improves perceived performance and can reduce write amplification. Furthermore, enterprise storage systems use sophisticated software to create automated storage tiers between fast (often DRAM or high-endurance Flash) and dense, cheaper storage layers.
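One way a write buffer can cut the number of NAND programs is by coalescing repeated writes to the same logical page. The sketch below is a deliberately simplified model: `WriteBuffer` and its fields are hypothetical, and a real controller must also handle power loss, ordering, and garbage collection, none of which is modelled here.

```python
class WriteBuffer:
    """Toy write-coalescing buffer in front of NAND."""

    def __init__(self, capacity_pages: int):
        self.capacity = capacity_pages
        self.pending = {}        # logical page -> latest buffered data
        self.host_writes = 0     # writes issued by the host
        self.nand_programs = 0   # pages actually programmed to NAND

    def write(self, page: int, data: bytes) -> None:
        self.host_writes += 1
        self.pending[page] = data  # an overwrite replaces the buffered copy
        if len(self.pending) >= self.capacity:
            self.flush()

    def flush(self) -> None:
        """Program every buffered page to NAND in one batch."""
        self.nand_programs += len(self.pending)
        self.pending.clear()


if __name__ == "__main__":
    buf = WriteBuffer(capacity_pages=4)
    for _ in range(10):
        buf.write(0, b"updated")  # ten rewrites of the same page
    buf.flush()
    print(buf.host_writes, buf.nand_programs)  # 10 1
```

Ten host writes to the same page result in a single NAND program, because only the latest buffered copy is ever flushed.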

Part 3: Future Trends and Converging Paths

The line between DRAM and Flash is blurring as innovation addresses the limitations of each, driven by demands from AI, big data, and IoT.

The Persistent Memory Frontier

Technologies like Storage-Class Memory (SCM) aim to bridge the gap between DRAM and Flash. Intel’s Optane (based on 3D XPoint) was a prominent example, offering near-DRAM speed with non-volatility and higher density than DRAM. Intel announced in 2022 that it was winding down the Optane business, but the product line helped establish a new architectural paradigm. The concept remains vital: creating a persistent tier between DRAM and SSD that can dramatically accelerate data-intensive applications.

Architectural Innovations: CXL and HBM

New interconnect protocols are reshaping how memory is accessed. Compute Express Link (CXL) provides cache-coherent, high-speed connectivity between CPUs and attached devices such as memory expanders and accelerators, enabling more flexible, pooled memory architectures beyond traditional motherboard slots. For extreme performance needs, High Bandwidth Memory (HBM) stacks multiple DRAM dies vertically, linked by through-silicon vias (TSVs), and typically sits beside a GPU or CPU on a silicon interposer within the same package. The resulting very wide interface provides the bandwidth essential for AI training and high-performance computing (HPC), though at a higher cost.
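The bandwidth advantage of a wide interface follows from simple arithmetic: peak bandwidth is the bus width multiplied by the per-pin transfer rate. The sketch below compares a single 64-bit DDR4-3200 channel with a 1024-bit HBM2 stack at 2.0 GT/s per pin. These are nominal peak figures; the function name is illustrative, and sustained bandwidth in practice is lower.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, gigatransfers_per_s: float) -> float:
    """Theoretical peak bandwidth in GB/s: (bits per transfer / 8) * GT/s."""
    return bus_width_bits * gigatransfers_per_s / 8.0


if __name__ == "__main__":
    ddr4 = peak_bandwidth_gb_s(64, 3.2)    # one DDR4-3200 channel
    hbm2 = peak_bandwidth_gb_s(1024, 2.0)  # one HBM2 stack
    print(f"DDR4-3200 channel: {ddr4:.1f} GB/s")  # 25.6 GB/s
    print(f"HBM2 stack:        {hbm2:.1f} GB/s")  # 256.0 GB/s
```

The HBM stack runs each pin more slowly than DDR4 in this example, yet delivers ten times the peak bandwidth because its interface is sixteen times wider.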

The Flash Evolution: 3D NAND and Beyond

Flash technology continues its relentless density march through 3D NAND, where memory cells are stacked vertically in hundreds of layers (leading products now exceed 200). Furthermore, denser cell designs like QLC (Quad-Level Cell, four bits per cell) and the emerging PLC (Penta-Level Cell, five bits per cell) squeeze more bits into each cell, lowering cost per gigabyte for capacity-centric applications. The challenge remains balancing this density with endurance and speed.
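Why more bits per cell strains endurance and speed becomes clearer when counting the charge levels a cell must distinguish: storing n bits requires 2^n distinct levels within the same voltage window. A short sketch (the dictionary and function names are illustrative only):

```python
CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4, "PLC": 5}


def voltage_levels(bits_per_cell: int) -> int:
    """Number of distinct charge levels needed to encode the given bits."""
    return 2 ** bits_per_cell


if __name__ == "__main__":
    for name, bits in CELL_TYPES.items():
        print(f"{name}: {bits} bit(s) per cell -> {voltage_levels(bits)} levels")
```

A QLC cell must resolve 16 levels and a PLC cell 32, so the margin between adjacent levels shrinks sharply compared with the two levels of SLC, leaving less tolerance for wear and charge leakage.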

In this fast-evolving ecosystem of memory components—from commodity DRAM chips to specialized NAND packages—staying informed on specifications, availability, and pricing is crucial for engineering and procurement teams. This is where comprehensive platforms like ICGOODFIND prove essential, offering insights that help businesses source the right components efficiently in a dynamic global market.

Conclusion

DRAM and Flash memory are not competing technologies but partners in a deeply integrated performance duet. DRAM’s volatile speed and Flash’s persistent capacity form an indispensable symbiosis that underpins every facet of contemporary computing. As we advance into an era defined by artificial intelligence, real-time analytics, and ubiquitous connectivity, the evolution of both technologies, along with the emergence of new hybrid categories, will remain among the most important factors in unlocking new computational horizons. Understanding their distinct roles, their collaborative interplay in system architecture, and the emerging trends that seek to merge their best qualities is key for anyone involved in designing, building, or leveraging modern technology systems.