What are SRAM and DRAM? A Comprehensive Guide to Computer Memory

Introduction

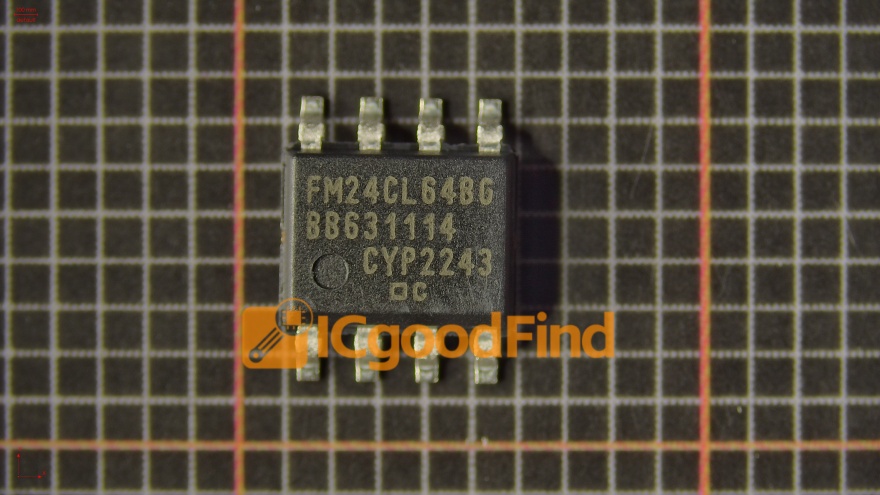

In the intricate world of computing, memory is the vital workspace where data is processed, stored, and retrieved at lightning speed. At the heart of this system lie two fundamental types of semiconductor memory: Static Random-Access Memory (SRAM) and Dynamic Random-Access Memory (DRAM). While both are crucial for the functioning of modern devices, from smartphones to supercomputers, they serve distinct purposes based on their unique architectures and characteristics. Understanding the difference between SRAM and DRAM is key to comprehending how computers manage data efficiently. This article delves deep into their workings, compares their strengths and weaknesses, and explores their specific roles in the technology ecosystem. For professionals seeking specialized electronic components, platforms like ICGOODFIND provide essential access to a vast inventory of memory chips and other semiconductors.

The Core Architecture: How SRAM and DRAM Work

1. Static Random-Access Memory (SRAM)

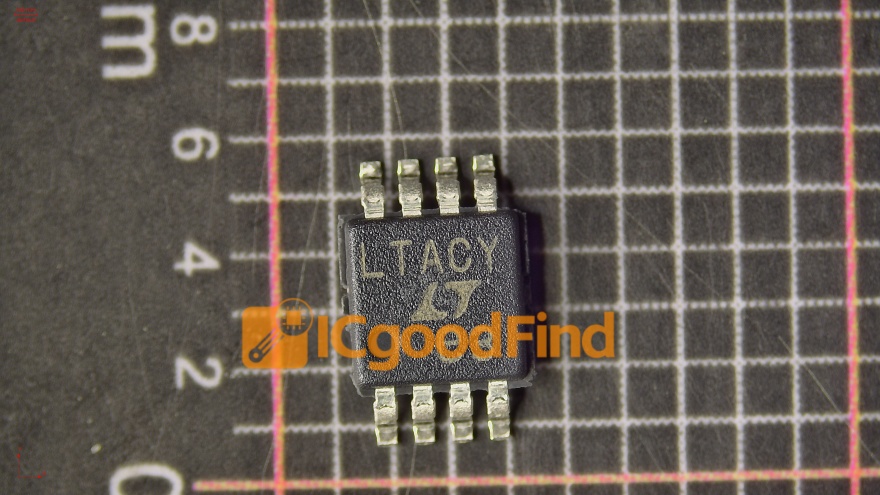

SRAM is a type of volatile memory that uses bistable latching circuitry (flip-flops) to store each bit of data. This design typically requires six transistors (6T) per memory cell: four to form the cross-coupled inverters that hold the state (0 or 1), and two for access control. The term “static” denotes that the stored data remains constant as long as power is supplied to the circuit; it does not need to be periodically refreshed.
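As a rough illustration of why the cell is called "static", the following Python sketch models the two cross-coupled inverters as a pair of complementary nodes. It is a logical toy, not a circuit simulation: the class name `SramCell` and its methods are hypothetical, and the access transistors and bit lines are reduced to plain method calls.

```python
class SramCell:
    """Toy model of a 6T SRAM cell (hypothetical, logic level only)."""

    def __init__(self):
        # The two storage nodes always hold opposite values.
        self.q = False
        self.q_bar = True

    def _settle(self):
        # Each inverter drives the opposite node, so the pair
        # reinforces whatever value is currently stored.
        self.q_bar = not self.q
        self.q = not self.q_bar

    def write(self, bit):
        # Access transistors open and the bit lines force a new state.
        self.q = bool(bit)
        self._settle()

    def read(self):
        # Reading senses the node without disturbing it.
        return int(self.q)


cell = SramCell()
cell.write(1)
# Any number of reads returns the same value; no refresh step exists.
print([cell.read() for _ in range(3)])
```

The point of the sketch is the absence of any time-dependent behaviour: as long as the object exists (power is applied), the stored bit never changes on its own.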

The primary advantage of this architecture is speed. Because the latch changes state quickly and never pauses for refresh cycles, SRAM access times can fall below 10 ns, significantly faster than DRAM. It is also simpler to interface with, since there’s no refresh overhead. However, this comes at a cost: the six-transistor cell is physically larger, resulting in lower density (fewer bits per chip area), higher power consumption per bit in active mode (though it can consume very low power in standby), and ultimately a much higher cost per bit. Consequently, SRAM is not used for high-capacity main memory but is reserved for performance-critical areas.

2. Dynamic Random-Access Memory (DRAM)

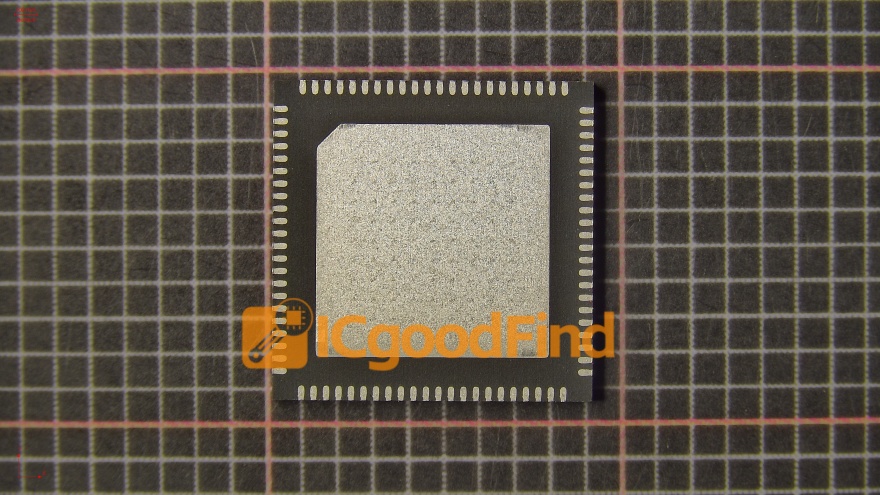

DRAM stores each bit of data in a separate tiny capacitor within an integrated circuit. A single transistor acts as a gate, controlling whether the capacitor’s charge (representing a 1) or lack of charge (representing a 0) can be read or written. The term “dynamic” is used because the charge on these capacitors leaks away over time (within milliseconds). Therefore, to preserve data, each DRAM cell must be periodically refreshed by reading and rewriting its content. Standard DDR devices typically require every row to be refreshed within a window of about 64 ms, so the memory controller issues refresh commands thousands of times per second. This refresh process is a fundamental characteristic of DRAM operation.
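The leak-and-refresh behaviour described above can be sketched with a simple exponential decay model. Everything numeric here is an assumption chosen for illustration: the 200 ms time constant, the 0.5 sense threshold, and the 64 ms refresh period are not taken from any datasheet, and the names `DramCell` and `survives` are hypothetical.

```python
import math


class DramCell:
    """Toy 1T1C cell: the stored bit is a charge level that decays over time."""

    def __init__(self, tau_ms=200.0, threshold=0.5):
        self.tau_ms = tau_ms        # assumed leakage time constant
        self.threshold = threshold  # assumed sense-amplifier threshold
        self.charge = 0.0

    def write(self, bit):
        self.charge = 1.0 if bit else 0.0

    def leak(self, elapsed_ms):
        # Charge decays exponentially while the cell sits idle.
        self.charge *= math.exp(-elapsed_ms / self.tau_ms)

    def sense(self):
        return 1 if self.charge > self.threshold else 0

    def refresh(self):
        # Refresh = read the current value and write it back at full strength.
        self.write(self.sense())


def survives(total_ms, refresh_every_ms=None):
    """Return True if a stored 1 is still readable after total_ms."""
    cell = DramCell()
    cell.write(1)
    elapsed = 0.0
    step = refresh_every_ms or total_ms
    while elapsed < total_ms:
        dt = min(step, total_ms - elapsed)
        cell.leak(dt)
        elapsed += dt
        if refresh_every_ms is not None:
            cell.refresh()
    return cell.sense() == 1


print("no refresh:   ", survives(500.0))
print("64 ms refresh:", survives(500.0, refresh_every_ms=64.0))
```

Under these assumed parameters the unrefreshed cell loses its bit well before 500 ms, while the refreshed cell keeps it indefinitely, which is the trade the "dynamic" design makes.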

This 1T1C (one-transistor-one-capacitor) design is both DRAM’s greatest strength and its main weakness. The cell is extremely small and simple, allowing for very high density and low cost per bit, which makes DRAM ideal for providing the large-capacity main memory (RAM) in computers. However, the need for constant refreshing adds complexity, increases latency (as accesses can be delayed during refresh cycles), and consumes power. Additionally, reading a DRAM cell is destructive: the act of reading discharges the capacitor, so the sense amplifiers must write the data back immediately after each read operation.
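To get a feel for how much time refresh can take away from normal accesses, here is a back-of-the-envelope calculation. The figures are assumptions for illustration only: a 64 ms retention window covered by 8192 refresh commands, and 350 ns during which the device is busy per command. Real values depend on the DRAM generation, density, and temperature, and the function name `refresh_overhead` is hypothetical.

```python
def refresh_overhead(retention_window_ms=64.0,
                     refresh_commands=8192,
                     busy_per_refresh_ns=350.0):
    """Return (interval between refresh commands in ns, fraction of time busy)."""
    # Spread the refresh commands evenly across the retention window.
    interval_ns = retention_window_ms * 1e6 / refresh_commands
    # Fraction of each interval during which the device cannot serve accesses.
    busy_fraction = busy_per_refresh_ns / interval_ns
    return interval_ns, busy_fraction


interval_ns, busy_fraction = refresh_overhead()
print(f"one refresh command every {interval_ns / 1000:.2f} us")
print(f"share of time spent refreshing: {busy_fraction:.1%}")
```

With these assumed inputs the device spends a few percent of its time refreshing; an access that arrives during that window has to wait, which is the latency penalty mentioned above.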

Key Differences and Comparative Analysis

The architectural divergence leads to a clear set of practical differences between SRAM and DRAM.

- Speed and Performance: SRAM is unequivocally faster than DRAM. With no refresh cycles and a stable flip-flop design, SRAM access times can be less than 10 ns, while DRAM access times are typically in the range of 50 to 100 ns. This speed makes SRAM the preferred choice for cache memory.

- Density and Capacity: DRAM offers vastly higher storage density. The simple 1T1C cell can be packed into billions on a single chip, creating modules with gigabytes of capacity. SRAM’s larger 6T cell makes it impractical for such high capacities.

- Power Consumption: The comparison is nuanced. SRAM consumes more power per bit while it is being actively accessed, but it has no refresh overhead. A DRAM cell draws less power per bit when idle, but the constant refreshing of billions of cells adds significantly to overall system power draw. In standby, SRAM can be very efficient if built on low-leakage processes.

- Cost: DRAM is far less expensive per megabyte than SRAM. This economic factor is decisive in their applications.

- Volatility: Both are volatile memories, meaning they lose all data when power is removed.
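The density gap in the list above follows directly from the cell designs. The short calculation below counts only the storage-cell transistors needed for one gibibyte, using the 6T and 1T1C figures from this article. It deliberately ignores the DRAM capacitor, sense amplifiers, decoders, and all other peripheral circuitry, so it is an illustration of scale rather than a die-area estimate; the helper name `cell_transistors` is hypothetical.

```python
BITS_PER_GIB = 8 * 2**30  # bits in one gibibyte


def cell_transistors(capacity_bits, transistors_per_cell):
    """Transistors needed for the storage cells alone (no peripheral logic)."""
    return capacity_bits * transistors_per_cell


sram_count = cell_transistors(BITS_PER_GIB, 6)  # 6T SRAM cell
dram_count = cell_transistors(BITS_PER_GIB, 1)  # 1T1C DRAM cell (plus one capacitor per bit)

print(f"SRAM: {sram_count:,} transistors")
print(f"DRAM: {dram_count:,} transistors")
print(f"ratio: {sram_count // dram_count}x")
```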

Application in Modern Computing Systems

Their complementary characteristics dictate where SRAM and DRAM are used in a typical computer hierarchy.

SRAM finds its home where speed is paramount:

- CPU Cache Memory (L1, L2, L3): This is the most critical application. Processors embed small amounts of ultra-fast SRAM directly on the die as cache to hold frequently used data close to the cores, dramatically reducing latency compared to accessing main memory.

- Register Files within CPUs: The fastest memory in a system, integrated directly into the processor’s execution units.

- Buffers and Lookup Tables in networking equipment (routers, switches) and high-speed storage controllers.

DRAM serves as the indispensable workhorse for main system memory:

- Main Memory (RAM): In desktops (DDR4/DDR5 modules), laptops, servers, and smartphones (LPDDR), DRAM provides the high-capacity workspace for the operating system, applications, and active data.

- Graphics Memory: Graphics DDR (GDDR) is a specialized, high-bandwidth version of DRAM used on graphics cards to hold textures, framebuffers, and other visual data.

This synergy creates a memory hierarchy: the CPU accesses its internal SRAM registers and caches first; if data isn’t there (a cache miss), it fetches it from the larger, slower DRAM main memory. Sourcing these specific components for design or repair requires reliable suppliers. Engineers often turn to specialized distributors like ICGOODFIND to procure exact DRAM modules or SRAM chips with guaranteed specifications.
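The cache-first lookup order in this hierarchy can be sketched with a tiny direct-mapped cache model. The latencies (2 ns for the SRAM cache, 80 ns for DRAM), the four-line cache, and the 64-byte line size are assumed values picked to keep the arithmetic easy to follow, and the function name `simulate` is hypothetical.

```python
def simulate(addresses, num_lines=4, line_bytes=64,
             cache_ns=2.0, dram_ns=80.0):
    """Return (hits, misses, average access time in ns) for a direct-mapped cache."""
    lines = [None] * num_lines  # which memory block each cache line holds
    hits = misses = 0
    total_ns = 0.0
    for addr in addresses:
        block = addr // line_bytes   # memory block containing this address
        index = block % num_lines    # cache line that block maps to
        total_ns += cache_ns         # the SRAM cache is always checked first
        if lines[index] == block:
            hits += 1
        else:
            misses += 1
            total_ns += dram_ns      # cache miss: fetch the block from DRAM
            lines[index] = block
    return hits, misses, total_ns / len(addresses)


# Sequential 8-byte reads over 256 bytes: each 64-byte line misses once,
# then serves the next seven reads from the cache.
hits, misses, avg_ns = simulate(range(0, 256, 8))
print(hits, misses, avg_ns)  # 28 4 12.0
```

Even with a miss on every new line, the average access time stays much closer to the SRAM figure than to the DRAM figure, which is why a small cache in front of a large main memory pays off.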

Conclusion

SRAM and DRAM are not competitors but essential partners in modern computing architecture. SRAM’s superior speed, born from its static flip-flop design, makes it the preferred choice for high-speed caching close to the processor. DRAM’s superior density and cost-efficiency, achieved through its simple dynamic capacitor-based cell, make it the standard technology for affordable, high-capacity main memory. Both continue to evolve, with SRAM designs focusing on lower power for mobile SoCs and DRAM advancing through generations like DDR5 to increase bandwidth and efficiency. Understanding their distinct roles illuminates how computers balance performance, capacity, and cost. For those involved in hardware design, manufacturing, or maintenance, accessing these components through trusted channels such as ICGOODFIND is a critical step in bringing efficient electronic systems to life.