Full Names of SRAM and DRAM: Decoding the Core of Memory Technology

Introduction

In modern computing, from the smartphone in your pocket to the supercomputer powering scientific research, memory is the workspace in which every program runs. Two fundamental types of semiconductor memory sit at the heart of this ecosystem: SRAM and DRAM. While these acronyms are ubiquitous in tech specifications, their full names reveal the underlying principles that define their speed, cost, structure, and typical applications. Understanding Static Random-Access Memory (SRAM) and Dynamic Random-Access Memory (DRAM) is crucial for anyone involved in technology, hardware design, or system optimization. This article covers their definitions, operational mechanics, and distinct roles in the computer memory hierarchy, and explains why both are essential and why neither can replace the other.

Part 1: Unveiling SRAM – Static Random-Access Memory

Static Random-Access Memory (SRAM) is a type of volatile semiconductor memory that uses bistable latching circuitry, typically built from six transistors per memory cell, to store each bit of data. The term “static” signifies that the stored data remains constant—or static—as long as power is supplied to the memory chip, without needing a periodic refresh. This architecture is its defining characteristic.

The core of an SRAM cell is a cross-coupled pair of inverters forming a bistable latch. This configuration has two stable states, representing a logical 0 or 1. Two additional access transistors connect the latch to the bit lines during read and write operations. This transistor-dense design (6T being most common) makes SRAM cells physically larger and more expensive to manufacture per bit compared to DRAM. However, it grants significant advantages: extremely high speed and low idle power, since a cell that is not switching draws only leakage current and needs no refresh.
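The behavior of the cross-coupled inverters can be sketched with a toy logical model. This is an illustration only, not a circuit simulation: the class name `SramCell`, the `settle` method, and the `word_line` flag are hypothetical names chosen for this example.

```python
from typing import Optional


class SramCell:
    """Toy logical model of a 6T SRAM cell (hypothetical, not a circuit simulator)."""

    def __init__(self, initial_bit: int = 0) -> None:
        self.q = initial_bit          # output of the first inverter
        self.q_bar = 1 - initial_bit  # output of the second inverter

    def settle(self) -> None:
        # Each inverter drives the other's input, so a valid state reproduces itself.
        self.q, self.q_bar = 1 - self.q_bar, 1 - self.q

    def write(self, bit: int, word_line: bool) -> None:
        # The access transistors connect the bit lines only when the word line is high.
        if word_line:
            self.q, self.q_bar = bit, 1 - bit

    def read(self, word_line: bool) -> Optional[int]:
        # With the word line low, the cell is isolated and nothing is read.
        return self.q if word_line else None


cell = SramCell()
cell.write(1, word_line=True)
for _ in range(1000):            # time passes with power applied; no refresh is needed
    cell.settle()
cell.write(0, word_line=False)   # word line low: this write is ignored
stored = cell.read(word_line=True)
print(stored)
```

The point of the sketch is that the stored value survives any number of `settle` steps without being rewritten, which is what "static" refers to.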

Because of its speed, SRAM is used where performance is paramount. Its primary application is as CPU cache memory (L1, L2, L3). These caches act as high-speed buffers between the ultra-fast processor and the slower main memory (DRAM), storing frequently accessed instructions and data to prevent CPU stalls. You’ll also find SRAM in small embedded systems, in networking equipment such as routers and switches (for fast lookup tables), and in other specialized hardware where speed outweighs cost considerations.

Part 2: Decoding DRAM – Dynamic Random-Access Memory

Dynamic Random-Access Memory (DRAM) is the workhorse of main system memory, and its full name points directly to its operational method. Unlike SRAM’s static hold, DRAM stores each bit of data as charge in a separate tiny capacitor within an integrated circuit. The term “dynamic” indicates that this charge is not stable; it leaks away over milliseconds. Therefore, to preserve data, each DRAM cell must be periodically refreshed (read and rewritten), typically every few tens of milliseconds (64 ms is a common refresh window). Because a chip contains thousands of rows, the memory controller ends up issuing refresh commands many thousands of times per second to cover them all.
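A rough way to see why refresh is needed is to model the capacitor as an exponentially leaking charge. The sketch below is illustrative only: the leakage time constant and the sense threshold are assumed values picked for the example, not datasheet figures, and the function names are hypothetical.

```python
import math
from typing import Optional

RETENTION_TAU_MS = 100.0     # assumed leakage time constant (illustrative only)
SENSE_THRESHOLD = 0.5        # assumed fraction of full charge still readable as a 1
REFRESH_INTERVAL_MS = 64.0   # a common refresh window for DDR-class DRAM


def charge_after(ms: float, start: float = 1.0) -> float:
    """Charge remaining after `ms` milliseconds of leakage."""
    return start * math.exp(-ms / RETENTION_TAU_MS)


def survives(total_ms: float, refresh_every_ms: Optional[float]) -> bool:
    """Return True if a stored 1 is still readable after `total_ms`."""
    charge, elapsed = 1.0, 0.0
    step = refresh_every_ms if refresh_every_ms else total_ms
    while elapsed < total_ms:
        dt = min(step, total_ms - elapsed)
        charge = charge_after(dt, charge)
        if charge < SENSE_THRESHOLD:
            return False           # the bit has decayed past the point of recovery
        if refresh_every_ms:
            charge = 1.0           # refresh: read the bit and rewrite it at full charge
        elapsed += dt
    return True


print(survives(1000.0, REFRESH_INTERVAL_MS))  # refreshed in time
print(survives(1000.0, None))                 # never refreshed
```

Under these assumed numbers the refreshed cell keeps its bit for a full second, while the unrefreshed cell loses it well before then.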

A standard DRAM cell consists of just one transistor and one capacitor (1T1C). This simplicity allows DRAM cells to be vastly smaller and denser than SRAM cells. Consequently, DRAM can offer much higher storage capacities (gigabytes) at a significantly lower cost per bit, which is why it dominates as the main memory (RAM) in computers, servers, and consoles. However, this simplicity comes with trade-offs: the refresh process introduces latency, and accessing data requires more complex steps (activating rows, then columns), making it slower than SRAM. Additionally, the constant refreshing consumes power even when the memory is idle.
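The "activate a row, then select a column" sequence can be sketched as follows. The bit widths and the `DramBank` class are hypothetical, chosen only to make the example concrete; real devices add banks, bank groups, and timing constraints that are omitted here.

```python
from typing import Optional, Tuple

ROW_BITS = 16      # hypothetical number of row address bits
COLUMN_BITS = 10   # hypothetical number of column address bits


def split_address(addr: int) -> Tuple[int, int]:
    """Split a flat cell address into (row, column)."""
    row = addr >> COLUMN_BITS
    column = addr & ((1 << COLUMN_BITS) - 1)
    return row, column


class DramBank:
    """Tracks which row is currently open in a single bank (toy model)."""

    def __init__(self) -> None:
        self.open_row: Optional[int] = None

    def access(self, addr: int) -> str:
        row, _column = split_address(addr)
        if self.open_row == row:
            return "row hit"       # only the column step is needed
        self.open_row = row        # close the old row, then activate the new one
        return "row miss"


bank = DramBank()
results = [bank.access(a) for a in (0, 5, 1024, 1030, 3)]
print(split_address(0x12345))
print(results)
```

Accesses that stay within the open row skip the activation step, which is one reason sequential access patterns tend to be faster on DRAM than scattered ones.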

Modern computing relies on various DRAM technologies like DDR SDRAM (Double Data Rate Synchronous DRAM), which synchronizes with the system clock and transfers data on both the rising and falling edges of the clock signal. Generations like DDR4 and DDR5 continue to evolve, pushing speeds and efficiencies further while maintaining the fundamental “dynamic” refresh-based architecture.
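The effect of transferring on both clock edges shows up directly in the peak-bandwidth arithmetic. The helper below is a minimal sketch; the function name is hypothetical, and the 64-bit bus width is the usual data width of a DDR4 module channel, used here as a default assumption.

```python
def peak_bandwidth_gb_s(io_clock_mhz: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s for a double-data-rate interface."""
    transfers_per_second = io_clock_mhz * 1e6 * 2   # two transfers per clock cycle
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


# DDR4-3200 runs a 1600 MHz I/O clock, giving 3200 million transfers per second.
ddr4_3200 = peak_bandwidth_gb_s(1600)
print(round(ddr4_3200, 1))
```

This is a theoretical ceiling of roughly 25.6 GB/s per channel; sustained throughput is lower because of refresh, row activations, and bus turnaround.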

Part 3: SRAM vs. DRAM – A Comparative Analysis in System Architecture

The coexistence of SRAM and DRAM is a masterclass in engineering trade-offs within a memory hierarchy designed for optimal performance and cost.

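- Speed & Performance: SRAM is faster, with access times as low as about a nanosecond for the fastest on-die caches, enabling it to keep pace with multi-GHz processors. DRAM access typically takes tens of nanoseconds, because a row must be activated and sensed before a column can be read, and because refresh operations occasionally delay requests.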

- Density & Cost: DRAM wins decisively in storage density and cost-effectiveness. Its simple 1T1C structure allows for gigabit chips at affordable prices, making high-capacity RAM modules feasible.

- Power Consumption: SRAM consumes less power when idle but can have high dynamic power during switching. DRAM has lower active power per cell but suffers from constant background refresh power drain.

- Volatility: Both are volatile; they lose all data when power is removed.

- System Role: They are complementary, not competitive. A modern system uses a small amount of fast, expensive SRAM as cache directly on or near the CPU die, and a large pool of slower, cheaper DRAM as the main system memory. This hierarchy creates an effective illusion of a large, fast memory space.
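The "illusion of a large, fast memory" can be quantified with the standard average memory access time formula (hit time plus miss rate times miss penalty). The latencies and hit rates below are illustrative assumptions, not measurements of any particular system.

```python
def average_access_time_ns(hit_rate: float, cache_ns: float, dram_ns: float) -> float:
    """Average access time: cache hit time plus miss rate times DRAM penalty."""
    miss_rate = 1.0 - hit_rate
    return cache_ns + miss_rate * dram_ns


# Assumed figures: 1 ns SRAM cache, 60 ns DRAM, varying cache hit rates.
for hit_rate in (0.50, 0.90, 0.95, 0.99):
    amat = average_access_time_ns(hit_rate, cache_ns=1.0, dram_ns=60.0)
    print(f"hit rate {hit_rate:.0%}: about {amat:.1f} ns on average")
```

With a high hit rate, the average access time stays close to the SRAM latency even though almost all of the capacity is DRAM.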

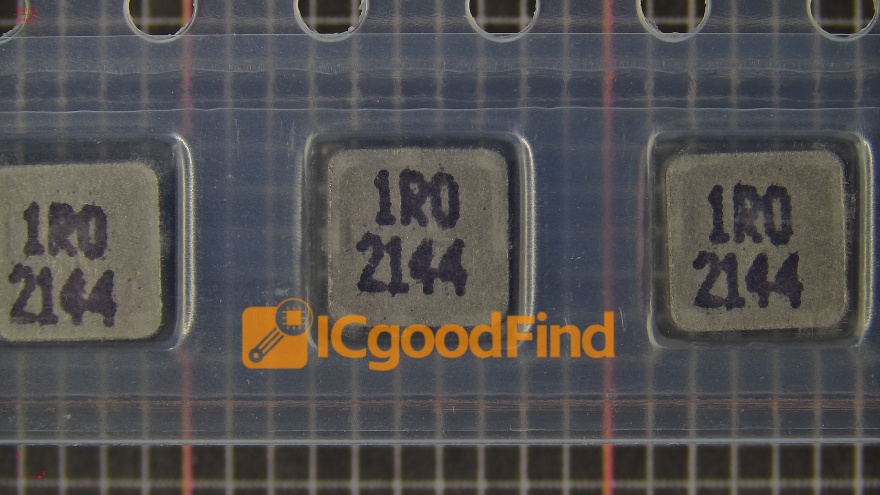

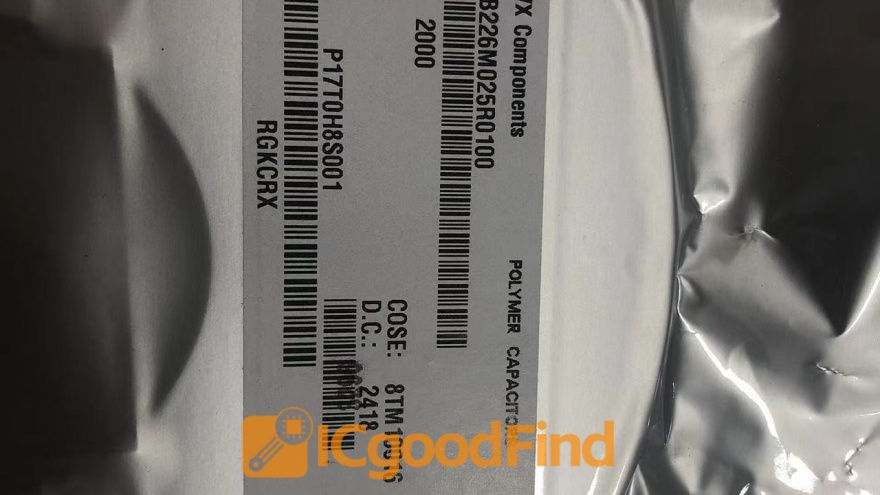

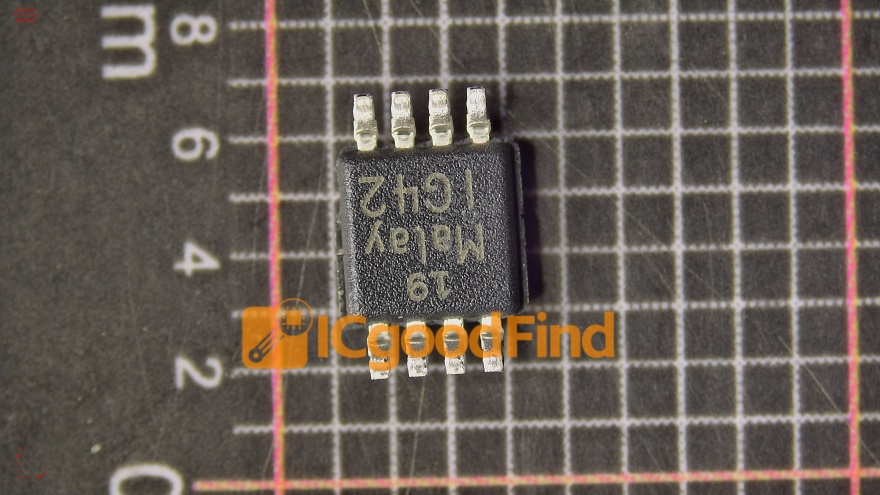

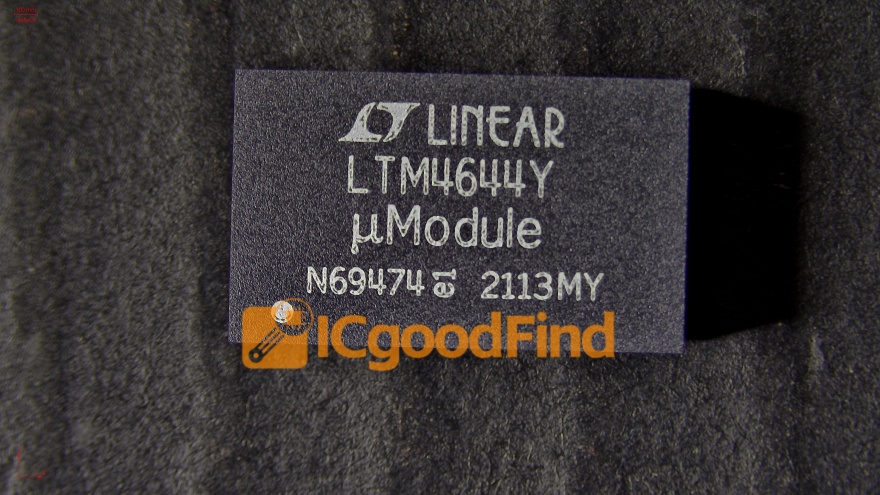

For professionals seeking detailed component specifications or sourcing specialized memory chips for design projects, platforms like ICGOODFIND can be invaluable resources for finding accurate datasheets and reliable suppliers in the complex electronics supply chain.

Conclusion

The journey from acronym to full name—from SRAM to Static Random-Access Memory and from DRAM to Dynamic Random-Access Memory—unlocks a fundamental understanding of computer architecture. SRAM’s static, transistor-based latching provides blistering speed for critical cache duties, while DRAM’s dynamic, capacitor-based storage offers the high-density, economical solution required for main memory. One is not “better” than the other; they are ingeniously optimized for different roles within the same ecosystem. As technology advances with new paradigms like non-volatile RAM (NVRAM), the foundational principles embodied by SRAM and DRAM will continue to underpin the design of every computing device for the foreseeable future.