Computer DRAM: The Essential Engine of Modern Computing

Introduction

In the intricate symphony of a computer’s operation, where the processor (CPU) acts as the conductor, one component works at blistering speed to keep every instruction fed with data: DRAM, or Dynamic Random-Access Memory. Often simply called “RAM,” DRAM is the primary volatile memory in computing systems, serving as the workspace where the CPU accesses active data and program code. Unlike storage drives (HDDs or SSDs), which hold data long-term, DRAM loses its contents when power is removed but delivers data in tens of nanoseconds, orders of magnitude faster than even a fast SSD. Its performance and capacity are fundamental determinants of a system’s responsiveness, multitasking ability, and overall user experience. This article covers the workings, evolution, and practical importance of DRAM in contemporary computing.

The Core Mechanics and Function of DRAM

DRAM operates on a deceptively simple principle: each bit of data is stored in a tiny capacitor, paired with a single access transistor, within an integrated circuit. This capacitor can either be charged (representing a ‘1’) or discharged (representing a ‘0’). However, these capacitors leak charge over time. Hence the term “Dynamic”: every cell must be read and rewritten (refreshed) periodically, typically once every 32 to 64 milliseconds, to prevent information loss. This refresh requirement is a key differentiator from its cousin, SRAM (Static RAM), which holds each bit in a multi-transistor latch (typically six transistors) that needs no refresh. Refresh adds complexity, but the one-transistor, one-capacitor cell is much smaller, enabling higher densities and lower cost per bit.
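To give a feel for what refresh costs, here is a minimal back-of-the-envelope sketch. The figures are assumptions typical of DDR4-class devices rather than values from this article: a 64 ms retention window, 8192 refresh commands per window, and a hypothetical 350 ns busy time per command (the real value depends on chip density).

```python
# Back-of-the-envelope DRAM refresh arithmetic.
# All three constants are assumed, illustrative values (see lead-in).
RETENTION_WINDOW_MS = 64.0   # every row must be refreshed within this window
REFRESH_COMMANDS = 8192      # refresh commands issued per window
T_RFC_NS = 350.0             # assumed busy time per refresh command


def refresh_interval_us(window_ms: float, commands: int) -> float:
    """Average spacing between refresh commands, in microseconds."""
    return window_ms * 1000.0 / commands


def refresh_overhead(window_ms: float, commands: int, t_rfc_ns: float) -> float:
    """Fraction of time the device is busy refreshing instead of serving requests."""
    busy_ns = commands * t_rfc_ns
    window_ns = window_ms * 1_000_000.0
    return busy_ns / window_ns


if __name__ == "__main__":
    interval = refresh_interval_us(RETENTION_WINDOW_MS, REFRESH_COMMANDS)
    overhead = refresh_overhead(RETENTION_WINDOW_MS, REFRESH_COMMANDS, T_RFC_NS)
    print(f"one refresh command every {interval:.4f} us")
    print(f"time spent refreshing: {overhead:.2%}")
```

Under these assumptions a refresh command is issued roughly every 7.8 microseconds, and the device spends a few percent of its time refreshing rather than serving requests.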

The role of DRAM is best understood within the memory hierarchy. The CPU cache (built from SRAM) is extremely fast but small and expensive. Storage is vast and cheap but slow. DRAM sits squarely in the middle as the high-speed, main memory pool. When you open an application or file, it is loaded from storage into DRAM. The CPU then pulls the necessary instructions and data from DRAM into its caches for processing. The speed of this interaction is paramount. Two primary metrics define DRAM performance:

- Latency: The time delay between a memory request and when the data is available. Lower latency means quicker response.

- Bandwidth: The total amount of data that can be transferred per second. Higher bandwidth allows more data to flow to satisfy multi-core processors.

Because active programs and their data must reside in DRAM to be used, capacity is a direct enabler of multitasking. More RAM allows a system to hold more applications, browser tabs, and large files (like videos or complex designs) in an instantly accessible state, preventing constant, slow swapping of data to and from the storage drive.
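The cost of swapping can be illustrated with a simple expected-access-time model of the hierarchy described above. This is only a sketch: the hit rates and latencies below are made-up, order-of-magnitude assumptions, and the function name is hypothetical.

```python
# Expected access time across a cache -> DRAM -> storage hierarchy.
# Each level is (name, hit_rate, latency_ns); the last level must have
# hit_rate 1.0 because every remaining request is served there.
# All numbers are illustrative assumptions, not measurements.


def average_access_time_ns(levels):
    """Weight each level's latency by the probability a request is served there."""
    expected = 0.0
    reach = 1.0  # probability that a request gets this far down the hierarchy
    for _name, hit_rate, latency_ns in levels:
        expected += reach * hit_rate * latency_ns
        reach *= 1.0 - hit_rate
    return expected


ENOUGH_RAM = [
    ("cache", 0.95, 2.0),
    ("dram", 0.999, 80.0),      # almost everything not in cache is in DRAM
    ("ssd", 1.0, 100_000.0),
]

TOO_LITTLE_RAM = [
    ("cache", 0.95, 2.0),
    ("dram", 0.99, 80.0),       # more requests spill over to storage
    ("ssd", 1.0, 100_000.0),
]

if __name__ == "__main__":
    print(f"enough RAM:     {average_access_time_ns(ENOUGH_RAM):.3f} ns")
    print(f"too little RAM: {average_access_time_ns(TOO_LITTLE_RAM):.3f} ns")
```

In this toy model, letting just one percent of cache misses fall through to storage instead of a tenth of a percent raises the average access time about fivefold, which is why running out of RAM feels so abrupt.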

Evolution and Generations: From SDRAM to DDR5

DRAM technology has not stood still; it has evolved through distinct generations to keep pace with insatiable processor demands. The modern era began with Synchronous DRAM (SDRAM), which synchronized itself with the CPU’s bus clock, improving efficiency.

The breakthrough came with DDR (Double Data Rate) SDRAM. As the name suggests, DDR memory transfers data on both the rising and falling edges of the clock signal, effectively doubling the bandwidth without increasing the clock frequency. This innovation set the template for all subsequent advancements:

- DDR2: Lowered the supply voltage (1.8 V, down from DDR’s 2.5 V) and raised data rates by running the I/O bus faster than the memory core, with a 4n prefetch.

- DDR3: Further reduced voltage (1.5 V) and deepened the prefetch buffer to 8n, offering significant bandwidth gains and becoming the mainstream standard for years.

- DDR4: Marked another leap with higher module densities, even lower voltage (1.2 V), and higher transfer speeds, becoming the mainstream standard from around 2015 onward.

- DDR5: The current cutting-edge standard. DDR5 redesigns the architecture by splitting each module’s 64-bit channel into two independent 32-bit sub-channels. It offers dramatically higher bandwidth (potentially doubling that of DDR4), greatly increased density per module (allowing for 128GB+ sticks), and power management moved onto the module itself via a power management IC (PMIC), at a nominal 1.1 V. This is crucial for data-intensive workloads in high-performance computing, advanced gaming, and AI.
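The bandwidth growth across generations follows from simple arithmetic: peak bandwidth is the data rate multiplied by the bus width. The sketch below assumes a standard 64-bit (8-byte) module data bus and picks one common speed grade per generation purely for illustration; the function names are hypothetical.

```python
# Theoretical peak bandwidth of one 64-bit memory channel, per generation.
BUS_WIDTH_BYTES = 8  # 64-bit data bus (DDR5's two 32-bit sub-channels also total 64 bits)

# One illustrative speed grade per generation (chosen for this example).
EXAMPLE_DATA_RATES_MTS = {
    "DDR-400": 400,
    "DDR2-800": 800,
    "DDR3-1600": 1600,
    "DDR4-3200": 3200,
    "DDR5-6400": 6400,
}


def peak_bandwidth_mb_s(data_rate_mts: int, bus_width_bytes: int = BUS_WIDTH_BYTES) -> int:
    """Peak transfer rate in MB/s: transfers per second times bytes per transfer."""
    return data_rate_mts * bus_width_bytes


def io_clock_mhz(data_rate_mts: int) -> float:
    """DDR moves data on both clock edges, so the I/O clock is half the data rate."""
    return data_rate_mts / 2


if __name__ == "__main__":
    for name, rate in EXAMPLE_DATA_RATES_MTS.items():
        print(f"{name:>10}: {io_clock_mhz(rate):7.1f} MHz clock, "
              f"{peak_bandwidth_mb_s(rate):6d} MB/s peak")
```

These are theoretical ceilings per channel; sustained bandwidth in practice is lower because of refresh, bank conflicts, and read/write turnarounds.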

Each generation is physically and electrically incompatible with the last: modules are keyed differently so they cannot be inserted into the wrong slot, and each generation requires matching motherboard and CPU support. This steady push for speed and efficiency reflects DRAM’s need to keep pace with the processor gains associated with Moore’s Law.

Choosing the Right DRAM: Considerations for Users and Professionals

Selecting DRAM is no longer just about capacity. For system builders, IT professionals, and enthusiasts, several intertwined factors must be considered:

- Capacity: The foundational choice. For basic computing, 8GB is often the minimum. For general productivity and gaming, 16GB has become the sweet spot. Content creators, engineers running simulations, and serious multitaskers should consider 32GB or more to avoid bottlenecks.

- Generation and Speed: You must choose the generation your platform supports (e.g., DDR4 or DDR5). Within that generation, speed matters. It is specified in megatransfers per second (MT/s) and often loosely marketed as MHz; a DDR5-6000 kit, for example, performs 6000 MT/s on a 3000 MHz I/O clock, because data moves on both clock edges. Higher speeds boost bandwidth but must be supported by both the CPU’s memory controller and the motherboard.

- Latency: Timings are listed as a series of numbers (e.g., CL36-36-36-76). The first number, CAS Latency (CL), is usually the most important. It is counted in clock cycles, so CL values are only directly comparable between kits running at the same speed; all else being equal, a lower CL indicates lower latency. There is a balance between speed and latency: a faster kit with a slightly higher CL can match or beat a slower kit with tighter timings, because each of its cycles is shorter.

- Channels and DIMMs: Modern platforms support dual-channel architectures (or quad-channel and beyond on HEDT and server platforms). Populating memory in matched pairs (e.g., 2x8GB instead of 1x16GB) enables dual-channel mode, doubling the theoretical peak bandwidth. The real-world gain depends on how bandwidth-bound the workload is.

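- Error-Correcting Code (ECC): Crucial for workstations and servers where data integrity is non-negotiable. ECC RAM stores extra check bits alongside the data, which lets the memory controller correct single-bit errors on the fly and detect (but not correct) double-bit errors, preventing silent corruption and many crashes. It requires CPU and motherboard support.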
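The speed, latency, and channel considerations above can be compared with a little arithmetic. The sketch below converts CAS latency from clock cycles to nanoseconds and scales peak bandwidth by channel count. The kits listed are hypothetical examples, the function names are made up for illustration, and a 64-bit channel is assumed.

```python
# Compare memory kits by true CAS latency (ns) and theoretical peak bandwidth.


def first_word_latency_ns(cas_latency: int, data_rate_mts: int) -> float:
    """Convert CAS latency from clock cycles to nanoseconds.

    The I/O clock in MHz is half the data rate, so one cycle lasts
    2000 / data_rate nanoseconds.
    """
    return cas_latency * 2000.0 / data_rate_mts


def peak_bandwidth_gb_s(data_rate_mts: int, channels: int = 1,
                        bus_width_bytes: int = 8) -> float:
    """Theoretical peak bandwidth in GB/s across all populated channels."""
    return data_rate_mts * bus_width_bytes * channels / 1000.0


# Hypothetical kits: (label, data rate in MT/s, CAS latency in cycles).
EXAMPLE_KITS = [
    ("DDR4-3200 CL16", 3200, 16),
    ("DDR5-4800 CL40", 4800, 40),
    ("DDR5-6000 CL36", 6000, 36),
]

if __name__ == "__main__":
    for label, rate, cl in EXAMPLE_KITS:
        print(f"{label}: {first_word_latency_ns(cl, rate):5.2f} ns CAS latency, "
              f"{peak_bandwidth_gb_s(rate, channels=1):5.1f} GB/s single-channel, "
              f"{peak_bandwidth_gb_s(rate, channels=2):5.1f} GB/s dual-channel")
```

With these example numbers, the DDR5-6000 CL36 kit has a lower CAS latency in nanoseconds than the DDR5-4800 CL40 kit despite the similar cycle count, which is the speed-versus-timings trade-off in concrete terms. CAS latency is only one component of total access time, so treat the result as a rough comparison rather than a benchmark.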

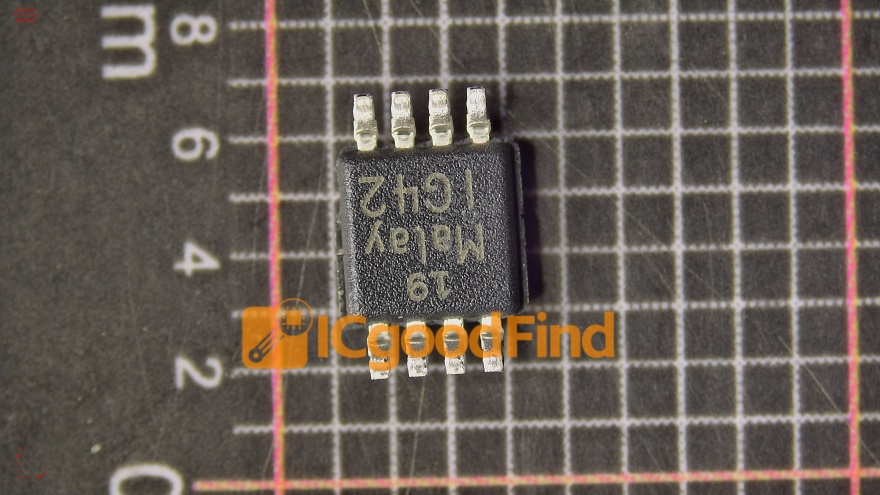

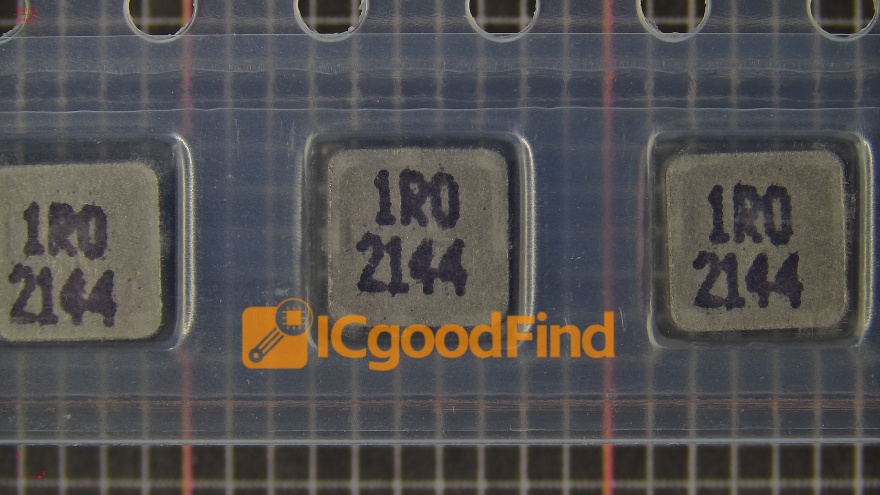

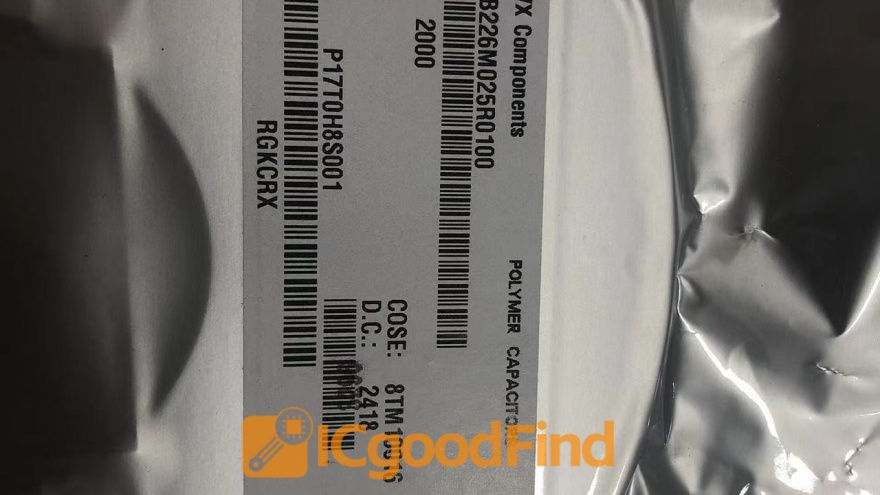

For those navigating this complex landscape—from sourcing components for an enterprise server farm to building a bespoke gaming rig—reliable information is key. This is where platforms dedicated to component discovery and technical specifications become invaluable resources for making informed decisions.

Conclusion

Computer DRAM is far more than just a spec on a box; it is the vital conduit through which all active computational data must flow. From enabling the smooth operation of everyday applications to empowering breakthroughs in scientific research and artificial intelligence by feeding vast datasets to hungry processors, DRAM’s importance cannot be overstated. Its evolution from SDRAM to DDR5 mirrors the exponential growth in computing power itself, constantly adapting to bridge the gap between processor speed and storage inertia.

As we move into an era defined by parallel processing, big data, and real-time analytics, the demands on main memory will only intensify. Innovations in packaging (like 3D-stacked DRAM placed close to the processor) and new interconnects for attaching, expanding, and pooling memory (such as CXL) are already arriving. Understanding DRAM, including its principles, its specifications, and its role, is essential for anyone involved in designing, building, purchasing, or simply maximizing the potential of modern computer systems.
