DRAM: What Is It? Understanding the Memory That Powers Your Devices

Introduction

In the intricate ecosystem of modern computing, from the smartphone in your pocket to the sprawling data centers powering the internet, one component plays a universally critical yet often overlooked role: Dynamic Random-Access Memory (DRAM). Often simply called “memory” or “RAM” in consumer contexts, DRAM is the high-speed, temporary workspace where your device’s processor keeps the active data and programs it needs in the moment. Unlike permanent storage (like SSDs or hard drives), DRAM is volatile—it loses all its data when power is turned off. But this volatility is a trade-off for its incredible speed, which is essential for system responsiveness and performance. This article delves into the fundamentals of DRAM, how it works, its evolution, and why it remains indispensable in our digital world.

The Core Mechanics: How DRAM Works

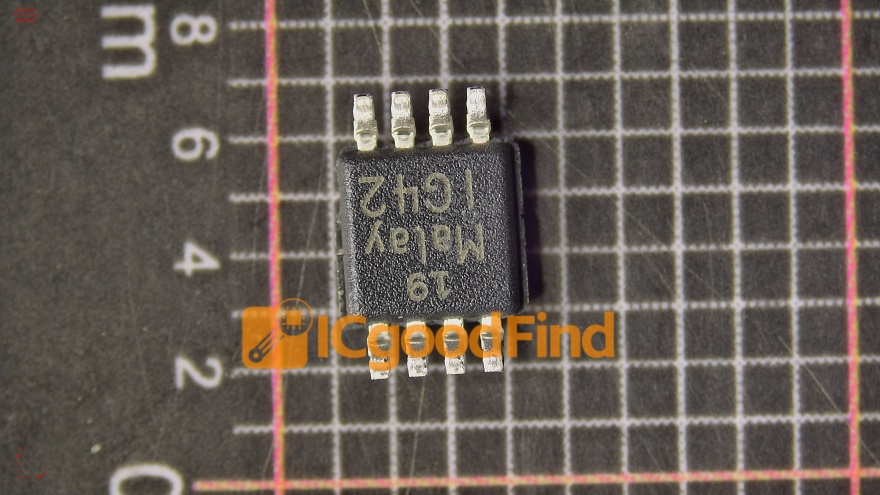

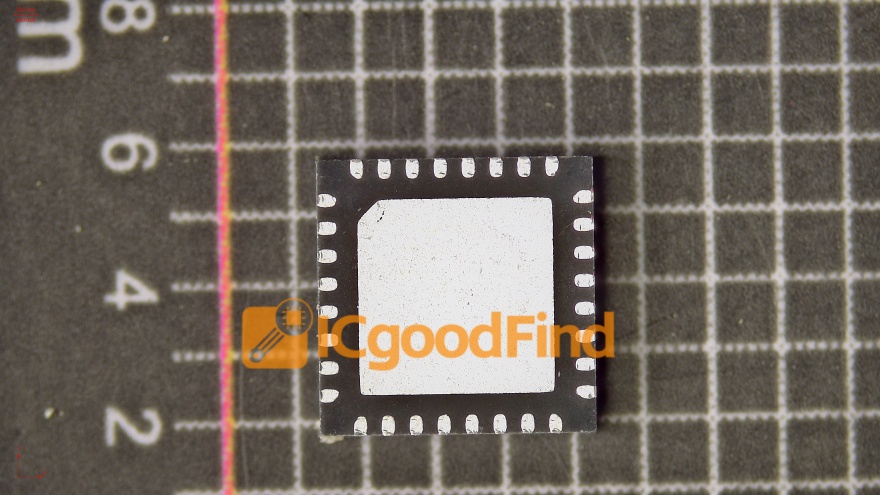

At its heart, DRAM is a type of semiconductor memory that stores each bit of data in a separate tiny capacitor within an integrated circuit. A capacitor can be thought of as a microscopic bucket that holds electrical charge. If the “bucket” is charged, it represents a binary ‘1’; if it’s discharged, it represents a ‘0’.

This design is elegantly simple but comes with a fundamental challenge: the capacitors leak charge, and the weakest cells can lose their stored value within tens of milliseconds. To prevent data loss, every row of cells must be periodically refreshed by reading it and rewriting the data, typically at least once every 64 ms in standard DDR devices. This “dynamic” refresh requirement is what gives DRAM its name and distinguishes it from Static RAM (SRAM), which stores each bit in a more complex circuit (a flip-flop, typically built from six transistors) that needs no refresh but takes up more physical space and costs more per bit. SRAM is used for small, ultra-fast cache memory inside processors, while DRAM serves as the main, bulk system memory.
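The leak-and-refresh cycle can be illustrated with a toy model. The sketch below is only an illustration: the exponential leak, the 200 ms time constant, and the 0.5 sense threshold are assumptions chosen for readability, not figures for any real device, and `DramCell` and `survives` are hypothetical names.

```python
import math


class DramCell:
    """Toy model of one DRAM cell: a leaky capacitor read against a threshold."""

    def __init__(self, tau_ms=200.0, threshold=0.5):
        self.tau_ms = tau_ms        # assumed leak time constant (illustrative)
        self.threshold = threshold  # assumed sense threshold, fraction of full charge
        self.charge = 0.0

    def write(self, bit):
        # Writing fully charges (1) or fully discharges (0) the capacitor.
        self.charge = 1.0 if bit else 0.0

    def leak(self, elapsed_ms):
        # Charge decays exponentially while the cell sits idle.
        self.charge *= math.exp(-elapsed_ms / self.tau_ms)

    def read(self):
        return 1 if self.charge > self.threshold else 0

    def refresh(self):
        # Refresh = read the (possibly weakened) value, then rewrite it at full strength.
        self.write(self.read())


def survives(refresh_interval_ms, total_ms=1000.0):
    """Return True if a stored 1 is still readable after total_ms of leak/refresh cycles."""
    cell = DramCell()
    cell.write(1)
    elapsed = 0.0
    while elapsed < total_ms:
        cell.leak(refresh_interval_ms)
        cell.refresh()
        elapsed += refresh_interval_ms
    return cell.read() == 1


print(survives(64.0))   # True: refreshed before the charge crosses the threshold
print(survives(200.0))  # False: the charge decays too far and the 1 is read back as 0
```

With these assumed numbers, the stored 1 survives as long as each refresh arrives before the charge drops below the threshold. Once the interval is too long, the refresh itself rewrites the wrong value.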

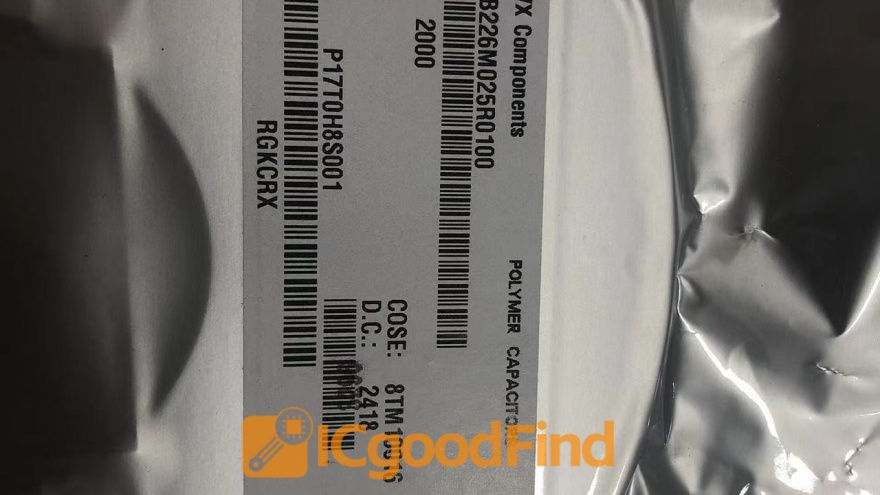

The basic organizational structure of a DRAM chip is a grid of memory cells, each a capacitor paired with an access transistor. To access data, the memory controller sends a row address, which activates an entire row of cells and latches its contents into the sense amplifiers (the row buffer). A column address then selects specific bits from that row to read or write. This architecture allows for efficient management of vast arrays of memory cells. The memory controller manages the constant cycle of activating rows, reading or writing columns, and precharging the bitlines for the next operation with precise timing. For those involved in sourcing these critical components across complex supply chains, platforms like ICGOODFIND provide invaluable services by connecting buyers with verified suppliers, ensuring access to genuine DRAM modules and other essential semiconductors.
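As a rough illustration of the row-then-column access pattern, the following sketch models a single bank with one open row at a time. `DramBank`, its 8x8 geometry, and the flat-address split via `divmod` are assumptions made for the example. Real devices add banks, bank groups, timing constraints, and a destructive read that the sense amplifiers restore, none of which is modeled here.

```python
class DramBank:
    """Toy DRAM bank: a grid of bits accessed through a single row buffer."""

    def __init__(self, rows=8, cols=8):
        self.cols = cols
        self.cells = [[0] * cols for _ in range(rows)]
        self.open_row = None    # index of the currently activated row, if any
        self.row_buffer = None  # copy of that row held by the sense amplifiers
        self.activations = 0    # how many row activations have been issued

    def activate(self, row):
        # Row address step: latch the whole row into the row buffer.
        self.row_buffer = list(self.cells[row])
        self.open_row = row
        self.activations += 1

    def precharge(self):
        # Close the open row so another row can be activated.
        self.open_row = None
        self.row_buffer = None

    def _open(self, row):
        # A "row hit" needs no work; a "row miss" costs a precharge plus an activate.
        if self.open_row != row:
            self.precharge()
            self.activate(row)

    def read(self, address):
        row, col = divmod(address, self.cols)
        self._open(row)
        return self.row_buffer[col]  # column address step

    def write(self, address, bit):
        row, col = divmod(address, self.cols)
        self._open(row)
        self.row_buffer[col] = bit
        self.cells[row][col] = bit   # simplification: the cell is updated immediately


bank = DramBank()
bank.write(10, 1)        # address 10 -> row 1, column 2 (first activation)
print(bank.read(10))     # 1, served from the already-open row
print(bank.read(20))     # 0, row 2 must be activated first
print(bank.activations)  # 2
```

Counting activations shows why access patterns matter: consecutive accesses to the same row reuse the row buffer, while jumping between rows pays for a precharge and a new activation each time.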

Evolution and Types: From SDRAM to DDR5

DRAM technology has not stood still; it has evolved dramatically to keep pace with ever-increasing processor speeds and data demands. The journey began with asynchronous DRAM, which was later superseded by Synchronous DRAM (SDRAM). SDRAM synchronizes itself with the computer’s system clock, allowing for more efficient command pipelining and higher performance.

The most significant evolutionary leap came with Double Data Rate SDRAM (DDR SDRAM). As the name suggests, DDR memory transfers data on both the rising and falling edges of the clock signal, effectively doubling the data rate without increasing the clock frequency. This innovation set the stage for successive generations:

- DDR2: Lowered the operating voltage (to 1.8 V, down from 2.5 V for the original DDR) and raised I/O clock speeds.

- DDR3: Further reduced voltage (to 1.5 V) and doubled the prefetch buffer to 8 bits for higher transfer rates.

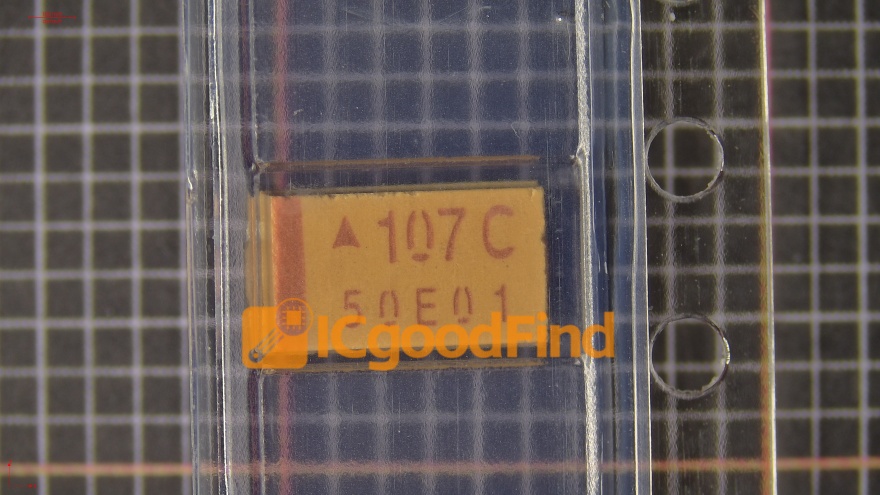

- DDR4: Brought another reduction in operating voltage (to 1.2 V), increased density, and introduced bank groups for improved performance.

- DDR5: The current mainstream standard for new systems, DDR5 substantially increases bandwidth and capacity. It operates at a lower voltage (1.1 V), doubles the burst length to 16, and splits each module into two independent 32-bit sub-channels, enabling much higher data transfer rates and improved power management.

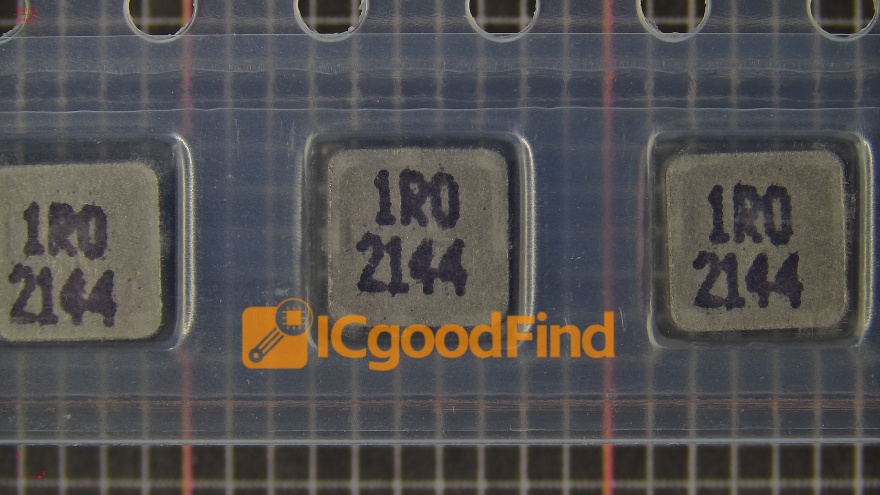
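The effect of transferring on both clock edges can be made concrete with a little arithmetic: theoretical peak bandwidth is the I/O clock rate times transfers per clock times the bus width in bytes. The helper below is a sketch with a hypothetical name, `peak_bandwidth_gbs`. It assumes a standard 64-bit module data bus and reports theoretical peaks, which real workloads do not reach.

```python
def peak_bandwidth_gbs(clock_mhz, bus_width_bits=64, transfers_per_clock=2):
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes).

    clock_mhz is the I/O clock; DDR performs two transfers per clock cycle,
    single data rate SDRAM performs one.
    """
    transfers_per_second = clock_mhz * 1e6 * transfers_per_clock
    return transfers_per_second * bus_width_bits / 8 / 1e9


# Same 1600 MHz clock, single versus double data rate:
print(round(peak_bandwidth_gbs(1600, transfers_per_clock=1), 1))  # 12.8
print(round(peak_bandwidth_gbs(1600), 1))                         # 25.6 (DDR4-3200)

# DDR5-4800: 2400 MHz I/O clock, two 32-bit sub-channels = 64 data bits in total
print(round(peak_bandwidth_gbs(2400), 1))                         # 38.4
```

The number in a module name such as DDR4-3200 is the transfer rate in megatransfers per second, which is twice the I/O clock frequency.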

Beyond mainstream DDR memory for PCs and servers, specialized forms of DRAM have emerged:

- Graphics DDR (GDDR): Optimized for high bandwidth in graphics cards (GPUs), with wider buses and higher per-pin data rates tailored for parallel processing workloads.

- Low-Power DDR (LPDDR): Designed for mobile and portable devices. LPDDR4X and LPDDR5 are crucial for smartphones and tablets, prioritizing power efficiency through features like deep sleep states and dynamic voltage scaling.

- High-Bandwidth Memory (HBM): A 3D-stacked architecture where DRAM dies are stacked vertically and connected by through-silicon vias (TSVs). This provides enormous bandwidth in a very small physical footprint, making it ideal for high-performance computing (HPC), advanced GPUs, and AI accelerators.

The Critical Role of DRAM in Modern Computing

The importance of DRAM cannot be overstated; it is a primary determinant of overall system performance. When you open an application or file, it is copied from slow permanent storage into fast DRAM so the CPU can work with it quickly. If you have too little DRAM for your tasks, the system is forced to swap data back and forth to a much slower “page file” (or swap space) on disk, leading to severe slowdowns. In the extreme case, where the system spends more time swapping than doing useful work, this is known as “thrashing.”
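A back-of-the-envelope model shows why even occasional swapping hurts so much. The sketch below uses the standard weighted-average formula for effective access time. The 100 ns DRAM access and 100 µs page-fault service time are illustrative assumptions rather than measurements, and `effective_access_ns` is a hypothetical helper name.

```python
def effective_access_ns(fault_rate, dram_ns=100.0, fault_service_ns=100_000.0):
    """Average time per memory access when a fraction of accesses page-fault.

    dram_ns and fault_service_ns are illustrative assumptions, not measurements.
    """
    if not 0.0 <= fault_rate <= 1.0:
        raise ValueError("fault_rate must be between 0 and 1")
    # Weighted average of the fast path (DRAM hit) and the slow path (page fault).
    return (1.0 - fault_rate) * dram_ns + fault_rate * fault_service_ns


for rate in (0.0, 0.001, 0.01, 0.1):
    slowdown = effective_access_ns(rate) / effective_access_ns(0.0)
    print(f"fault rate {rate:>5}: about {slowdown:.1f}x slower than pure DRAM")
```

Under these assumptions, a fault on just one access in a thousand roughly doubles the average access time, because the slow path is about a thousand times slower than the fast one.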

In today’s computing landscape, DRAM’s role has expanded:

- Multitasking: More DRAM allows you to run more applications simultaneously without performance degradation.

- Gaming: Modern games load massive textures and assets into DRAM for seamless rendering. High-speed DDR5 or GDDR6X memory is key to high frame rates.

- Content Creation & Data Science: Applications like video editing software, 3D renderers, and data analytics tools work with enormous datasets that must reside in RAM for real-time processing.

- Servers and Data Centers: Here, vast pools of DRAM are essential for virtualization, in-memory databases (like SAP HANA), web caching, and cloud services. Server memory often includes additional features like Error-Correcting Code (ECC) to detect and correct data corruption.

- Artificial Intelligence: Training large neural networks requires moving colossal amounts of data. HBM and high-capacity DDR5 modules are critical in reducing bottlenecks between processors and memory during AI training and inference.
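To give a feel for how ECC can detect and correct a flipped bit, here is a minimal Hamming(7,4) sketch. This is a teaching-sized stand-in, not the code used on real modules: server ECC memory typically protects each 64-bit word with 8 extra check bits using a wider single-error-correcting, double-error-detecting code. The function names are hypothetical.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits as a 7-bit codeword laid out as p1 p2 d1 p3 d2 d3 d4."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # parity over codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over codeword positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over codeword positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_correct(codeword):
    """Return (data_bits, syndrome); syndrome is the 1-based error position or 0."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s3
    if syndrome:
        c[syndrome - 1] ^= 1  # flip the bit the syndrome points at
    return [c[2], c[4], c[5], c[6]], syndrome


word = [1, 0, 1, 1]
stored = hamming74_encode(word)
stored[4] ^= 1  # simulate a single bit flip in memory (codeword position 5)
recovered, position = hamming74_correct(stored)
print(recovered == word, position)  # True 5
```

The parity bits are arranged so that the pattern of failed checks spells out the position of the flipped bit, which is what lets the memory fix a single-bit error without knowing the original data.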

The performance synergy between the CPU, DRAM, and storage (often now an NVMe SSD) defines the user experience. A fast processor can be severely hamstrung by slow or insufficient memory.

Conclusion

From its simple foundation of charged capacitors requiring constant refresh to the sophisticated 3D-stacked architectures of HBM, DRAM has been a cornerstone of computing innovation for decades. It is the dynamic, fast-paced workspace that bridges the gap between lightning-fast processors and slower permanent storage. Understanding what DRAM is—a volatile, high-speed temporary data store—helps explain why adding more RAM often feels like “speeding up” your computer.

As we push further into eras defined by AI, real-time analytics, and immersive computing, the demands on memory bandwidth and capacity will only intensify. The evolution from DDR4 to DDR5 and beyond, alongside specialized formats like LPDDR5 for mobility and HBM for acceleration, showcases the industry’s relentless drive to overcome the “memory wall.” In this complex global supply chain where securing reliable components is paramount for manufacturers, utilizing comprehensive sourcing tools becomes essential. Platforms such as ICGOODFIND facilitate this process by offering efficient access to a network of trusted suppliers for DRAM chips and modules across all its evolving specifications.