What Is DRAM Memory? A Comprehensive Guide

Introduction

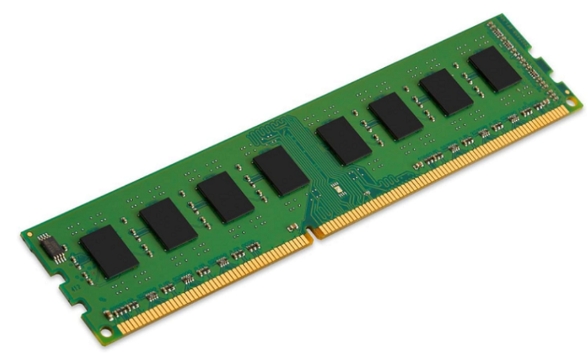

At the heart of every modern computer, smartphone, and server lies a component that largely determines the system’s speed and responsiveness: DRAM. Although it is often mentioned alongside storage drives and processors, its specific role is frequently misunderstood. Put simply, DRAM (Dynamic Random-Access Memory) is the primary, volatile working memory of a computing device. It is the workspace where the system temporarily holds the program code and data that are actively in use. Compared with persistent storage such as SSDs and hard drives, DRAM is far faster, but it loses its contents when power is removed. This article explains the fundamental principles of DRAM, how it is organized and accessed, which types exist, and why it remains indispensable in our digital world. For those seeking in-depth technical insights or reliable component information, platforms like ICGOODFIND offer resources and analysis on semiconductor components, including memory technologies.

The Core Principles of DRAM

To understand DRAM’s function, one must first grasp its basic operational principles. The term “Dynamic” is the key differentiator from its cousin, SRAM (Static RAM).

At its most fundamental level, a DRAM cell stores a single bit of data (a 0 or a 1) as electrical charge on a tiny capacitor. The capacitor is paired with an access transistor that acts as a gatekeeper, connecting the capacitor to the bit line only when the cell is being read or written. The stored charge is not permanent: it gradually leaks away, and the DRAM specifications only guarantee that a cell holds its value for a few tens of milliseconds. This is the “dynamic” aspect: the data fades unless it is periodically refreshed. The memory controller issues refresh commands at regular intervals, and circuitry inside the DRAM chip reads each row and writes it back at full strength. In DDR3 and DDR4 at normal operating temperatures, every row must be refreshed within a 64 ms window, which works out to roughly 16 refreshes per cell per second. Refresh is essential, but it costs some performance, because a bank that is busy refreshing cannot serve reads or writes.
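To put the refresh figures in perspective, here is a back-of-the-envelope calculation in Python. It assumes typical DDR4 values (a 64 ms retention window covered by 8,192 refresh commands, and a refresh cycle time of 350 ns, as for an 8 Gb device); real parts, densities, and operating temperatures vary, so treat the results as illustrative rather than as datasheet numbers.

```python
# Assumed DDR4-style parameters (illustrative; real values depend on the
# device density, the DRAM standard, and the operating temperature).
RETENTION_WINDOW_MS = 64.0  # every row must be refreshed within this window
REFRESH_COMMANDS = 8192     # refresh commands spread evenly over the window
T_RFC_NS = 350.0            # assumed time one refresh keeps the rank busy


def refresh_interval_us(window_ms: float, commands: int) -> float:
    """Average gap between refresh commands (tREFI), in microseconds."""
    return window_ms * 1000.0 / commands


def refresh_overhead(t_rfc_ns: float, t_refi_us: float) -> float:
    """Fraction of time the rank is busy refreshing instead of serving requests."""
    return t_rfc_ns / (t_refi_us * 1000.0)


t_refi = refresh_interval_us(RETENTION_WINDOW_MS, REFRESH_COMMANDS)
print(f"tREFI: {t_refi:.4f} us")                                              # 7.8125 us
print(f"refreshes per cell per second: {1000.0 / RETENTION_WINDOW_MS:.1f}")   # 15.6
print(f"refresh overhead: {refresh_overhead(T_RFC_NS, t_refi):.1%}")          # 4.5%
```

Under these assumptions the controller sends a refresh command about every 7.8 µs, each cell is refreshed roughly 16 times per second, and refreshing keeps the rank unavailable for about 4.5% of the time.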

This simple one-transistor-one-capacitor (1T1C) design has a major advantage: it allows extremely dense memory arrays. Each cell needs only one transistor and one capacitor, compared with the six transistors of a typical SRAM cell, so billions of cells fit on a single silicon chip. That makes large-capacity memory modules (e.g., 8GB, 16GB, 32GB) affordable at a relatively low cost per bit. This density and cost-effectiveness are the main reasons DRAM has become the universal choice for main system memory, despite needing constant refreshing and being slower than the SRAM caches inside CPUs.
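The density argument is easy to quantify. The sketch below assumes a hypothetical 16 GB module built from eight 16 Gb chips (a common arrangement, but not the only one) and counts only the per-bit storage transistors, ignoring sense amplifiers, decoders, and other support circuitry.

```python
GIB = 2**30  # DRAM capacities are powers of two: 1 Gb = 2**30 bits


def module_bits(capacity_gbytes: int) -> int:
    """Total bits in a module whose capacity is given in (binary) gigabytes."""
    return capacity_gbytes * GIB * 8


def chips_needed(capacity_gbytes: int, chip_gbits: int) -> int:
    """Number of chips of `chip_gbits` gigabits each needed to build the module."""
    return module_bits(capacity_gbytes) // (chip_gbits * GIB)


cells_per_chip = 16 * GIB  # one 1T1C cell per bit in an assumed 16 Gb chip
print(f"cells per 16 Gb chip: {cells_per_chip:,}")                        # 17,179,869,184
print(f"chips for a 16 GB module: {chips_needed(16, 16)}")                # 8
print(f"storage transistors, DRAM (1 per bit): {cells_per_chip:,}")       # 17,179,869,184
print(f"storage transistors, 6T SRAM: {6 * cells_per_chip:,}")            # 103,079,215,104
```

Storing the same 16 Gb in six-transistor SRAM cells would take six times as many transistors before counting the larger cell area, which is why SRAM is reserved for small, fast caches.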

How DRAM Works in Your Computer

The operation of DRAM involves a coordinated dance between the memory modules, the memory controller, and the CPU. When you open an application or file, the necessary data is moved from slow but persistent storage (such as an SSD or HDD) into the much faster DRAM. The CPU can then access that data with far lower latency: a DRAM access takes tens of nanoseconds, whereas an SSD read typically takes tens of microseconds or more.

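Accessing data in DRAM is address-based. Each chip is divided into banks, and each bank is a grid of rows and columns. To read data, the memory controller first sends an ACTIVATE command with a row address (historically signaled with the row address strobe, RAS). Activation copies the entire row into the bank’s sense amplifiers, often called the row buffer. Once the row is open, the controller sends a READ or WRITE command with a column address (historically signaled with the column address strobe, CAS) to select the specific bits needed. The delay between opening the row and issuing the column command (tRCD, the RAS-to-CAS delay) and the delay from the column command to the first data (the CAS latency, CL) are fundamental DRAM timing parameters. Before a different row in the same bank can be opened, the current row must be closed (precharged), which adds another delay (tRP). Together, these delays put DRAM access latency in the range of tens of nanoseconds.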
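The row and column access sequence can be modeled as a small state machine per bank. The following Python sketch is a deliberate simplification: it tracks only the open row and three timings (tRCD, CL, tRP), uses assumed values loosely based on a DDR4-3200 CL22 speed grade, and ignores constraints such as tRAS, refresh, and bus contention. The `Bank` class and its `read` method are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed DDR4-3200 clock: 3,200 MT/s / 2 transfers per clock = 1,600 MHz,
# so one clock cycle lasts 0.625 ns.
CLOCK_NS = 0.625


@dataclass
class Bank:
    """Toy model of one DRAM bank that leaves rows open between accesses.

    Timings are in clock cycles. The defaults are assumed values loosely based
    on a DDR4-3200 CL22 speed grade, not taken from any particular datasheet.
    """
    t_rcd: int = 22                  # ACTIVATE (row open) -> READ (RAS-to-CAS delay)
    cl: int = 22                     # READ -> first data (CAS latency)
    t_rp: int = 22                   # PRECHARGE (row close) -> next ACTIVATE
    open_row: Optional[int] = None   # row currently held in the row buffer

    def read(self, row: int) -> int:
        """Return the clock cycles until data arrives for a read to `row`."""
        if self.open_row == row:          # row-buffer hit: column access only
            return self.cl
        cycles = self.t_rcd + self.cl     # the row must be activated first
        if self.open_row is not None:     # row conflict: precharge the old row
            cycles += self.t_rp
        self.open_row = row
        return cycles


bank = Bank()
for row in (5, 5, 9):
    cycles = bank.read(row)
    print(f"read row {row}: {cycles} cycles = {cycles * CLOCK_NS:.2f} ns")
# read row 5: 44 cycles = 27.50 ns   (bank empty: activate + read)
# read row 5: 22 cycles = 13.75 ns   (row-buffer hit)
# read row 9: 66 cycles = 41.25 ns   (row conflict: precharge + activate + read)
```

The three reads cover the three cases a controller has to handle: an empty bank, a row-buffer hit, and a row conflict. Because a hit costs a third of a conflict in this model, real controllers try to group requests that target the same open row.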

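Modern DRAM modules connect to the memory controller (integrated into the CPU on most current processors) through one or more channels. Dual-channel or quad-channel configurations increase bandwidth by letting several independent data streams flow between the memory and the controller at once. Burst transfers further improve efficiency: after a single read or write command, the DRAM transfers a block of consecutive data (8 transfers per burst in DDR4, 16 in DDR5), which greatly benefits sequential operations. The performance of the whole subsystem is governed by three key specifications: capacity (the total data it can hold), data rate (in megatransfers per second; DDR4-3200 means 3,200 MT/s), and timings (latencies counted in clock cycles; CL16 means a CAS latency of 16 cycles). Because timings are counted in cycles, a faster kit with a larger CL can have the same real latency in nanoseconds, so these specifications must be weighed together to achieve optimal system performance.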
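These specifications combine through simple arithmetic. The helper functions below are illustrative: they assume a standard 64-bit data path per channel and ignore refresh, command overhead, and other real-world losses, so they give theoretical peak figures only.

```python
def peak_bandwidth_gbs(mts: int, bus_bits: int = 64, channels: int = 1) -> float:
    """Theoretical peak bandwidth in GB/s (10**9 bytes per second).

    mts: data rate in megatransfers per second (the number in "DDR4-3200").
    bus_bits: data bits per channel; 64 is assumed for a standard DIMM channel.
    """
    return mts * 1e6 * (bus_bits / 8) * channels / 1e9


def cas_latency_ns(cl_cycles: int, mts: int) -> float:
    """Convert a CAS latency in clock cycles to nanoseconds.

    DDR transfers twice per clock, so the clock runs at mts / 2 MHz and one
    cycle lasts 2000 / mts nanoseconds.
    """
    return cl_cycles * 2000 / mts


print(peak_bandwidth_gbs(3200))              # 25.6  (single channel)
print(peak_bandwidth_gbs(3200, channels=2))  # 51.2  (dual channel)
print(cas_latency_ns(16, 3200))              # 10.0  (DDR4-3200 CL16)
print(cas_latency_ns(18, 3600))              # 10.0  (DDR4-3600 CL18)
```

The last two lines show why frequency and CL should be read together: DDR4-3200 CL16 and DDR4-3600 CL18 both work out to a 10 ns CAS latency, while the 3600 kit offers more peak bandwidth.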

Evolution and Types of DRAM

DRAM technology has not stood still; it has evolved through several generations to meet escalating demands for speed and efficiency.

- SDRAM (Synchronous DRAM): This was a breakthrough that synchronized the memory’s operations with the system clock. This allowed the memory controller to know precisely when data would be ready, enabling more efficient command pipelining.

- DDR SDRAM (Double Data Rate SDRAM): This is the architecture that defines modern memory. DDR memory transfers data on both the rising and falling edges of the clock signal, effectively doubling the data rate without increasing the clock frequency. Successive generations have built upon this:

- DDR2: Lowered the supply voltage (1.8 V, down from 2.5 V for DDR), raised speeds, and doubled the prefetch to 4n.

- DDR3: Further reduced the voltage (1.5 V) and doubled the prefetch again, to 8n.

- DDR4: Lowered the voltage to 1.2 V, increased densities, and introduced bank groups, which let accesses to different groups overlap more efficiently.

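- DDR5: The current standard for new systems. It offers much higher bandwidth (standard data rates start at 4,800 MT/s, and the faster grades exceed twice DDR4’s top standard rate of 3,200 MT/s), much higher densities per module, and on-die ECC (Error Correction Code), which corrects bit errors inside each chip. On-die ECC improves reliability but does not replace the module-level ECC used in servers.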

- Specialized DRAM Types:

- LPDDR (Low Power DDR): Designed for mobile devices. LPDDR memories operate at very low voltages to conserve battery life while providing necessary bandwidth for smartphones and tablets.

- GDDR (Graphics DDR): Optimized for graphics cards. GDDR prioritizes bandwidth over low latency, combining wide per-chip interfaces (typically 32 bits) with very high per-pin data rates to feed textures and frame data to the GPU.

- HBM (High Bandwidth Memory): A 3D-stacked design. HBM stacks multiple DRAM dies vertically, links them with through-silicon vias (TSVs), and connects each stack to a GPU or CPU over a very wide interface (typically 1,024 bits per stack) routed through a silicon interposer. This delivers very high bandwidth in a small physical footprint, which is why HBM is used in high-end graphics cards and accelerators.
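The contrast between GDDR and HBM comes down to width versus speed, since peak bandwidth is the interface width multiplied by the per-pin data rate. The figures in this sketch are representative assumptions chosen for illustration, not the specifications of any particular product.

```python
def device_bandwidth_gbs(width_bits: int, gbps_per_pin: float) -> float:
    """Peak bandwidth of one memory device, module, or stack, in GB/s."""
    return width_bits * gbps_per_pin / 8


# Assumed, representative configurations: (interface width in bits, Gb/s per pin)
examples = {
    "DDR5-4800 DIMM (64-bit @ 4.8 Gb/s)": (64, 4.8),
    "GDDR6 chip (32-bit @ 16 Gb/s)": (32, 16.0),
    "HBM2 stack (1024-bit @ 2 Gb/s)": (1024, 2.0),
}

for name, (width, rate) in examples.items():
    print(f"{name}: {device_bandwidth_gbs(width, rate):.1f} GB/s")
# DDR5-4800 DIMM (64-bit @ 4.8 Gb/s): 38.4 GB/s
# GDDR6 chip (32-bit @ 16 Gb/s): 64.0 GB/s
# HBM2 stack (1024-bit @ 2 Gb/s): 256.0 GB/s
```

Under these assumptions a single HBM2 stack running at a modest 2 Gb/s per pin out-delivers a GDDR6 chip running eight times faster per pin, because its interface is 32 times wider. GPUs reach comparable totals with GDDR by placing many chips side by side: twelve such GDDR6 chips on a 384-bit bus would give 768 GB/s.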

For professionals and enthusiasts comparing these technologies and sourcing components, specialized platforms such as ICGOODFIND provide critical specifications, availability data, and market insights that aid in making informed decisions.

Conclusion

DRAM memory is far more than just “RAM” inside your device; it is the dynamic, high-speed staging ground that enables all modern computing. From its simple yet ingenious capacitor-based cell design to its complex synchronous communication with the CPU via advanced DDR interfaces, DRAM balances speed, density, and cost like no other technology. Its evolution from SDRAM to DDR5 and into specialized forms like LPDDR for mobility and HBM for extreme performance underscores its adaptability to diverse computing needs. As software demands continue to grow with more complex applications and larger datasets, advancements in DRAM technology—increasing bandwidth, reducing power consumption, and pushing architectural boundaries—will remain fundamental to driving progress across every sector of technology. Understanding what DRAM is and how it functions provides valuable insight into the very engine of digital performance.