DRAM Storage: The High-Speed Engine of Modern Computing

Introduction

In modern computing, where speed is paramount, DRAM (Dynamic Random-Access Memory) is the main working memory of nearly every system. Often simply called “memory,” DRAM is fundamentally different from the persistent storage provided by SSDs or HDDs. It serves as the computer’s short-term memory: the fast, volatile workspace where the processor actively reads and writes data for immediate tasks. From multitasking on a laptop to real-time data processing in large server farms, DRAM capacity and speed strongly influence system responsiveness and overall performance. This article covers the mechanics, applications, and evolving landscape of DRAM, and explains why it remains a key focus for anyone tuning a system for efficiency.

The Core Mechanics and Function of DRAM

To understand its pivotal role, one must first grasp how DRAM operates. Unlike static RAM (SRAM), which holds each bit in a multi-transistor latch, DRAM stores each bit as charge on a tiny capacitor gated by a single access transistor. The capacitor is either charged or discharged, representing a binary 1 or 0. This charge leaks away within milliseconds, so every cell must be read and rewritten before it decays, typically within a window of about 64 ms. The memory controller therefore issues refresh commands continuously, many thousands of times per second, and this refresh requirement is what makes the memory “dynamic.”
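To make the refresh cost concrete, the following sketch works through the arithmetic. The 64 ms window, the 8192 refresh commands per window, and the 350 ns busy time per command are assumed, typical-looking values chosen for illustration; real figures depend on the device and its datasheet.

```python
# Refresh arithmetic under assumed, typical values (not taken from a datasheet).
RETENTION_WINDOW_S = 64e-3          # assumed: each row refreshed within 64 ms
REFRESH_COMMANDS_PER_WINDOW = 8192  # assumed: commands needed to cover all rows
REFRESH_BUSY_TIME_S = 350e-9        # assumed: device busy time per refresh command


def refresh_interval_s(window_s: float, commands: int) -> float:
    """Average spacing between refresh commands."""
    return window_s / commands


def refresh_overhead(busy_s: float, interval_s: float) -> float:
    """Fraction of time the device is unavailable because it is refreshing."""
    return busy_s / interval_s


interval = refresh_interval_s(RETENTION_WINDOW_S, REFRESH_COMMANDS_PER_WINDOW)
commands_per_second = 1.0 / interval
overhead = refresh_overhead(REFRESH_BUSY_TIME_S, interval)

print(f"refresh interval: {interval * 1e6:.2f} us")
print(f"refresh commands per second: {commands_per_second:,.0f}")
print(f"time spent refreshing: {overhead:.1%}")
```

Under these assumptions the controller sends a refresh command roughly every 7.8 microseconds, and refresh occupies a few percent of the device's time.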

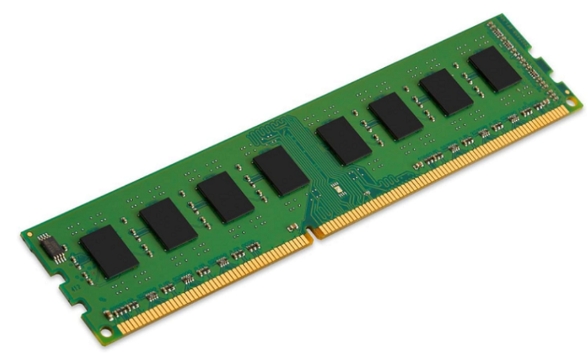

This one-transistor, one-capacitor cell makes DRAM far denser and cheaper per bit than SRAM, enabling large memory capacities at reasonable prices. Its main role is to serve as the system’s primary volatile memory. When you open an application or file, it is loaded from slower persistent storage (like an SSD) into the much faster DRAM. The CPU then accesses this data with latencies on the order of tens of nanoseconds, rather than the tens of microseconds or more typical of an SSD, which dramatically speeds up processing. Two key metrics govern this interaction: latency (the delay before a data transfer begins) and bandwidth (the rate of data transfer). Successive DDR (Double Data Rate) generations, such as DDR4 and DDR5, raise bandwidth and improve efficiency to feed increasingly powerful processors.
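The two metrics can be estimated from a module's headline numbers. The sketch below assumes a 64-bit data channel and uses DDR4-3200 at CL16 and DDR5-4800 at CL40 purely as example speed grades; the function names are illustrative, and the results are theoretical peaks, not measured throughput.

```python
def peak_bandwidth_gb_s(transfer_rate_mt_s: float,
                        bus_width_bits: int = 64,
                        channels: int = 1) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mt_s * 1e6 * bytes_per_transfer * channels / 1e9


def cas_latency_ns(cas_cycles: int, transfer_rate_mt_s: float) -> float:
    """CAS latency in nanoseconds.

    DDR moves two transfers per clock, so the clock runs at half the
    transfer rate.
    """
    clock_mhz = transfer_rate_mt_s / 2
    return cas_cycles / clock_mhz * 1e3


# Example speed grades; the CL values are assumed for illustration.
for name, rate, cl in [("DDR4-3200", 3200, 16), ("DDR5-4800", 4800, 40)]:
    bw = peak_bandwidth_gb_s(rate)
    lat = cas_latency_ns(cl, rate)
    print(f"{name}: {bw:.1f} GB/s per channel, CAS latency {lat:.1f} ns")
```

With these example timings, the newer module offers more bandwidth per channel while its CAS latency in nanoseconds is not lower, which is why the two metrics are worth checking separately.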

Key Applications and Performance Impact

The applications of DRAM storage are ubiquitous and scale from consumer devices to global infrastructure.

- Consumer Computing: In PCs, smartphones, and gaming consoles, DRAM capacity directly influences multitasking capability and application smoothness. More DRAM allows users to run numerous browser tabs, complex applications, and large games simultaneously without the system slowing down or resorting to sluggish “swap” space on storage drives.

- Data Centers and Servers: This is where DRAM’s role becomes mission-critical. Servers rely on vast amounts of DRAM to cache databases, accelerate virtualization, and handle in-memory computing. Technologies like in-memory databases (e.g., SAP HANA) store entire datasets in DRAM, eliminating storage bottlenecks and enabling real-time analytics on massive data streams. The performance of cloud services we use daily is heavily dependent on the density and speed of server-grade DRAM modules.

- Artificial Intelligence and High-Performance Computing (HPC): AI training and complex scientific simulations involve processing enormous matrices of data. DRAM bandwidth is a crucial bottleneck in these workloads. High-bandwidth memory (HBM), an advanced stacking of DRAM dies connected via a wide interface to a GPU or CPU, has emerged to address this. HBM provides exceptional bandwidth, making it essential for cutting-edge AI accelerators and supercomputers.
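The effect of a wide interface can be shown with simple arithmetic: peak bandwidth is the interface width multiplied by the per-pin data rate. The figures below (a 1024-bit stack at 2.0 Gb/s per pin, and a 64-bit DDR5 channel at 4.8 Gb/s per pin) are assumed example values, and the function name is illustrative.

```python
import math


def interface_bandwidth_gb_s(width_bits: int, pin_rate_gbit_s: float) -> float:
    """Theoretical peak bandwidth in GB/s for a given interface width."""
    return width_bits * pin_rate_gbit_s / 8


# Assumed example figures, not measurements of any specific product.
hbm_stack = interface_bandwidth_gb_s(width_bits=1024, pin_rate_gbit_s=2.0)
ddr5_channel = interface_bandwidth_gb_s(width_bits=64, pin_rate_gbit_s=4.8)

# How many conventional channels would be needed to match one stack?
channels_to_match = math.ceil(hbm_stack / ddr5_channel)

print(f"HBM stack: {hbm_stack:.1f} GB/s")
print(f"DDR5 channel: {ddr5_channel:.1f} GB/s")
print(f"DDR5 channels needed to match one stack: {channels_to_match}")
```

Even at a lower per-pin rate, the much wider interface gives the stacked design several times the bandwidth of a single conventional channel.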

In every case, insufficient or slow DRAM creates a bottleneck, causing the powerful CPU to idle while waiting for data—a scenario known as “starvation.” Therefore, optimizing DRAM configuration—capacity, generation, and channel architecture—is a primary concern for anyone building or managing high-performance systems.
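A rough way to see when memory becomes the bottleneck is a roofline-style estimate: attainable throughput is the smaller of the processor's peak compute rate and the memory bandwidth multiplied by the work done per byte moved. The processor peak (500 GFLOP/s) and the workload intensity (0.25 FLOP per byte) below are hypothetical numbers chosen only to illustrate the effect of adding memory channels.

```python
def attainable_gflops(peak_gflops: float,
                      bandwidth_gb_s: float,
                      flops_per_byte: float) -> float:
    """Roofline-style bound: limited by compute or by memory traffic."""
    return min(peak_gflops, bandwidth_gb_s * flops_per_byte)


def utilization(peak_gflops: float,
                bandwidth_gb_s: float,
                flops_per_byte: float) -> float:
    """Fraction of peak compute the workload can actually use."""
    return attainable_gflops(peak_gflops, bandwidth_gb_s, flops_per_byte) / peak_gflops


PEAK_GFLOPS = 500.0        # hypothetical processor peak
FLOPS_PER_BYTE = 0.25      # hypothetical streaming workload
CHANNEL_GB_S = 25.6        # assumed bandwidth of one memory channel

for channels in (1, 2, 4):
    bandwidth = CHANNEL_GB_S * channels
    share = utilization(PEAK_GFLOPS, bandwidth, FLOPS_PER_BYTE)
    print(f"{channels} channel(s): {share:.2%} of peak compute usable")
```

In this hypothetical case the processor spends almost all of its time waiting on memory, and each added channel raises the usable share proportionally until the compute limit is reached.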

The Evolving Landscape and Future Directions

The DRAM industry is in a state of constant evolution, driven by demand for higher performance, lower power consumption, and greater density. The transition from DDR4 to DDR5 represents a significant leap, offering not just higher data rates but also improved channel efficiency (each module is split into two independent subchannels), a lower operating voltage (1.1 V versus 1.2 V), and integrated features such as on-module power management and on-die error correction. DDR5 is becoming the backbone of current and next-generation computing platforms.

Simultaneously, specialized architectures are gaining traction:

- High-Bandwidth Memory (HBM) continues to evolve with taller stacks and faster interfaces for the most demanding computing tasks.

- LPDDR (Low-Power DDR), crucial for mobile and IoT devices, focuses on minimizing energy draw without sacrificing essential performance.

- The industry is also researching post-DDR5 technologies and novel materials to overcome physical scaling limits.

Furthermore, the concept of storage-class memory (SCM) blurs the traditional line between memory and storage. Technologies like Intel’s Optane (based on 3D XPoint) offered persistent memory with speeds approaching those of DRAM. Although Intel announced in 2022 that it was winding down its Optane business, the pursuit of SCM highlights the industry’s goal: a memory hierarchy that delivers both the capacity of storage and the speed of DRAM.

Conclusion

DRAM storage is far more than just a component specification on a device checklist; it is the conduit through which computational potential is realized. Its dynamic nature, which requires constant refresh yet delivers fast access at high density, makes it well suited to be the CPU’s immediate partner in processing. As we advance into an era defined by real-time analytics, pervasive AI, and immersive computing experiences, innovations in DRAM technology, from mainstream DDR5 to specialized HBM, will continue to shape what is computationally possible. For IT professionals, system builders, and technology enthusiasts alike, understanding DRAM’s role and keeping abreast of its evolution is essential for unlocking performance.