The Evolution and Future of DRAM Chip Technology: Powering the Digital World

Introduction

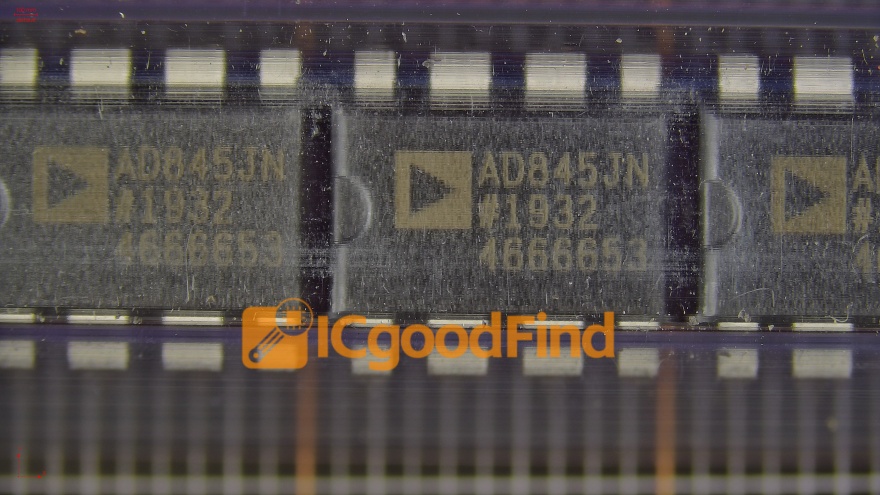

At the heart of every computing device, from smartphones to supercomputers, lies a critical component that serves as the system’s short-term memory: the DRAM chip. Dynamic Random-Access Memory (DRAM) is the volatile, high-speed memory that temporarily holds the data and instructions the processor is actively using, enabling the seamless multitasking and responsiveness we often take for granted. As data becomes the new currency of the digital age, demand for faster, denser, and more power-efficient DRAM continues to surge. This article explores the fundamental principles of DRAM, current market dynamics, and the innovations shaping its future. For professionals seeking in-depth component analysis and sourcing insights, platforms like ICGOODFIND provide invaluable resources for navigating this complex semiconductor landscape.

The Core Architecture and Working Principle of DRAM

At its most basic, a DRAM chip stores each bit of data as charge on a tiny capacitor within an integrated circuit. A charged capacitor represents one binary state and a discharged capacitor the other (conventionally 1 and 0). However, these capacitors leak charge over time, so each cell must be periodically refreshed (read and rewritten) to preserve its contents; this is why the memory is called “dynamic.” The need for refresh distinguishes DRAM from its counterpart, SRAM (Static RAM), which holds data without refreshing but uses more transistors per cell (typically six), making it less dense and more expensive per bit.
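
To make the refresh requirement concrete, the Python sketch below does two things. First, it computes the average interval between refresh commands (often called tREFI) from a 64 ms refresh window divided among 8192 REFRESH commands, figures typical of DDR4-class parts. Second, it models a single cell whose charge leaks away and checks whether a stored 1 still reads as 1 after one second. The exponential leakage model, the 200 ms time constant, and the half-supply sensing threshold are illustrative assumptions rather than device data, and the helper names are hypothetical.

```python
import math
from typing import Optional

# Typical DDR4-class refresh parameters: every row is refreshed within 64 ms,
# spread across 8192 REFRESH commands.
REFRESH_WINDOW_MS = 64.0
REFRESH_COMMANDS = 8192


def refresh_interval_us(window_ms: float, commands: int) -> float:
    """Average spacing between REFRESH commands (tREFI), in microseconds."""
    return window_ms * 1000.0 / commands


def leak(v: float, dt_ms: float, tau_ms: float) -> float:
    """Hypothetical leakage model: stored voltage decays exponentially."""
    return v * math.exp(-dt_ms / tau_ms)


def sense(v: float, threshold: float) -> int:
    """Resolve a cell voltage to a bit, as a sense amplifier would."""
    return 1 if v > threshold else 0


def stored_one_survives(duration_ms: int, refresh_every_ms: Optional[int],
                        tau_ms: float = 200.0, v_dd: float = 1.0) -> bool:
    """Write a 1, let it leak for duration_ms, and report whether it still reads as 1."""
    threshold = v_dd / 2
    v = v_dd  # write a logical 1
    for t in range(1, duration_ms + 1):
        v = leak(v, 1.0, tau_ms)  # one millisecond of leakage
        if refresh_every_ms and t % refresh_every_ms == 0:
            # Refresh = sense the cell, then write the sensed value back at full level.
            v = v_dd if sense(v, threshold) else 0.0
    return sense(v, threshold) == 1


print(f"tREFI = {refresh_interval_us(REFRESH_WINDOW_MS, REFRESH_COMMANDS):.2f} us")  # tREFI = 7.81 us
print(stored_one_survives(1000, refresh_every_ms=64))    # True
print(stored_one_survives(1000, refresh_every_ms=None))  # False
print(stored_one_survives(1000, refresh_every_ms=200))   # False: refreshed too late
```

With these assumed numbers, refreshing every 64 ms keeps the bit intact, while refreshing only every 200 ms lets the voltage fall below the threshold first, so the refresh faithfully rewrites the wrong value. Real cells vary widely in retention time, which is why the refresh window has to cover the weakest cells rather than the typical ones.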

The fundamental building block of a DRAM cell is the one-transistor-one-capacitor (1T1C) structure. The access transistor acts as a switch: when its wordline is asserted, it connects the capacitor to a bitline so the cell can be read or written. This simple structure allows DRAM to achieve very high density (a 16 Gb die, for example, holds roughly 16 billion storage cells), which translates to high capacity at a relatively low cost per bit. Decades of process scaling in the spirit of Moore’s Law have pushed this density to remarkable levels, with leading-edge DRAM now manufactured on so-called 10 nm-class processes.
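
Reading a 1T1C cell relies on charge sharing between the small cell capacitor and the much larger bitline, which is precharged to half the supply voltage. The sketch below computes the resulting bitline swing from charge conservation, dV = (V_cell - V_dd/2) * C_s / (C_s + C_b), and shows why the read is destructive. The 10 fF cell capacitance, 100 fF bitline capacitance, and 1.1 V supply are assumed round numbers for illustration only, and the function names are hypothetical.

```python
from typing import Tuple


def bitline_swing(v_cell: float, v_dd: float, c_cell_fF: float, c_bitline_fF: float) -> float:
    """Voltage change on a bitline precharged to v_dd/2 after charge sharing with the cell.

    Charge conservation gives V_final = (Cs*V_cell + Cb*V_dd/2) / (Cs + Cb),
    so the swing is (V_cell - V_dd/2) * Cs / (Cs + Cb).
    """
    v_pre = v_dd / 2
    v_final = (c_cell_fF * v_cell + c_bitline_fF * v_pre) / (c_cell_fF + c_bitline_fF)
    return v_final - v_pre


def read_cell(v_cell: float, v_dd: float = 1.1, c_cell_fF: float = 10.0,
              c_bitline_fF: float = 100.0) -> Tuple[int, float, float]:
    """Return (bit, bitline swing in volts, voltage written back into the cell)."""
    dv = bitline_swing(v_cell, v_dd, c_cell_fF, c_bitline_fF)
    bit = 1 if dv > 0 else 0
    # The read is destructive: the sense amplifier drives the bitline to a full rail,
    # which also restores the cell through the still-open access transistor.
    restored = v_dd if bit else 0.0
    return bit, dv, restored


bit, dv, restored = read_cell(1.1)
print(bit, round(dv * 1000, 1), restored)  # 1 50.0 1.1  (a 50 mV signal)
```

Because the swing scales with C_s / (C_s + C_b), every reduction in cell capacitance shrinks the signal the sense amplifier must detect, which is one reason capacitor scaling is such a persistent challenge.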

The operation of DRAM is orchestrated by a memory controller. When the processor needs data, it sends a request to the controller, which issues a precise sequence of commands to the DRAM: activate (open a row in a bank), read or write (access columns of that open row), and precharge (close the row so another can be opened), interleaved with periodic refresh commands. Performance is measured by latency (the delay before a transfer begins) and bandwidth (the rate of data transfer), both critical metrics for overall system performance. Double Data Rate (DDR) technology, which transfers data on both the rising and falling edges of the clock, has been pivotal: successive generations (DDR4, DDR5, and the upcoming DDR6) have roughly doubled peak transfer rates while improving power efficiency. Understanding this architecture is key to appreciating the engineering that makes our instant-access digital experiences possible.
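
The activate, read/write, and precharge sequence can be illustrated with a toy controller using an open-page policy, in which a row stays open after an access in case the next request hits it. The Python sketch below distinguishes a row-buffer hit (column read only), a miss on an idle bank (activate, then read), and a conflict (precharge, activate, then read). The timing names follow common DRAM terminology, but the value of 16 cycles for each is hypothetical, and a real controller also enforces many more constraints (row-cycle time, bank and rank timing, refresh) and reorders requests.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical timing parameters, in memory-clock cycles.
T_RCD = 16  # ACTIVATE to READ delay
T_CL = 16   # READ to first data (CAS latency)
T_RP = 16   # PRECHARGE to next ACTIVATE


@dataclass
class Bank:
    open_row: Optional[int] = None  # row currently latched in the row buffer


@dataclass
class Controller:
    banks: Dict[int, Bank] = field(default_factory=dict)
    log: List[str] = field(default_factory=list)

    def read(self, bank_id: int, row: int, col: int) -> int:
        """Issue the commands needed for one read and return its latency in cycles."""
        bank = self.banks.setdefault(bank_id, Bank())
        latency = 0
        if bank.open_row != row:
            if bank.open_row is not None:
                # Row conflict: close the currently open row first.
                self.log.append(f"PRE bank={bank_id}")
                latency += T_RP
            # Open the requested row into the bank's row buffer.
            self.log.append(f"ACT bank={bank_id} row={row}")
            latency += T_RCD
            bank.open_row = row
        # Column access within the open row (open-page policy: the row stays open).
        self.log.append(f"RD bank={bank_id} col={col}")
        latency += T_CL
        return latency


ctrl = Controller()
for bank_id, row, col in [(0, 5, 0), (0, 5, 8), (0, 9, 0)]:
    print(ctrl.read(bank_id, row, col), "cycles")  # 32, then 16, then 48
print("\n".join(ctrl.log))
```

With these assumed timings, the three requests cost 32, 16, and 48 cycles, which is why controllers try to group accesses to the same open row.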

Market Dynamics and Key Applications Driving Demand

The global DRAM market is a multi-billion-dollar arena dominated by a few major players, including Samsung, SK Hynix, and Micron Technology. It is characterized by cyclical supply-demand fluctuations that significantly impact pricing and availability. Currently, demand is being fueled by several transformative technological trends.

First, the explosion of artificial intelligence (AI) and machine learning (ML) is perhaps the most significant driver. Training large neural networks requires model parameters, activations, and training data to be streamed at very high rates to GPUs and specialized accelerators such as TPUs. This has led to a surge in demand for high-bandwidth memory (HBM), an advanced form of DRAM in which several dies are stacked vertically, connected by through-silicon vias (TSVs), and linked to the processor through a silicon interposer. Because each HBM stack exposes a very wide interface (1024 bits, versus 64 data bits for a standard DDR module), HBM delivers far higher bandwidth per package than traditional DDR modules, making it indispensable for AI servers and high-performance computing (HPC).
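
The bandwidth gap follows from simple arithmetic: theoretical peak bandwidth equals interface width times per-pin data rate, divided by 8 bits per byte. The sketch below compares a DDR5-4800 module (64 data bits at 4.8 Gb/s per pin) with a single HBM3 stack (1024 bits at 6.4 Gb/s per pin) and a hypothetical accelerator carrying six such stacks. The configurations are assumed examples, and these are peak figures only; sustained bandwidth is lower in practice.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, pin_rate_gbps: float, stacks: int = 1) -> float:
    """Theoretical peak bandwidth in GB/s: width x per-pin rate / 8 bits per byte."""
    return stacks * bus_width_bits * pin_rate_gbps / 8


# (interface width in bits, per-pin rate in Gb/s, number of devices); assumed examples.
configs = {
    "DDR5-4800 DIMM (64 data bits)": (64, 4.8, 1),
    "HBM3 stack (1024 bits at 6.4 Gb/s)": (1024, 6.4, 1),
    "Hypothetical accelerator with 6 HBM3 stacks": (1024, 6.4, 6),
}

for name, (width, rate, stacks) in configs.items():
    print(f"{name}: {peak_bandwidth_gb_s(width, rate, stacks):,.1f} GB/s")
```

It prints 38.4 GB/s for the DDR5 module, 819.2 GB/s for one HBM3 stack, and 4,915.2 GB/s for the six-stack configuration. Most of the difference comes from the width of the interface, which is practical only because the stack sits on an interposer right next to the processor.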

Second, the proliferation of 5G networks and edge computing is expanding DRAM requirements. 5G smartphones increasingly ship with higher-capacity LPDDR (Low Power Double Data Rate) DRAM to support richer features without sacrificing battery life. Meanwhile, edge devices, from IoT sensors to autonomous vehicles, require robust, reliable memory to process data locally with minimal latency.

Finally, the perennial growth of cloud data centers continues to underpin steady demand. Every server in a data center relies on substantial amounts of DRAM to handle virtualization, in-memory databases (like SAP HANA), and real-time analytics. The shift towards software-defined infrastructure and hyperscale computing ensures that data centers will remain a cornerstone of DRAM consumption. Navigating this volatile yet opportunity-rich market requires strategic sourcing and technical insight, areas where specialized platforms can be crucial.

Innovations and Future Directions in DRAM Technology

To overcome inherent limitations such as capacitor scaling, power consumption, and “the memory wall” (the widening gap between processor speed and memory bandwidth and latency), the industry is pursuing several innovative paths.
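
One common way to reason about the memory wall is the roofline model: attainable performance is the lower of the processor's peak compute rate and its memory bandwidth multiplied by the workload's arithmetic intensity (operations per byte fetched from DRAM). The sketch below applies it to a hypothetical processor with 100 TFLOP/s of peak compute and 2 TB/s of DRAM bandwidth. The 200 FLOP/byte intensity for a blocked matrix multiply is an assumed value, while the vector-add figure follows directly from two 4-byte loads and one 4-byte store per addition.

```python
def attainable_tflops(intensity: float, peak_tflops: float, bandwidth_tb_s: float) -> float:
    """Roofline bound: performance is capped by compute or by memory traffic.

    intensity is arithmetic intensity in FLOP per byte moved from DRAM;
    FLOP/byte x TB/s gives TFLOP/s.
    """
    return min(peak_tflops, intensity * bandwidth_tb_s)


# Hypothetical processor: 100 TFLOP/s peak compute, 2 TB/s DRAM bandwidth.
PEAK, BW = 100.0, 2.0
ridge = PEAK / BW  # intensity needed to become compute-bound (50 FLOP/byte here)

# fp32 vector add c[i] = a[i] + b[i]: 1 FLOP per 12 bytes (two 4-byte loads, one store).
vector_add = 1 / 12
# A large, well-blocked matrix multiply reuses data heavily (assumed 200 FLOP/byte).
blocked_gemm = 200.0

for name, ai in [("vector add", vector_add), ("blocked GEMM", blocked_gemm)]:
    perf = attainable_tflops(ai, PEAK, BW)
    bound = "memory-bound" if ai < ridge else "compute-bound"
    print(f"{name}: {perf:.2f} TFLOP/s ({bound})")
```

The vector add reaches only about 0.17 TFLOP/s, under 0.2% of peak, no matter how fast the cores are. Only more bandwidth or less data movement helps such workloads, which is the kind of pressure behind HBM, processing-in-memory, and closer memory-logic integration.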

One major frontier is next-generation interfaces: DDR5 and LPDDR5/5X, now in mass adoption, and the forthcoming DDR6. DDR5 launched at 4800 MT/s, already above DDR4’s top standard speed of 3200 MT/s, and is designed to reach roughly double DDR4’s bandwidth. It also splits each module into two independent 32-bit subchannels for better channel efficiency, lowers the supply voltage from 1.2 V to 1.1 V, and adds on-die error correction code (ECC) to compensate for the reduced reliability of ever-smaller cells. DDR6, still under development, is expected to roughly double data rates again while strengthening the reliability features that are critical for enterprise applications.
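
Error correction codes of the kind mentioned above are typically single-error-correcting (SEC) Hamming-style codes computed over much wider data words than this example. The classic Hamming(7,4) code below is a toy-scale illustration of the principle, not the code any DRAM standard specifies: three parity bits protect four data bits, and the syndrome (the XOR of the positions of all set bits) points directly at a single flipped bit. The function names are hypothetical.

```python
from typing import List, Tuple


def encode(data: List[int]) -> List[int]:
    """Encode 4 data bits into a 7-bit Hamming codeword (positions 1..7)."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    # Parity bits sit at the power-of-two positions 1, 2 and 4.
    return [p1, p2, d1, p4, d2, d3, d4]


def decode(word: List[int]) -> Tuple[List[int], int]:
    """Correct up to one flipped bit; return (data bits, error position or 0)."""
    w = list(word)
    syndrome = 0
    for pos in range(1, 8):
        if w[pos - 1]:
            syndrome ^= pos  # XOR of the positions of all set bits
    if syndrome:
        w[syndrome - 1] ^= 1  # a nonzero syndrome names the flipped position
    return [w[2], w[4], w[5], w[6]], syndrome


codeword = encode([1, 0, 1, 1])
corrupted = list(codeword)
corrupted[4] ^= 1  # simulate a single-bit error at position 5
print(decode(corrupted))  # ([1, 0, 1, 1], 5)
```

A single flipped bit is located and corrected. A second error in the same word would be miscorrected, which is why systems that must also detect double-bit errors add an overall parity bit (SECDED) or use stronger codes.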

Another promising area is processing-in-memory (PIM), also called in-memory computing. This paradigm seeks to ease the von Neumann bottleneck by embedding simple processing units directly within or beside the DRAM arrays. Instead of constantly moving data between separate memory and processor units, simple operations can be performed where the data resides, which can substantially reduce energy consumption and latency for suitable workloads. Major manufacturers have already demonstrated PIM-enabled DRAM aimed at AI applications, such as Samsung’s HBM-PIM and SK hynix’s GDDR6-based AiM.
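
The data-movement argument behind PIM can be illustrated by counting bytes. The sketch below sums a vector spread across 16 DRAM banks in two ways: conventionally, where every 4-byte element crosses the memory bus to the processor, and PIM-style, where a hypothetical adder in each bank reduces its own slice and only one 8-byte partial sum per bank is transferred. The bank count, element size, and per-bank adder are assumptions for illustration; the sketch does not model energy, timing, or any vendor's actual PIM design.

```python
from typing import List, Tuple

ELEM_BYTES = 4       # assumed 32-bit elements
PARTIAL_BYTES = 8    # assumed 64-bit partial sum returned by each bank


def host_sum(banks: List[List[int]]) -> Tuple[int, int]:
    """Conventional path: stream every element to the processor and add there."""
    total = 0
    bytes_moved = 0
    for bank in banks:
        for value in bank:
            total += value
            bytes_moved += ELEM_BYTES
    return total, bytes_moved


def pim_sum(banks: List[List[int]]) -> Tuple[int, int]:
    """PIM-style path: each bank reduces its own slice; only partial sums cross the bus."""
    partials = [sum(bank) for bank in banks]  # stands in for a hypothetical per-bank adder
    return sum(partials), PARTIAL_BYTES * len(partials)


# 16 banks, each holding 1024 consecutive integers.
banks = [list(range(b * 1024, (b + 1) * 1024)) for b in range(16)]
print(host_sum(banks))  # (134209536, 65536)
print(pim_sum(banks))   # (134209536, 128)
```

Both paths produce the same sum, but the PIM-style path moves 128 bytes instead of 65,536, a 512-fold reduction in bus traffic for this simple reduction workload.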

Materials and structural innovations are equally important. Research into capacitor dielectrics with even higher permittivity (high-k materials) and novel cell architectures, such as vertical channel transistors, aims to sustain scaling beyond current physical limits. Integrating DRAM with logic in advanced 2.5D and 3D packaging, including chiplet-based designs, is also gaining traction. This heterogeneous integration enables custom systems in which optimized memory sits extremely close to the processor cores, maximizing bandwidth and minimizing the cost of moving data.

For engineers and procurement specialists, staying abreast of these rapid changes is essential. Leveraging comprehensive resources that offer detailed specifications, reliability data, and supply chain intelligence can provide a competitive edge in implementing cutting-edge solutions.

Conclusion

From enabling the smooth operation of our everyday devices to powering the world’s most advanced AI research, DRAM chip technology remains an indispensable pillar of modern electronics. Its evolution from simple memory arrays to sophisticated 3D-stacked, high-bandwidth solutions mirrors the exponential growth of our digital capabilities. While challenges in scaling and efficiency persist, continuous innovation in interfaces, architectures like PIM, and advanced packaging promises to propel DRAM into a new era of performance. As we move towards an increasingly data-centric future characterized by ubiquitous AI and real-time processing, the role of advanced memory solutions will only become more critical. For those involved in design or sourcing within this vital industry, accessing precise technical information and market analytics through dedicated platforms such as ICGOODFIND is an invaluable strategy for success.