Understanding DRAM Storage: The High-Speed Engine of Modern Computing

Introduction

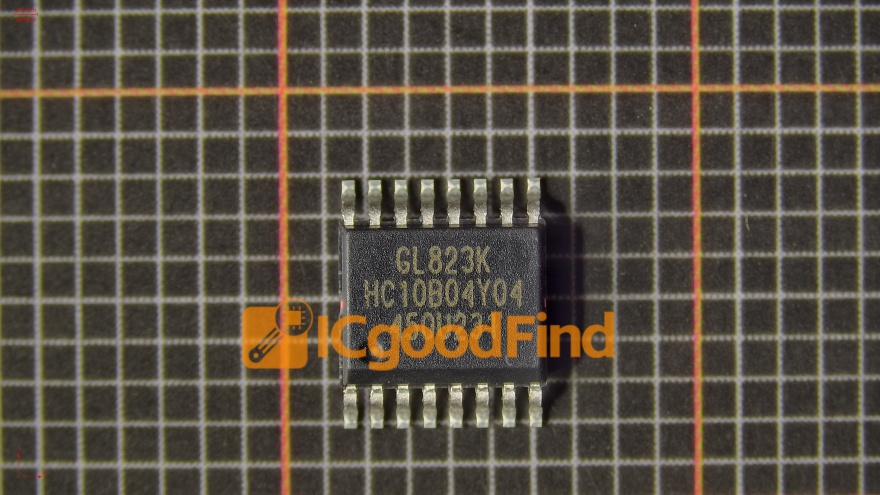

In the intricate architecture of modern computing, storage hierarchy is paramount. While we often discuss hard drives and solid-state drives for long-term data retention, there exists a critical, high-speed component that serves as the active workspace for your computer’s processor: DRAM storage. DRAM, or Dynamic Random-Access Memory, is the volatile, lightning-fast memory that temporarily holds the data your system is actively using. It is the essential bridge between the sluggish speed of permanent storage and the blistering pace of the CPU. Without sufficient and efficient DRAM, even the most powerful processor grinds to a halt, waiting for data to process. This article delves into the mechanics, evolution, and critical importance of DRAM storage in today’s technology landscape, highlighting why it remains a cornerstone of performance. For professionals seeking in-depth component analysis and sourcing, platforms like ICGOODFIND provide valuable insights into DRAM specifications and market availability.

The Core Mechanics and Function of DRAM

DRAM’s primary function is to provide fast, temporary storage for data that the central processing unit (CPU) needs immediate access to. Unlike an SSD or HDD, which retains data when powered off, DRAM is volatile: it loses its stored information shortly after power is cut. This characteristic stems from its fundamental design. Each bit of data in a DRAM chip is stored in a tiny capacitor, paired with an access transistor, within an integrated circuit. A charged capacitor represents a ‘1’, and a discharged capacitor represents a ‘0’. However, these capacitors leak charge over time. Therefore, to prevent data loss, the memory controller must periodically refresh each cell by reading it and rewriting its value, hence the term “Dynamic” RAM. Every row is typically refreshed within a window of tens of milliseconds (64 ms is a common figure), which means refresh commands are issued many thousands of times per second across the chip. This requirement is what differentiates DRAM from SRAM (Static RAM), which does not require refresh but is more expensive and less dense.
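The leak-and-refresh cycle can be illustrated with a toy model. This is a minimal sketch, not a circuit simulation: the exponential leak, the 200 ms time constant, and the 0.5 sense threshold are all assumptions chosen for illustration, and the function names are hypothetical.

```python
import math
from typing import Optional

# Illustrative constants, not taken from any datasheet.
LEAK_TIME_CONSTANT_MS = 200.0   # assumed time constant of the charge leak
SENSE_THRESHOLD = 0.5           # assumed fraction of full charge still read as '1'


def charge_after(elapsed_ms: float, initial: float = 1.0) -> float:
    """Charge remaining on the capacitor after leaking for elapsed_ms."""
    return initial * math.exp(-elapsed_ms / LEAK_TIME_CONSTANT_MS)


def read_bit(charge: float) -> int:
    """Model the sense amplifier: read '1' only if enough charge remains."""
    return 1 if charge >= SENSE_THRESHOLD else 0


def survives(total_ms: float, refresh_interval_ms: Optional[float]) -> bool:
    """Return True if a stored '1' is still readable after total_ms.

    With refresh_interval_ms set, the cell is read and rewritten to full
    charge at every interval; with None, it is simply left to leak.
    """
    if refresh_interval_ms is None:
        return read_bit(charge_after(total_ms)) == 1
    charge = 1.0
    elapsed = 0.0
    while elapsed < total_ms:
        step = min(refresh_interval_ms, total_ms - elapsed)
        charge = charge_after(step, charge)
        elapsed += step
        if read_bit(charge) == 0:
            return False   # the bit decayed before it could be refreshed
        charge = 1.0       # refresh: rewrite the sensed value at full charge
    return True


if __name__ == "__main__":
    print("no refresh, 1 s:", survives(1000.0, None))
    print("64 ms refresh, 1 s:", survives(1000.0, 64.0))
    print("300 ms refresh, 1 s:", survives(1000.0, 300.0))
```

Under these assumed constants, the bit survives only when the refresh interval is short relative to the leak; a refresh that arrives too late finds the charge already below the threshold.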

The speed advantage of DRAM over permanent storage is staggering. Even a top-tier NVMe SSD typically responds to a read in tens of microseconds, whereas DRAM access latencies are measured in tens of nanoseconds, roughly a thousand times shorter. DRAM also connects to the CPU over a dedicated, high-bandwidth memory bus. When you open an application or file, it is loaded from slower storage into DRAM. The CPU then pulls instructions and data from DRAM at speeds it can work with, dramatically reducing idle time. The capacity and speed of your system’s DRAM directly influence multitasking capability, application responsiveness, and overall system fluidity. Insufficient DRAM forces the operating system to use a portion of the storage drive as “virtual memory” (swap space); when the system spends more time moving pages between DRAM and storage than doing useful work, the resulting severe performance degradation is known as thrashing.
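A rough way to see why spilling into storage hurts so much is to compute the average access time as a weighted mix of the two latencies. The sketch below assumes round, illustrative figures (100 ns for a DRAM access, 100 µs for an access served from the drive); the function name and defaults are hypothetical.

```python
def effective_access_ns(fault_rate: float,
                        dram_ns: float = 100.0,
                        storage_ns: float = 100_000.0) -> float:
    """Average access time when a fraction of accesses miss DRAM.

    fault_rate is the share of accesses (0.0 to 1.0) that must be served
    from the storage drive instead of DRAM. Both latency defaults are
    assumed, illustrative values, not measurements.
    """
    if not 0.0 <= fault_rate <= 1.0:
        raise ValueError("fault_rate must be between 0.0 and 1.0")
    return (1.0 - fault_rate) * dram_ns + fault_rate * storage_ns


if __name__ == "__main__":
    for rate in (0.0, 0.001, 0.01, 0.1):
        print(f"fault rate {rate:>5}: {effective_access_ns(rate):10.1f} ns")
```

With these assumed numbers, sending just 1% of accesses to the drive raises the average from 100 ns to about 1,099 ns, roughly an elevenfold slowdown.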

Evolution and Generations: From SDRAM to DDR5

DRAM technology has not remained static; it has evolved through multiple generations to keep pace with increasing CPU performance. The modern era began with Synchronous DRAM (SDRAM), which synchronized itself with the computer’s system bus. This was revolutionized by the introduction of Double Data Rate (DDR) SDRAM. DDR memory transfers data on both the rising and falling edges of the clock signal, effectively doubling the data rate without increasing the clock frequency.
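The doubling can be written as simple arithmetic. In this small sketch, the function name is hypothetical and the clock values in the usage lines are only examples.

```python
def transfer_rate_mt_s(clock_mhz: float, double_data_rate: bool = True) -> float:
    """Transfers per second (in millions) for a given I/O clock.

    Single data rate moves one transfer per clock cycle; double data rate
    moves one on the rising edge and one on the falling edge.
    """
    transfers_per_cycle = 2 if double_data_rate else 1
    return clock_mhz * transfers_per_cycle


if __name__ == "__main__":
    print(transfer_rate_mt_s(133, double_data_rate=False))  # single data rate
    print(transfer_rate_mt_s(1600))                         # double data rate
```

This is why a module whose I/O clock runs at 1600 MHz is marketed with a 3200 MT/s rating.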

Each subsequent generation (DDR2, DDR3, DDR4, and the current mainstream standard, DDR5) has brought significant improvements. These include higher data transfer rates (measured in MT/s), lower operating voltages (improving power efficiency), and greater bandwidth. DDR5, for instance, splits each module into two independent 32-bit sub-channels (compared to DDR4’s single 64-bit channel), allowing for more efficient data handling and higher speeds while also significantly increasing density per module. Furthermore, error correction, once reserved for servers, is reaching consumer-grade DRAM: DDR5 chips include on-die Error-Correcting Code (ECC), which corrects bit errors inside the chip, although it does not protect data in transit the way server-class ECC modules do. This relentless evolution addresses the growing demands of data-intensive applications, from high-resolution video editing and scientific computing to advanced gaming and AI processing.
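The MT/s figure translates into theoretical peak bandwidth by multiplying it by the number of bytes moved per transfer. The sketch below uses a hypothetical helper and two example speed grades (DDR4-3200 and DDR5-4800); real-world throughput is lower than these peaks.

```python
def peak_bandwidth_gb_s(mt_per_s: int, bus_width_bits: int, channels: int = 1) -> float:
    """Theoretical peak bandwidth in GB/s (decimal gigabytes).

    mt_per_s       -- transfer rate in mega-transfers per second
    bus_width_bits -- data width of one channel or sub-channel
    channels       -- number of independent channels of that width
    """
    bytes_per_transfer = bus_width_bits / 8
    return mt_per_s * 1e6 * bytes_per_transfer * channels / 1e9


if __name__ == "__main__":
    # One DDR4 module: a single 64-bit channel.
    print("DDR4-3200:", peak_bandwidth_gb_s(3200, 64), "GB/s")
    # One DDR5 module: two 32-bit sub-channels.
    print("DDR5-4800:", peak_bandwidth_gb_s(4800, 32, channels=2), "GB/s")
```

The total data width per module is the same in both cases (64 bits), so the higher DDR5 peak here comes from the transfer rate; the two sub-channels help by letting the controller service two independent requests at once.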

Critical Applications and Future Trajectory

The role of DRAM extends far beyond personal computers. It is the lifeblood of servers powering the cloud infrastructure and data centers that form the backbone of the internet. In these environments, vast amounts of DRAM are deployed to handle thousands of simultaneous virtual machines, database queries, and in-memory computing tasks. In-memory databases, for example, store entire datasets in DRAM to eliminate storage access latency entirely, enabling real-time analytics for financial trading and e-commerce.
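The idea of keeping an entire dataset in memory can be tried on a small scale with Python's standard `sqlite3` module, which creates a database that lives only in RAM when given the special `":memory:"` path. This is a toy sketch, not how production in-memory databases are built; the table, the sample rows, and the function names are made up for illustration.

```python
import sqlite3


def build_in_memory_orders() -> sqlite3.Connection:
    """Create a small orders table that exists only in RAM."""
    conn = sqlite3.connect(":memory:")  # no file on disk is created
    conn.execute(
        "CREATE TABLE orders (symbol TEXT, quantity INTEGER, price REAL)"
    )
    # Hypothetical sample rows.
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [("ABC", 100, 10.5), ("XYZ", 20, 99.0), ("ABC", 50, 10.7)],
    )
    conn.commit()
    return conn


def total_quantity(conn: sqlite3.Connection, symbol: str) -> int:
    """Sum the quantity for one symbol; the query never touches storage."""
    row = conn.execute(
        "SELECT COALESCE(SUM(quantity), 0) FROM orders WHERE symbol = ?",
        (symbol,),
    ).fetchone()
    return row[0]


if __name__ == "__main__":
    connection = build_in_memory_orders()
    print(total_quantity(connection, "ABC"))
    connection.close()  # closing the connection discards the data
```

The trade-off is the volatility described earlier: once the connection closes or power is lost, the data is gone unless it has been persisted elsewhere.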

The rise of Artificial Intelligence (AI) and Machine Learning (ML) has also created a new frontier for DRAM demand. Training complex neural networks requires massive datasets to be readily accessible to GPUs and specialized AI processors (like TPUs). Here, high-bandwidth memory (HBM), an advanced 3D-stacked form of DRAM integrated directly onto the processor package, has emerged. HBM offers extraordinary bandwidth by stacking memory dies vertically, linking them with through-silicon vias (TSVs), and connecting the stack to the processor over a very wide interface, typically through a silicon interposer. This makes it ideal for AI accelerators and high-end graphics cards.
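HBM's bandwidth comes mainly from the width of its interface rather than from an extreme per-pin speed. The arithmetic can be sketched as follows; the 1024-bit width and 2 Gbit/s per pin are assumed, illustrative values in the range commonly cited for HBM2, and the function name is hypothetical.

```python
def stack_bandwidth_gb_s(interface_width_bits: int, pin_rate_gbit_s: float) -> float:
    """Peak bandwidth of one memory interface in GB/s.

    interface_width_bits -- number of data pins operating in parallel
    pin_rate_gbit_s      -- data rate of each pin in Gbit/s
    """
    return interface_width_bits * pin_rate_gbit_s / 8  # 8 bits per byte


if __name__ == "__main__":
    # Assumed HBM-style stack: very wide, moderate per-pin rate.
    print("wide stack:", stack_bandwidth_gb_s(1024, 2.0), "GB/s")
    # Assumed conventional 64-bit channel with a faster per-pin rate.
    print("64-bit channel:", stack_bandwidth_gb_s(64, 3.2), "GB/s")
```

Under these assumptions, a single stack delivers ten times the peak bandwidth of the 64-bit channel even though each pin runs slower, and accelerators usually carry several stacks.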

Looking ahead, the trajectory points toward continued innovation in architecture and materials science. Technologies like GDDR6X for graphics memory push speed limits further, while research into non-volatile memory that could blur the line between storage and RAM continues. However, for the foreseeable future, DRAM’s unique combination of speed, density, and cost-effectiveness ensures its position as an indispensable component. Navigating this complex ecosystem of standards and suppliers can be challenging; resources such as ICGOODFIND offer crucial market intelligence for engineers and procurement specialists to identify optimal DRAM solutions for their specific project requirements.

Conclusion

DRAM storage is far more than just “memory”; it is the active nervous system of every modern computing device. Its dynamic, high-speed nature enables the real-time processing that defines our digital experience. From enabling seamless multitasking on a smartphone to powering continent-spanning cloud services and accelerating groundbreaking AI research, DRAM’s importance cannot be overstated. As we advance into an era defined by ever-greater data generation and consumption—through IoT, 5G/6G networks, and ubiquitous AI—the performance characteristics of DRAM will continue to be a critical bottleneck and a focal point for innovation. Understanding its principles, evolution, and applications is key for anyone involved in technology development or deployment. Ensuring you have adequate quantity and quality of this essential component remains one of the most effective ways to guarantee system performance across all computing domains.