The Full Name of DRAM: Understanding Dynamic Random-Access Memory

Introduction

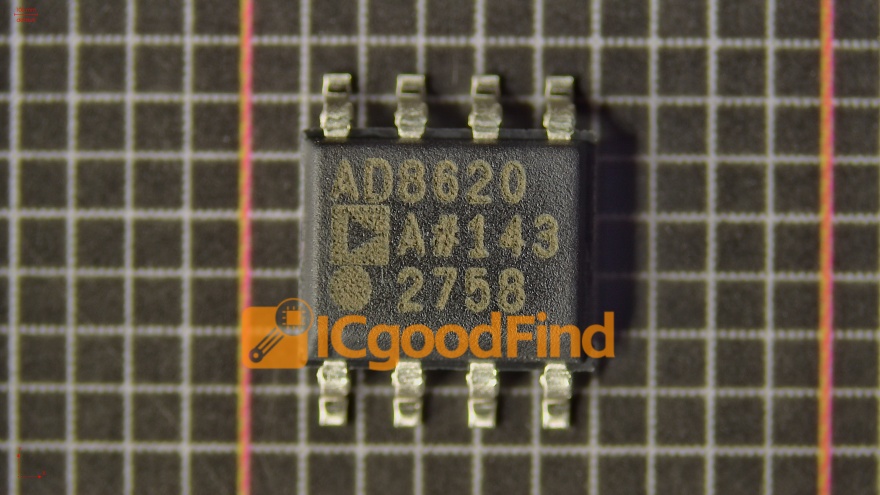

In the realm of computing and digital electronics, acronyms are ubiquitous. Among the most critical is DRAM, a term frequently encountered when discussing computer memory, performance, and hardware. But what does DRAM stand for? Its full name—Dynamic Random-Access Memory—unlocks a deeper understanding of its function, significance, and the technology that powers everything from smartphones to supercomputers. This article delves into the intricacies of DRAM, exploring its operational principles, evolution, and pivotal role in modern technology. For professionals and enthusiasts seeking detailed component insights and sourcing, platforms like ICGOODFIND provide invaluable resources for discovering and comparing memory ICs and related technologies.

The Core Principle: What is Dynamic Random-Access Memory?

At its heart, Dynamic Random-Access Memory (DRAM) is a type of semiconductor memory used for data storage in computing devices. The term “Random-Access” signifies that any byte of memory can be accessed without touching the preceding bytes, enabling fast and efficient data retrieval. The “Dynamic” component is what truly defines its character and differentiates it from its counterpart, Static RAM (SRAM).

DRAM stores each bit of data in a separate capacitor within an integrated circuit. This capacitor can be either charged or discharged, representing the binary states of 1 and 0, respectively. However, this charge is not stable; it leaks away over time through the capacitor and its access transistor. To prevent data loss, each memory cell must be periodically refreshed by reading and then rewriting the data. This refresh operation, which has to reach every row within a retention window typically measured in tens of milliseconds (64 ms is a commonly specified figure), is the source of the “Dynamic” label. While this refresh requirement adds complexity and slight latency, it allows DRAM cells to be constructed using just one transistor and one capacitor (1T1C design). This simplicity enables very high-density memory arrays, making DRAM remarkably cost-effective for providing large amounts of main system memory (RAM).
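The leak-and-refresh cycle described above can be sketched with a toy model. This is only an illustration, not a device-accurate simulation: the exponential leakage curve, the 200 ms time constant, and the 50% sense threshold are assumptions chosen for readability, while the 64 ms window and 8192 refresh commands per window are commonly cited figures used here as example inputs.

```python
import math

# Illustrative assumptions, not taken from any datasheet.
LEAK_TAU_MS = 200.0        # assumed leakage time constant of the cell
SENSE_THRESHOLD = 0.5      # fraction of full charge still read as a 1
REFRESH_WINDOW_MS = 64.0   # commonly cited retention/refresh window


def charge_after(elapsed_ms, tau_ms=LEAK_TAU_MS):
    """Fraction of the original charge left after elapsed_ms of leakage."""
    return math.exp(-elapsed_ms / tau_ms)


def read_bit(elapsed_ms):
    """Sense the cell: 1 if enough charge remains, otherwise 0."""
    return 1 if charge_after(elapsed_ms) > SENSE_THRESHOLD else 0


def stored_one_after(total_ms, refresh_interval_ms=None):
    """Write a 1 at t=0 and return what is read back after total_ms.

    With a refresh interval, the cell is read and rewritten to full
    charge every interval, so leakage only accumulates between refreshes.
    """
    if refresh_interval_ms is None:
        return read_bit(total_ms)
    bit, elapsed = 1, 0.0
    while elapsed < total_ms and bit == 1:
        step = min(refresh_interval_ms, total_ms - elapsed)
        bit = read_bit(step)   # refresh rewrites whatever was sensed
        elapsed += step
    return bit


def refresh_command_interval_us(window_ms=REFRESH_WINDOW_MS, commands=8192):
    """Average spacing between refresh commands within one window."""
    return window_ms * 1000.0 / commands


print(stored_one_after(1000.0))                     # no refresh: the 1 is lost
print(stored_one_after(1000.0, REFRESH_WINDOW_MS))  # refreshed: the 1 survives
print(refresh_command_interval_us())                # 7.8125
```

Under these assumed numbers an unrefreshed cell drops below the sense threshold after roughly 139 ms, so refreshing every 64 ms keeps the stored 1 intact indefinitely.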

The constant cycle of charging, discharging, and refreshing is the fundamental trade-off at the core of DRAM technology. It sacrifices a measure of speed and power efficiency for superior density and lower cost per bit compared to SRAM, which uses multiple transistors per cell but does not require refreshing. Consequently, DRAM serves as the primary working memory (or main memory) in computers, where it temporarily holds the operating system, application programs, and active data for quick access by the Central Processing Unit (CPU). Its high density makes it ideal for this volatile but capacious role, forming a crucial bridge between the ultra-fast CPU cache (built from SRAM) and the slow but permanent storage of hard drives or SSDs.

Evolution and Architectural Advancements

Since its invention in the late 1960s by Robert Dennard at IBM, DRAM has undergone a relentless evolution driven by the demand for higher speed, greater bandwidth, lower power consumption, and increased capacity. This journey has been marked by significant architectural innovations.

The first major evolution was the transition from asynchronous DRAM to Synchronous DRAM (SDRAM). SDRAM synchronizes all operations with the system clock, allowing for more efficient coordination with the CPU’s bus. This synchronization paved the way for pipelining commands, dramatically improving performance. Development then accelerated with Double Data Rate SDRAM (DDR SDRAM), which became a revolutionary standard. Unlike single data rate SDRAM, which transfers data once per clock cycle, DDR memory transfers data on both the rising and falling edges of the clock signal, effectively doubling the data rate without increasing the clock frequency. Successive generations (DDR2, DDR3, DDR4, and now DDR5) have brought higher data rates (measured in MT/s), lower operating voltages (enhancing power efficiency), and, in most generations, a larger prefetch buffer.
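The effect of transferring on both clock edges can be checked with simple arithmetic. The sketch below is a minimal illustration with hypothetical function names; it assumes a 64-bit module data bus and computes theoretical peak figures only, which real systems do not sustain.

```python
def transfers_per_second_mt(io_clock_mhz, double_data_rate=True):
    """Data rate in MT/s: two transfers per clock for DDR, one for SDR."""
    return io_clock_mhz * (2 if double_data_rate else 1)


def peak_bandwidth_mb_s(data_rate_mt_s, bus_width_bits=64):
    """Theoretical peak bandwidth in MB/s for a given bus width."""
    return data_rate_mt_s * bus_width_bits // 8


# Single data rate SDRAM on a 133 MHz clock (assumed example).
sdr_rate = transfers_per_second_mt(133, double_data_rate=False)
print(sdr_rate, peak_bandwidth_mb_s(sdr_rate))   # 133 1064

# DDR4-3200: a 1600 MHz I/O clock yields 3200 MT/s.
ddr_rate = transfers_per_second_mt(1600)
print(ddr_rate, peak_bandwidth_mb_s(ddr_rate))   # 3200 25600
```

The second result matches the PC4-25600 module label often used for DDR4-3200, which is derived from the same calculation.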

Beyond these generational shifts, specialized architectures have emerged to meet specific market needs:

* Graphics DDR (GDDR): Optimized for high bandwidth in graphics cards and gaming consoles, GDDR features wider buses and higher clock speeds to handle massive texture and frame buffer data.
* Low Power DDR (LPDDR): Designed for mobile and portable devices, LPDDR prioritizes minimal power consumption through aggressive voltage scaling and advanced power-state management.
* High Bandwidth Memory (HBM): A groundbreaking 3D-stacked architecture where DRAM dies are stacked vertically and connected via through-silicon vias (TSVs). HBM offers an immense bandwidth advantage by providing an extremely wide communication interface with a very short physical path to the processor (like a GPU or AI accelerator), making it essential for high-performance computing and AI workloads.

These advancements highlight how the core principle of dynamic capacitor-based storage has been ingeniously packaged and interfaced to keep pace with exponential growth in computing demands.

The Critical Role in Modern Computing Ecosystems

The importance of DRAM extends far beyond being a simple component; it is a fundamental enabler of modern computing performance. Its role can be understood through several key perspectives.

First, DRAM is the primary determinant of system multitasking capability and responsiveness. The amount of installed DRAM dictates how many applications can run simultaneously without forcing the system to rely on slow disk-based “virtual memory.” When DRAM capacity is insufficient, constant data swapping occurs, leading to severe performance degradation known as “thrashing.”
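Thrashing can be demonstrated with a small page-replacement simulation. The sketch below assumes a least-recently-used (LRU) eviction policy and a program that loops over its working set; both are illustrative assumptions, since real operating systems use more elaborate policies and access patterns vary.

```python
from collections import OrderedDict


def count_page_faults(accesses, frames):
    """Count page faults for an access sequence under LRU replacement."""
    resident = OrderedDict()   # pages currently in DRAM, oldest first
    faults = 0
    for page in accesses:
        if page in resident:
            resident.move_to_end(page)        # mark as most recently used
        else:
            faults += 1                       # page must come from disk
            if len(resident) >= frames:
                resident.popitem(last=False)  # evict least recently used
            resident[page] = True
    return faults


# A program that loops over a working set of 5 pages, 20 times.
accesses = list(range(5)) * 20

print(count_page_faults(accesses, frames=5))  # 5
print(count_page_faults(accesses, frames=4))  # 100
```

With enough frames for the whole working set, only the first touch of each page faults. With one frame too few, every single access faults, which is the swapping pattern the paragraph above calls thrashing.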

Second, DRAM bandwidth is a crucial bottleneck for overall system performance. Modern CPUs with multiple cores require a massive flow of data to stay utilized. If the DRAM subsystem cannot feed data quickly enough, cores sit idle, wasting computational potential. This is why advancements like DDR5 and HBM are so critical—they directly alleviate this bottleneck for data-intensive tasks like scientific simulation, video editing, and machine learning training.
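One common way to reason about this bottleneck is a roofline-style estimate, which is introduced here as an illustration and is not part of the original discussion. It caps achievable throughput at the lower of the processor's peak compute rate and what memory bandwidth can feed. All numbers below are hypothetical.

```python
def attainable_gflops(peak_gflops, bandwidth_gb_s, flops_per_byte):
    """Roofline-style bound: limited by compute or by memory traffic."""
    return min(peak_gflops, bandwidth_gb_s * flops_per_byte)


PEAK_GFLOPS = 1000.0   # hypothetical multi-core CPU peak

# A streaming workload doing 0.25 floating-point operations per byte moved.
print(attainable_gflops(PEAK_GFLOPS, 50.0, 0.25))    # 12.5
print(attainable_gflops(PEAK_GFLOPS, 100.0, 0.25))   # 25.0

# A compute-heavy workload doing 40 operations per byte moved.
print(attainable_gflops(PEAK_GFLOPS, 50.0, 40.0))    # 1000.0
```

In the first two cases the cores are mostly idle and doubling memory bandwidth doubles throughput; in the third the workload is compute-bound and faster DRAM would not help.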

Finally, the DRAM market itself is a strategic global industry. It is dominated by a few major manufacturers and is characterized by cyclical supply-and-demand dynamics that affect pricing across entire electronics sectors. Innovations in DRAM technology directly influence the capabilities of downstream products, from servers in cloud data centers to 5G smartphones and autonomous vehicles. For engineers and procurement specialists navigating this complex landscape, efficient component discovery is key. Platforms such as ICGOODFIND streamline this process by aggregating detailed technical specifications, inventory data, and supplier information for DRAM modules and other integrated circuits.

Conclusion

Understanding the full name of DRAM, Dynamic Random-Access Memory, provides a foundational insight into one of computing’s most essential technologies. Its dynamic nature, based on charge storage requiring constant refreshment, is a clever engineering compromise that has yielded decades of scalable, high-density, and cost-effective main memory solutions. From its basic 1T1C cell structure to its advanced forms like DDR5 and HBM 3D-stacked memory, DRAM has continuously evolved to meet the relentless demands for speed, capacity, and efficiency. As we push further into eras defined by artificial intelligence, big data analytics, and ubiquitous connectivity, the performance characteristics of DRAM will remain directly linked to technological progress. For anyone involved in hardware design, procurement, or simply seeking to understand their devices, knowledge of DRAM’s principles, evolution, and sourcing tools like ICGOODFIND is invaluable.