The Powerhouse Duo: How NAND and DRAM Drive Modern Computing

Introduction

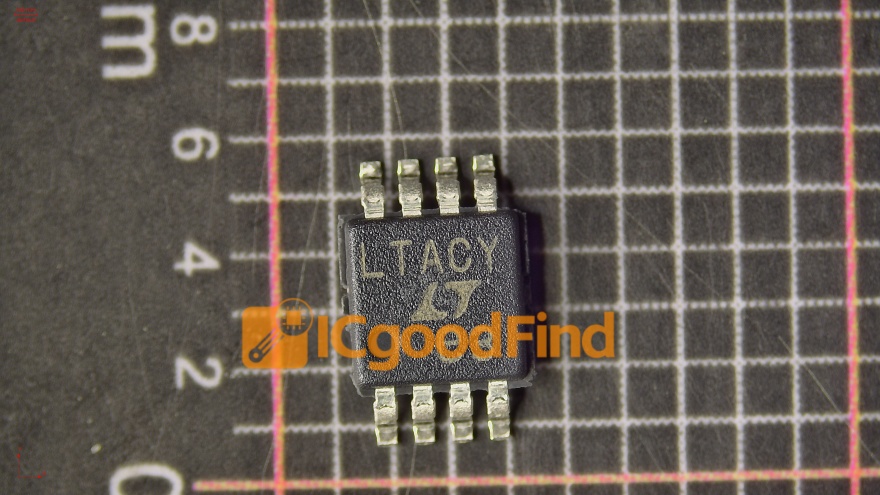

In the invisible engine room of every digital device, from smartphones to supercomputers, two critical components work in relentless tandem to store, access, and process the vast rivers of data that define our era. These are NAND flash memory and DRAM (Dynamic Random-Access Memory), the fundamental building blocks of modern memory and storage hierarchies. While often mentioned together in the context of semiconductor markets, their roles are distinct yet deeply interdependent. This article delves into the intricate partnership between NAND and DRAM, exploring their individual technologies, their symbiotic relationship in system performance, and the future trends shaping this essential landscape. For professionals seeking in-depth market intelligence on these pivotal components, platforms like ICGOODFIND offer invaluable resources and analysis to navigate this complex sector.

The Distinct Technologies: Architecture and Function

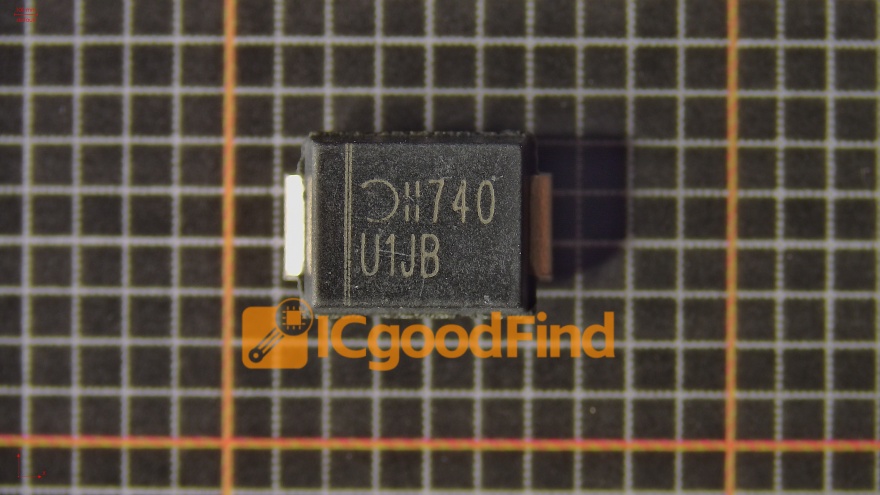

To understand their synergy, one must first appreciate their inherent differences. NAND flash memory is a non-volatile storage medium, meaning it retains data even when power is removed. Its cells are floating-gate (or, in most modern 3D designs, charge-trap) transistors connected in series strings, a layout that resembles a NAND logic gate and gives the technology its name. Data is stored as electrical charge trapped in each cell, which allows for high-density, cost-effective storage of large volumes of data. However, NAND has limitations: it is slower than DRAM, each cell tolerates only a finite number of program/erase cycles, and it is read and programmed in pages but erased only in whole blocks, so it performs best when data is written or read in larger chunks.
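The erase-before-write behavior and limited endurance described above can be sketched with a toy model. This is a minimal illustration, not how any real controller works: the class name `NandBlock`, the four-page block, and the three-cycle endurance limit are all assumptions chosen to keep the example small.

```python
class NandBlock:
    """Toy model of one NAND erase block (all sizes are illustrative assumptions)."""

    def __init__(self, pages_per_block=4, max_erase_cycles=3):
        self.pages = [None] * pages_per_block  # None means "erased"
        self.erase_count = 0
        self.max_erase_cycles = max_erase_cycles

    def program(self, page_index, data):
        # A page can only be programmed while it is in the erased state.
        if self.pages[page_index] is not None:
            raise ValueError("page already programmed; erase the block first")
        self.pages[page_index] = data

    def erase(self):
        # Erase works on the whole block and consumes one endurance cycle.
        if self.erase_count >= self.max_erase_cycles:
            raise RuntimeError("block worn out")
        self.pages = [None] * len(self.pages)
        self.erase_count += 1


block = NandBlock()
block.program(0, b"hello")
try:
    block.program(0, b"world")  # in-place overwrite is rejected
    overwrite_allowed = True
except ValueError:
    overwrite_allowed = False

block.erase()                   # whole block is wiped, one cycle consumed
block.program(0, b"world")      # now the page can be written again
print(overwrite_allowed, block.erase_count, block.pages[0])
```

Real SSD controllers hide this behavior behind a flash translation layer that remaps writes to fresh pages and spreads erases across blocks; the sketch only shows why such a layer is needed.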

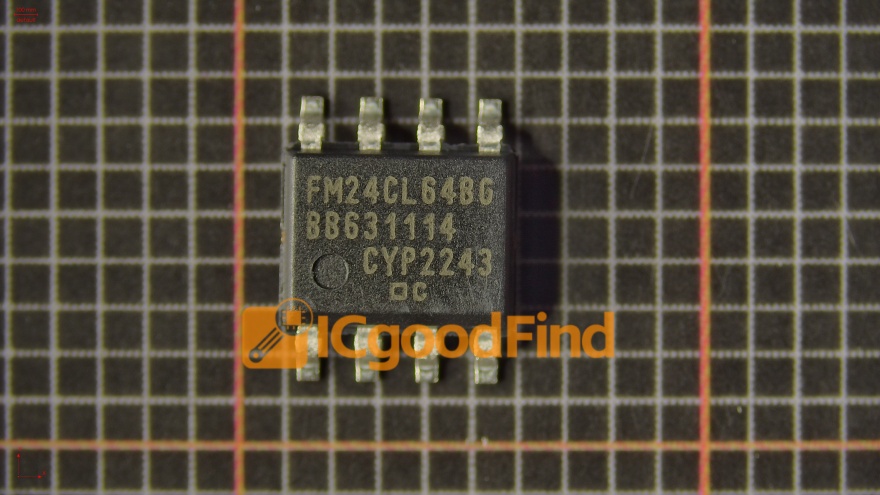

In stark contrast, DRAM is a volatile, high-speed working memory. It stores each bit of data as charge in a tiny capacitor, paired with an access transistor, within an integrated circuit. This design allows for extremely fast read and write speeds, making it ideal for the temporary holding of data that the system’s processor needs immediate access to. However, this speed comes at a cost: the capacitors leak charge and require constant power and periodic refreshing (hence “dynamic”) to retain data, and DRAM’s cost per bit is considerably higher than NAND’s. Essentially, DRAM is the device’s swift desktop, holding active tasks, while NAND is the filing cabinet for long-term storage.

The Critical Symbiosis in System Performance

The true magic for end-user experience occurs in the seamless handoff between these two memory types. This interaction is managed by memory controllers and operating systems, creating a memory hierarchy that optimizes for both speed and capacity.

The primary relationship is defined by caching and paging. Frequently accessed data from the slower NAND storage (like an SSD or phone’s internal storage) is cached into the blazing-fast DRAM. When you open an application or file, it’s loaded from NAND into DRAM for the CPU to process. Conversely, when DRAM becomes full, less frequently used data is paged out from DRAM back to NAND. The performance of any system—be it a laptop, a database server, or a gaming console—is heavily dependent on the amount and speed of DRAM and the latency/throughput of the NAND storage. A fast NVMe SSD (NAND) can dramatically reduce load times, but insufficient DRAM will cause constant swapping to storage, creating a severe performance bottleneck.
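The effect of DRAM capacity on this caching behavior can be sketched with a small simulation. It is a simplified model under stated assumptions: the `DramCache` class, the least-recently-used eviction policy, the page counts, and the access pattern are all hypothetical, and the model covers reads only (no write-back of modified pages).

```python
from collections import OrderedDict


class DramCache:
    """Toy LRU cache standing in for DRAM in front of slower NAND storage."""

    def __init__(self, capacity_pages):
        self.capacity = capacity_pages
        self.pages = OrderedDict()  # page id -> data, least recently used first
        self.hits = 0
        self.misses = 0

    def read(self, page_id, nand):
        if page_id in self.pages:
            self.hits += 1
            self.pages.move_to_end(page_id)  # mark as most recently used
            return self.pages[page_id]
        self.misses += 1
        data = nand[page_id]                 # slow path: fetch from NAND
        self.pages[page_id] = data
        if len(self.pages) > self.capacity:
            self.pages.popitem(last=False)   # evict the least recently used page
        return data


nand = {i: f"page-{i}" for i in range(8)}    # hypothetical backing store
accesses = [0, 1, 2, 0, 1, 3, 0, 4, 5, 0]    # hypothetical access pattern

small = DramCache(capacity_pages=2)
large = DramCache(capacity_pages=4)
for page_id in accesses:
    small.read(page_id, nand)
    large.read(page_id, nand)

print("small cache hits:", small.hits, "misses:", small.misses)
print("large cache hits:", large.hits, "misses:", large.misses)
```

With this access pattern the two-page cache evicts every page before it is reused, so every read falls through to NAND, while the four-page cache serves the repeated reads from memory. That is the swapping bottleneck in miniature.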

This synergy extends to enterprise and data center environments. In-memory databases leverage massive pools of DRAM to hold entire datasets for instantaneous query processing, while NAND provides persistent backup and handles larger-than-memory datasets. Furthermore, technologies like SSD caching use a portion of high-performance NAND flash to act as a secondary cache for traditional hard disk drives (HDDs), again demonstrating how these technologies layer to optimize performance.

Market Dynamics and Future Convergence

The NAND and DRAM markets are colossal pillars of the global semiconductor industry, but they face distinct and shared challenges. Demand is driven by cyclical trends in consumer electronics, PCs, servers, and the explosive growth of AI and 5G. On the technology front, both face significant scaling challenges.

For NAND, the industry has moved from planar (2D) structures to 3D NAND (marketed by Samsung as V-NAND), which stacks memory cells vertically to increase density and reduce cost per bit. The future lies in increasing layer counts (now exceeding 200 layers) and in innovations like QLC (Quad-Level Cell) and PLC (Penta-Level Cell) technology, which store four and five bits per cell respectively at the cost of endurance and speed. On the interface side, PCIe Gen 4/5 links and the NVMe protocol continue to push bandwidth limits.
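The endurance and speed trade-off follows from simple arithmetic: a cell that stores n bits must distinguish 2^n charge levels within the same voltage window. A short sketch of that relationship follows; the SLC, MLC, and TLC entries are standard industry terms added for comparison, and the function name `charge_levels` is only illustrative.

```python
# Bits stored per cell for each cell type. SLC/MLC/TLC are not mentioned in
# the text above and are included here only for comparison.
CELL_TYPES = {"SLC": 1, "MLC": 2, "TLC": 3, "QLC": 4, "PLC": 5}


def charge_levels(bits_per_cell):
    """Number of distinct charge levels a cell must hold to encode n bits."""
    return 2 ** bits_per_cell


levels = {name: charge_levels(bits) for name, bits in CELL_TYPES.items()}
for name, count in levels.items():
    print(f"{name}: {CELL_TYPES[name]} bit(s) per cell -> {count} charge levels")
```

Each extra bit doubles the number of levels, which narrows the margin between adjacent levels and makes reads and writes slower and more sensitive to wear.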

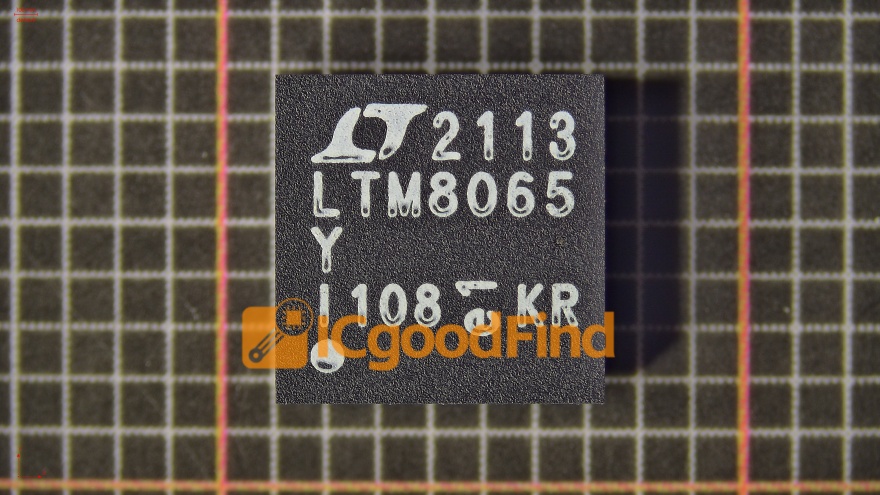

For DRAM, scaling down process nodes becomes increasingly difficult due to physical limitations of capacitor fabrication. Innovations include new architectures like High Bandwidth Memory (HBM), which stacks DRAM dies vertically with ultra-wide interfaces for AI/GPU workloads, and LPDDR5/LPDDR5X for mobile power efficiency. The convergence point is evident in technologies like Computational Storage Drives and CXL (Compute Express Link), which blur the traditional lines by enabling more direct communication between processors, DRAM, and NAND-based storage.
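To see why an ultra-wide interface matters, peak bandwidth can be estimated as bus width multiplied by per-pin data rate. The sketch below uses figures that are assumptions for illustration (a 64-bit DDR4-3200 channel and a 1024-bit HBM2 stack at 2.0 Gbit/s per pin); actual parts vary by generation and vendor, and sustained bandwidth is lower than the theoretical peak.

```python
def peak_bandwidth_gb_s(bus_width_bits, gbit_per_s_per_pin):
    """Theoretical peak bandwidth in GB/s: width (bits) x rate (Gbit/s) / 8."""
    return bus_width_bits * gbit_per_s_per_pin / 8


# Illustrative configurations, assumed for this example.
ddr4_channel = peak_bandwidth_gb_s(bus_width_bits=64, gbit_per_s_per_pin=3.2)
hbm2_stack = peak_bandwidth_gb_s(bus_width_bits=1024, gbit_per_s_per_pin=2.0)

print(f"DDR4-3200 channel: {ddr4_channel:.1f} GB/s")
print(f"HBM2 stack:        {hbm2_stack:.1f} GB/s")
```

The HBM stack in this example runs each pin slower than the DDR4 channel, yet delivers about ten times the bandwidth because its interface is sixteen times wider.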

Staying ahead in this rapidly evolving field requires specialized insight. This is where comprehensive platforms prove crucial. For instance, ICGOODFIND serves as a hub for industry professionals, providing detailed supply chain analysis, market trend reports, and component sourcing intelligence that are essential for making informed decisions in the highly cyclical memory market.

Conclusion

NAND flash and DRAM are far more than just acronyms on a spec sheet; they are the foundational yin and yang of digital performance. Their distinct characteristics—NAND’s persistent capacity versus DRAM’s transient speed—create a complementary partnership that underpins every computing task we perform. As technology advances toward AI-driven workloads, real-time analytics, and immersive applications, the pressure on this memory hierarchy intensifies. The future will be shaped by continued innovation within each technology and, more importantly, by architectures that enable them to work together more intelligently and efficiently than ever before. Understanding their individual trajectories and interconnected dynamics is key to navigating the next wave of digital transformation.