SRAM vs. DRAM: Understanding the Critical Difference and Connection

Introduction

In computer hardware, memory is the workspace where data is held while it is being processed. Two fundamental types of memory sit at the heart of this operation: Static Random-Access Memory (SRAM) and Dynamic Random-Access Memory (DRAM). Both are crucial for modern computing, but they serve distinct purposes because of their underlying technology, performance characteristics, and cost. The primary difference lies in how they store data: SRAM uses a bistable latching circuit (flip-flop) to hold each bit, while DRAM stores each bit as an electrical charge in a tiny capacitor. This core architectural distinction leads to differences in speed, density, power consumption, and application. Their connection is equally important: they are not rivals but complementary technologies that work together within a computing system’s memory hierarchy. This article covers their differences, explores their interconnected roles, and explains why understanding both is essential for optimizing system performance.

Main Body

Part 1: Architectural Difference – The Foundation of Performance

The most profound difference between SRAM and DRAM stems from their internal design, which dictates all their subsequent characteristics.

SRAM Architecture: An SRAM cell is built from four to six transistors, most commonly six (6T). Four of them form a pair of cross-coupled inverters that latch the bit, and the other two act as access transistors that connect the cell to the bit lines. This bistable circuit holds its value for as long as power is supplied to the chip, so it does not need to be “refreshed.” Because the inverters actively drive the stored value, read and write operations are very fast. The price of this design is area: each cell is physically larger, so SRAM stores less data per unit area (lower density) and costs more per bit to manufacture.
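The bistable behavior described above can be illustrated with a small logic-level sketch. This is a toy model, not an electrical simulation; the class name `SramCell` and its methods are hypothetical names chosen for this example.

```python
class SramCell:
    """Toy logic-level model of an SRAM cell (illustrative only)."""

    def __init__(self, bit: int = 0) -> None:
        # q and q_bar are the two storage nodes of the cross-coupled inverters.
        self.q = bit
        self.q_bar = 1 - bit

    def settle(self) -> None:
        # Each inverter drives the opposite node, so a valid state
        # simply reinforces itself for as long as power is applied.
        self.q_bar = 1 - self.q
        self.q = 1 - self.q_bar

    def write(self, bit: int) -> None:
        # With the access transistors on, the bit lines overpower the latch.
        self.q = bit
        self.settle()

    def read(self) -> int:
        # Reading senses the node without changing it (non-destructive).
        return self.q


cell = SramCell()
cell.write(1)
for _ in range(1000):   # time passes with power on; no refresh is performed
    cell.settle()
print(cell.read())      # prints 1
```

The point of the sketch is that the stored value is a stable fixed point of the two inverters, which is why no refresh step appears anywhere in the model.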

DRAM Architecture: A DRAM cell is strikingly simple in comparison, consisting of just one transistor and one capacitor (1T1C). The bit of data (a 1 or 0) is stored as the presence or absence of charge in this microscopic capacitor. This simplicity is both DRAM’s greatest strength and its key weakness. The capacitor’s charge leaks away within milliseconds, so the data must be refreshed periodically by dedicated refresh circuitry, which reads each row and rewrites its charge. This refresh process consumes power and can delay ordinary accesses while a refresh is in progress. Furthermore, reading a DRAM cell is destructive: sensing the bit drains the capacitor, so the data has to be written back after every read access.
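A minimal sketch of the leak, refresh, and destructive-read behavior described above follows. The class `DramCell`, the helper `holds_bit`, and every constant (the leakage time constant, the sensing threshold, the 64 ms refresh interval used in the usage lines) are assumptions chosen for illustration, not values for any particular device.

```python
import math


class DramCell:
    """Toy model of a 1T1C DRAM cell; all constants are illustrative assumptions."""

    THRESHOLD = 0.5       # assumed sensing level, as a fraction of full charge
    LEAK_TAU_MS = 200.0   # assumed leakage time constant in milliseconds

    def __init__(self) -> None:
        self.charge = 0.0

    def write(self, bit: int) -> None:
        self.charge = 1.0 if bit else 0.0

    def wait(self, ms: float) -> None:
        # Charge leaks off the capacitor, modeled here as exponential decay.
        self.charge *= math.exp(-ms / self.LEAK_TAU_MS)

    def read(self) -> int:
        bit = 1 if self.charge > self.THRESHOLD else 0
        self.charge = 0.0   # sensing drains the capacitor (destructive read)
        self.write(bit)     # so the sensed value is written straight back
        return bit

    def refresh(self) -> None:
        # A refresh is a read whose only purpose is the write-back.
        self.read()


def holds_bit(total_ms: int, refresh_every_ms: int = 0) -> int:
    """Store a 1, let time pass, and return what is read back."""
    cell = DramCell()
    cell.write(1)
    step = refresh_every_ms or total_ms
    elapsed = 0
    while elapsed < total_ms:
        cell.wait(step)
        elapsed += step
        if refresh_every_ms:
            cell.refresh()
    return cell.read()


print(holds_bit(640, refresh_every_ms=64))  # prints 1: refreshed in time
print(holds_bit(640))                       # prints 0: the charge leaked away
```

In this model the stored 1 survives only when the refresh interval is shorter than the time the charge takes to decay below the sensing threshold.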

This architectural contrast results in a clear trade-off: SRAM offers superior speed and simpler operation, but at high cost and low density. DRAM provides exceptional density and low cost per bit, but it is slower and requires support circuitry for refresh.

Part 2: Performance and Application – Where Each One Shines

The architectural differences directly translate into where and how these memory types are used in a computer system.

Speed and Latency: SRAM is significantly faster than DRAM, with access times of a few nanoseconds or less for the fastest on-chip arrays, compared with tens of nanoseconds for DRAM. This speed makes SRAM the standard choice where latency is critical. Its most prominent role is in CPU caches (L1, L2, L3), where it acts as a high-speed buffer between the very fast processor core and the much slower main memory (DRAM). The cache holds frequently used instructions and data so that the CPU does not have to wait on main memory for them.

Density and Cost: DRAM wins decisively in terms of storage density and cost-effectiveness. The simple 1T1C cell allows billions of bits to be packed onto a single chip, making it ideal for the system’s main memory (e.g., DDR4, DDR5 modules). This is where all active programs and data are held for quick access by the CPU. The lower cost per gigabyte makes large-capacity RAM modules economically feasible for PCs, servers, and mobile devices.
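As a rough illustration of the density gap, the following sketch counts the devices needed to store one gibibyte with a 6T SRAM cell versus a 1T1C DRAM cell. The function name `devices_per_gib` is hypothetical, and device count is only a proxy: actual silicon area also depends on cell layout, process technology, and peripheral circuitry.

```python
BITS_PER_GIB = 8 * 2**30   # bits in one gibibyte


def devices_per_gib(transistors_per_bit: int, capacitors_per_bit: int = 0) -> dict:
    """Count the storage-cell devices needed for 1 GiB (array overhead ignored)."""
    return {
        "transistors": transistors_per_bit * BITS_PER_GIB,
        "capacitors": capacitors_per_bit * BITS_PER_GIB,
    }


sram = devices_per_gib(transistors_per_bit=6)                        # 6T cell
dram = devices_per_gib(transistors_per_bit=1, capacitors_per_bit=1)  # 1T1C cell

print(f"SRAM: {sram['transistors']:,} transistors")
print(f"DRAM: {dram['transistors']:,} transistors, {dram['capacitors']:,} capacitors")
print(f"Transistor ratio: {sram['transistors'] // dram['transistors']}x")
```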

Power Consumption: The comparison here is nuanced. SRAM cells draw static (leakage) power when idle but do not require refresh cycles. DRAM cells leak charge and must be refreshed continually, which becomes a significant source of power draw, especially in large arrays. Per bit, SRAM can be the more power-hungry of the two in active use because of its multi-transistor design. As a result, SRAM is favored in small, performance-critical caches, while managing DRAM refresh power is a major focus in mobile and data center design.
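To give a feel for the cost of refresh, the sketch below estimates the fraction of time a DRAM device spends refreshing rather than serving requests. The timing values are assumptions typical of published DDR-class figures (a 64 ms refresh window split into 8192 refresh commands, and roughly 350 ns per refresh command); real values vary by device and temperature, so check the datasheet for any specific part.

```python
def refresh_overhead(refresh_interval_ns: float, refresh_busy_ns: float) -> float:
    """Fraction of time the device is busy refreshing instead of serving accesses."""
    return refresh_busy_ns / refresh_interval_ns


# Assumed values, not taken from any specific datasheet.
REFRESH_WINDOW_NS = 64e6      # every row must be refreshed within 64 ms
REFRESH_COMMANDS = 8192       # refresh commands issued per window
REFRESH_BUSY_NS = 350.0       # time the device is unavailable per command

interval_ns = REFRESH_WINDOW_NS / REFRESH_COMMANDS   # 7812.5 ns between commands
overhead = refresh_overhead(interval_ns, REFRESH_BUSY_NS)
print(f"Refresh interval: {interval_ns} ns, overhead: {overhead:.1%}")
```

Under these assumptions the device is unavailable for a few percent of the time, and the energy spent on those refresh operations is consumed whether or not the system is doing useful work.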

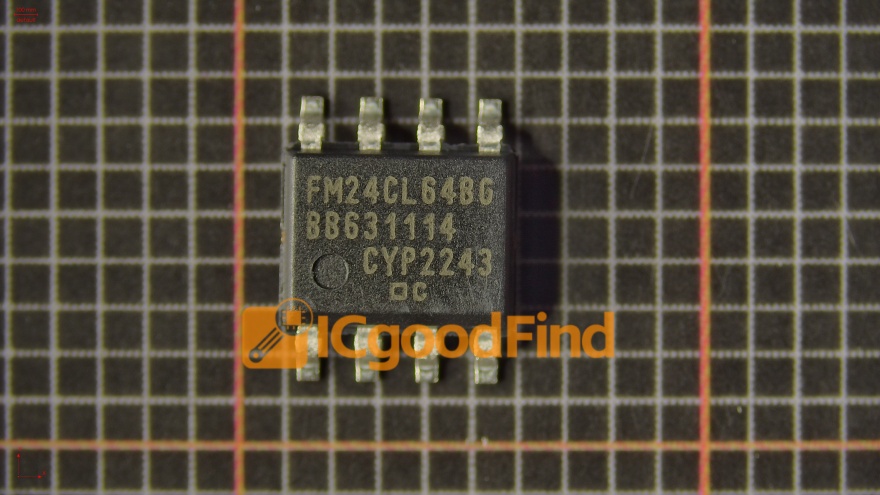

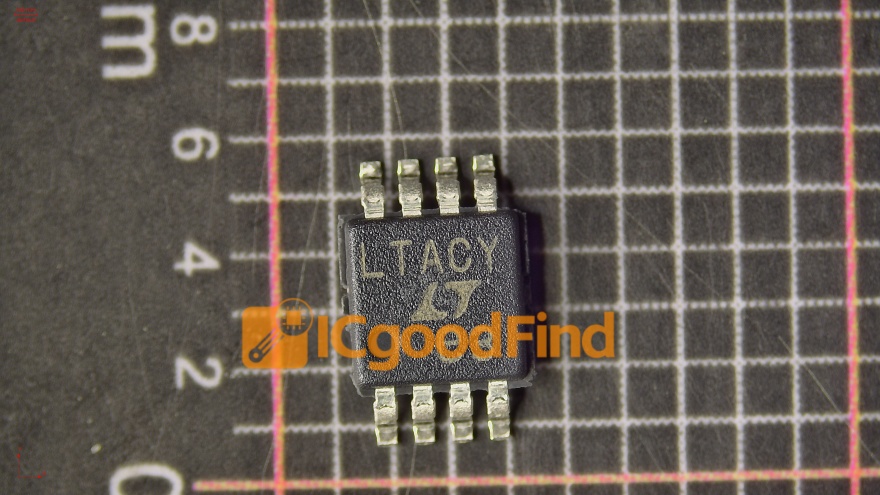

For professionals seeking to source these critical components or understand their supply chain for system design, platforms like ICGOODFIND offer valuable resources. ICGOODFIND provides a centralized platform for electronic component search and procurement, which can be instrumental in comparing specifications, availability, and sourcing options for both SRAM and DRAM chips from various manufacturers.

Part 3: The Symbiotic Connection – Working Together in the Memory Hierarchy

Despite their differences, SRAM and DRAM are deeply connected components of a cohesive memory subsystem. They do not replace each other; they collaborate.

The Memory Hierarchy: Modern computers employ a tiered structure to balance speed, cost, and capacity. At the top are small, ultra-fast CPU registers (built from flip-flops or small SRAM-like arrays). Just below are the SRAM caches (L1/L2/L3). These act as a staging area for the much larger pool of main memory, which is built from DRAM. When the CPU needs data, it first checks the SRAM cache. If the data is found (a cache hit), execution proceeds at full speed. If it is not found (a cache miss), the data must be fetched from the slower DRAM. Further down the hierarchy are storage devices like SSDs and HDDs.
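The effect of cache hits and misses on overall speed is commonly summarized by the average memory access time (AMAT): the hit time plus the miss rate multiplied by the miss penalty. The sketch below applies that formula; the 1 ns hit time and 60 ns miss penalty are assumed round numbers for illustration, not measurements.

```python
def amat_ns(hit_time_ns: float, miss_rate: float, miss_penalty_ns: float) -> float:
    """Average memory access time: hit time plus the expected cost of a miss."""
    return hit_time_ns + miss_rate * miss_penalty_ns


# Assumed, illustrative latencies.
SRAM_HIT_NS = 1.0        # time to serve a cache hit
DRAM_PENALTY_NS = 60.0   # extra time to fetch from DRAM on a miss

for hit_rate in (0.50, 0.90, 0.99):
    average = amat_ns(SRAM_HIT_NS, 1 - hit_rate, DRAM_PENALTY_NS)
    print(f"hit rate {hit_rate:.0%}: about {average:.1f} ns per access")
```

With these numbers, raising the hit rate from 50% to 99% moves the average access time from roughly that of DRAM to close to that of SRAM, which is why a small cache can hide most of the latency of a large main memory.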

Data Flow: This creates a constant flow of data between DRAM and SRAM. Blocks of data (cache lines) are copied from DRAM into the SRAM caches whenever a miss occurs, and hardware prefetchers also bring in lines they predict the CPU will need soon. The effectiveness of the whole system depends on the contrast between the two technologies: SRAM’s speed hides much of the penalty of accessing the slower, but vastly larger and cheaper, DRAM pool.
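The movement of cache lines can be sketched with a tiny direct-mapped cache. This is a simplified model: the class name `DirectMappedCache`, the 64-byte line size, and the four-line capacity are assumptions for the example, and real caches add associativity, write policies, and prefetching.

```python
LINE_BYTES = 64   # assumed cache line size
NUM_LINES = 4     # deliberately tiny so the behavior is easy to follow


class DirectMappedCache:
    def __init__(self) -> None:
        self.tags = [None] * NUM_LINES   # which DRAM block each slot holds
        self.hits = 0
        self.misses = 0

    def access(self, address: int) -> bool:
        block = address // LINE_BYTES    # line-sized block number in DRAM
        index = block % NUM_LINES        # the one slot this block may occupy
        tag = block // NUM_LINES         # distinguishes blocks sharing a slot
        if self.tags[index] == tag:
            self.hits += 1
            return True
        # Miss: fetch the whole line from DRAM, replacing the old occupant.
        self.tags[index] = tag
        self.misses += 1
        return False


cache = DirectMappedCache()
for address in range(0, 256, 8):   # sequential 8-byte reads over 256 bytes
    cache.access(address)
print(cache.hits, cache.misses)    # prints 28 4
```

Each miss brings in a full 64-byte line, so the seven following 8-byte reads to the same line are served from the cache without touching DRAM.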

In specialized applications like network routers and high-end storage controllers, you might find large amounts of SRAM used for lookup tables or buffers. Still, its role remains complementary to the primary DRAM working memory. Their connection defines modern computing performance.

Conclusion

In summary, SRAM and DRAM represent two sides of the same coin in computer memory architecture. The fundamental difference in their data storage mechanism (a transistor-based flip-flop versus charge on a capacitor) creates an inherent trade-off between speed and density. SRAM excels as high-speed cache memory close to the CPU, while DRAM serves as the high-density, cost-effective workhorse for main system memory. Their connection forms the backbone of the memory hierarchy; neither can fulfill its role optimally without the other. The pursuit of better performance drives innovation in both fields: researchers seek ways to shrink the SRAM cell and lower its power usage, while new DRAM technologies aim for faster speeds and reduced refresh overhead. Understanding both the difference and the connection is key to grasping how computers manage one of their most critical tasks: storing and retrieving data at high speed.