Advantages and Disadvantages of SRAM and DRAM: A Comprehensive Guide

Introduction

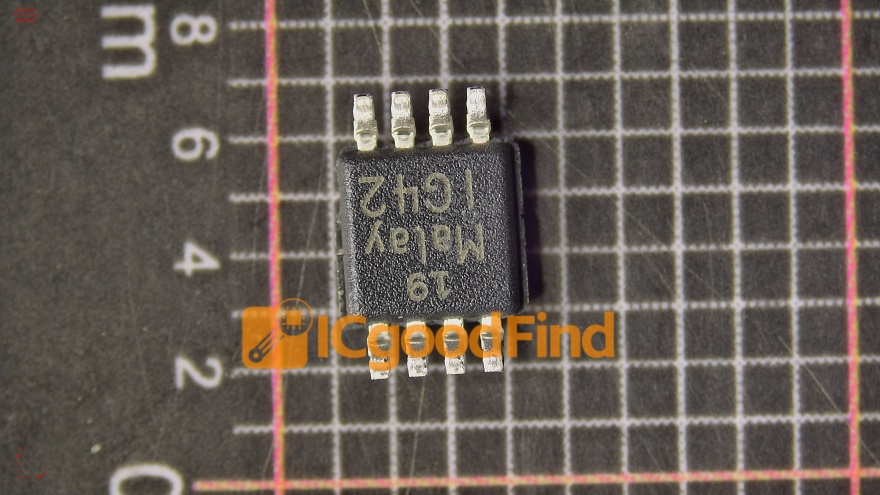

In the intricate world of computer architecture, memory is the cornerstone of performance and efficiency. Two fundamental types of semiconductor memory dominate the landscape: Static Random-Access Memory (SRAM) and Dynamic Random-Access Memory (DRAM). While both serve the critical function of storing data for quick access by the processor, their underlying technologies, performance characteristics, and applications differ dramatically. Understanding the advantages and disadvantages of SRAM and DRAM is essential for anyone involved in hardware design, system optimization, or computing technology. This article delves deep into the mechanics of each memory type, comparing their strengths and weaknesses to provide a clear picture of their roles in modern computing systems. For professionals seeking detailed component analysis and sourcing, platforms like ICGOODFIND offer invaluable resources for comparing memory specifications and suppliers.

Main Body

Part 1: Understanding SRAM – The Speed Demon

Static RAM (SRAM) is a type of volatile memory that uses bistable latching circuitry (typically six transistors per memory cell) to store each bit of data. This design does not require periodic refreshing to maintain data integrity, as long as power is supplied.
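A toy logic-level model shows why the bistable latch needs no refresh. It is a sketch under a stated assumption: the two cross-coupled inverters (four of the six transistors) are treated as ideal Boolean inverters, and the two access transistors appear only as a hypothetical `write()` method that forces both storage nodes. Leakage, noise margins, and transistor sizing are ignored.

```python
# Toy logic-level model of the storage core of a 6T SRAM cell.
# ASSUMPTION: the two cross-coupled inverters are ideal Boolean inverters;
# the two access transistors are modelled only by write() forcing both
# nodes. Leakage, noise margins and sizing are ignored.


class CrossCoupledLatch:
    def __init__(self, bit: int = 0) -> None:
        # q and q_bar are the two storage nodes; each inverter drives the other.
        self.q = bit
        self.q_bar = 1 - bit

    def settle(self, steps: int = 10) -> None:
        # Let the inverters drive each other repeatedly. A consistent state
        # (q != q_bar) reproduces itself, so nothing changes over time.
        for _ in range(steps):
            self.q = 1 - self.q_bar
            self.q_bar = 1 - self.q

    def write(self, bit: int) -> None:
        # Access transistors on: the bitlines force both nodes, then release.
        self.q, self.q_bar = bit, 1 - bit
        self.settle()

    def read(self) -> int:
        # Sensing q does not disturb the latch, so the read is non-destructive.
        return self.q


cell = CrossCoupledLatch()
cell.write(1)
cell.settle(steps=1000)  # time passes with power applied and no refresh
print(cell.read())  # 1
```

Because each inverter keeps re-driving the other, the stored value persists for as long as the model runs, which mirrors the "static" behavior described above as long as power is supplied.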

Advantages of SRAM:

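- Extremely High Speed: SRAM is significantly faster than DRAM, offering much lower access times and higher bandwidth. This speed stems from its simple addressing (the full address is applied at once rather than in separate row and column phases) and its static nature: a read does not destroy the stored value, so no write-back step is needed, and the CPU can often retrieve data without wait states.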

- Low Power Consumption in Active State: While idle (leakage) power can be a concern, SRAM typically spends less energy per read or write than DRAM, because an access does not have to open an entire row, sense a tiny capacitor charge, and write that charge back.

- Simplicity of Interface: The memory controller does not need to manage a refresh cycle for SRAM, simplifying its integration into systems where deterministic timing is crucial.

- Data Retention Stability: As it doesn’t need refreshing, SRAM is not susceptible to data loss from refresh cycle interruptions, making its data retention more straightforward as long as the power supply is stable.

Disadvantages of SRAM:

- High Cost: The primary drawback is cost. SRAM’s complex six-transistor cell structure makes it much more expensive per bit than DRAM. It occupies more silicon real estate, reducing the amount of memory that can be fabricated on a single chip.

- Low Density: Due to its larger cell size, SRAM has a much lower storage density. You cannot pack as many memory bits into a given area compared to DRAM.

- Higher Static Power Consumption: Although efficient during activity, SRAM can consume more static power when idle, because the transistors in every cell leak current continuously while the latch holds its state. In large arrays this leakage adds up to significant power draw and heat.
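The cost and density gap can be put in rough numbers by comparing cell areas in units of F², where F is the process's minimum feature size. The figures below are assumed textbook ballpark values (about 140 F² for a 6T SRAM cell, about 6 F² for a 1T1C DRAM cell), not data for any specific process, and the sketch ignores peripheral circuitry such as decoders and sense amplifiers.

```python
# Rough cell-area comparison in units of F^2 (F = minimum feature size).
# ASSUMED ballpark figures, not data for any specific process.
SRAM_CELL_AREA_F2 = 140.0  # 6T SRAM cell (assumption)
DRAM_CELL_AREA_F2 = 6.0    # 1T1C DRAM cell (assumption)


def cells_per_area(area_f2: float, cell_area_f2: float) -> int:
    """Whole cells that fit in area_f2; peripheral logic is ignored."""
    return int(area_f2 // cell_area_f2)


def density_ratio(sram_f2: float = SRAM_CELL_AREA_F2,
                  dram_f2: float = DRAM_CELL_AREA_F2) -> float:
    """How many DRAM cells fit in the footprint of one SRAM cell."""
    return sram_f2 / dram_f2


block = 1_000_000.0  # an arbitrary patch of silicon, 1e6 F^2
print(cells_per_area(block, SRAM_CELL_AREA_F2))  # 7142
print(cells_per_area(block, DRAM_CELL_AREA_F2))  # 166666
print(round(density_ratio(), 1))                 # 23.3
```

Under these assumptions, the same silicon holds roughly twenty times more DRAM bits than SRAM bits, which is the root of the cost-per-bit difference.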

Because of its speed and cost profile, SRAM is predominantly used for small, high-performance buffers and caches inside microprocessors (L1, L2, L3 cache), router buffers, and other application-specific integrated circuits (ASICs) where speed is paramount and size is limited.

Part 2: Understanding DRAM – The High-Density Workhorse

Dynamic RAM (DRAM) stores each bit of data as charge on a tiny capacitor within an integrated circuit. Since capacitors leak charge, the data eventually fades unless it is refreshed periodically, typically within a window of a few tens of milliseconds (64 ms for DDR3 and DDR4 at normal operating temperature).
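The leak-and-refresh cycle can be sketched with a simple exponential decay model. The time constant `TAU_MS` is a hypothetical value chosen for illustration; real cells vary widely with temperature and manufacturing, and their leakage is not exactly exponential. A refresh here means sensing the bit against a VDD/2 threshold and restoring it to a full level.

```python
import math
from typing import Optional

VDD = 1.0                  # normalized supply; a stored 1 starts at VDD
SENSE_THRESHOLD = VDD / 2  # sense amplifier compares against VDD/2
TAU_MS = 200.0             # HYPOTHETICAL leakage time constant for one cell


def voltage_after(v0: float, elapsed_ms: float, tau_ms: float = TAU_MS) -> float:
    """Cell voltage after leaking for elapsed_ms (simple exponential decay)."""
    return v0 * math.exp(-elapsed_ms / tau_ms)


def simulate(total_ms: float, refresh_interval_ms: Optional[float]) -> int:
    """Store a 1, let it leak for total_ms, refreshing every
    refresh_interval_ms if given; return the bit read at the end."""
    v, t = VDD, 0.0
    step = refresh_interval_ms if refresh_interval_ms else total_ms
    while t < total_ms:
        dt = min(step, total_ms - t)
        v = voltage_after(v, dt)
        t += dt
        if refresh_interval_ms and t < total_ms:
            # Refresh = sense the bit, then restore it to a full level.
            v = VDD if v > SENSE_THRESHOLD else 0.0
    return 1 if v > SENSE_THRESHOLD else 0


retention_ms = TAU_MS * math.log(VDD / SENSE_THRESHOLD)  # about 138.6 ms here
print(simulate(1000, 64))    # 1: refreshed well inside the retention time
print(simulate(1000, None))  # 0: never refreshed, the charge leaks away
print(simulate(1000, 150))   # 0: refresh arrives after the bit has decayed
```

The last case shows why the refresh interval must be shorter than the worst-case retention time: a refresh that arrives too late faithfully "restores" the wrong value.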

Advantages of DRAM:

- Very High Density: The one-transistor, one-capacitor (1T1C) cell is extremely simple and small. This allows DRAM to achieve very high memory density, enabling chips of many gigabits and modules of many gigabytes.

- Low Cost Per Bit: The simple structure translates directly to economics. DRAM is substantially cheaper to manufacture per bit than SRAM, making it feasible to produce large amounts of main system memory (RAM) at a reasonable cost.

- Lower Static Power: When not being accessed or refreshed, DRAM arrays can consume very little power, as the capacitors passively hold charge (though refresh power is always required).
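The small 1T1C cell has a cost: its capacitor is tiny compared with the bitline it must drive. When the access transistor opens, the two capacitances share charge, and only a small voltage swing reaches the sense amplifier. The sketch below uses ideal charge conservation; the capacitance values are hypothetical round numbers, not figures for any real device.

```python
from typing import Tuple

# Ideal charge-sharing model of a DRAM read (charge conservation only).
# Capacitances are HYPOTHETICAL round numbers chosen for illustration.
VDD = 1.0
C_CELL_FF = 20.0     # storage capacitor, femtofarads (assumed)
C_BITLINE_FF = 80.0  # bitline parasitic capacitance, femtofarads (assumed)


def charge_share(v_cell: float, v_precharge: float,
                 c_cell: float, c_bitline: float) -> Tuple[float, float]:
    """Return (shared_voltage, bitline_swing) after the access transistor
    connects the cell to a bitline precharged to v_precharge."""
    shared = (c_cell * v_cell + c_bitline * v_precharge) / (c_cell + c_bitline)
    return shared, shared - v_precharge


v_one, swing_one = charge_share(VDD, VDD / 2, C_CELL_FF, C_BITLINE_FF)
v_zero, swing_zero = charge_share(0.0, VDD / 2, C_CELL_FF, C_BITLINE_FF)
print(round(swing_one, 3), round(swing_zero, 3))  # 0.1 -0.1
# The cell now sits at v_one (0.6 * VDD, not VDD): the read disturbed it,
# so the sense amplifier must drive the bitline to a full level to rewrite it.
```

The swing is only a fraction of the supply, which is why DRAM needs sensitive sense amplifiers and why every read ends with a write-back.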

Disadvantages of DRAM:

- Slower Speed: DRAM is slower than SRAM because each access must open a row, let the small capacitor charge develop a voltage on the bitline, amplify it, and write it back, since the read is destructive. Before a different row in the same bank can be opened, the bank must also be precharged.

- Refresh Overhead: The need for periodic refreshing is a major drawback. Refresh consumes power, and while a bank or rank is being refreshed it cannot serve reads or writes, which reduces the bandwidth available for normal traffic. Scheduling refresh also adds complexity to the memory controller design.

- Higher Complexity in Interface: To manage row and column addressing, bank states, timing constraints, and refresh, DRAM requires a sophisticated memory controller and interface protocols (such as DDR), and this machinery adds latency.

- Volatility and Data Integrity: Like SRAM, it is volatile. Furthermore, if refresh is delayed beyond the cells' retention time (for example, because of a controller fault or power problem), stored bits can flip and data is corrupted.
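The latency and refresh costs listed above can be sketched with a single-bank, open-page model. The timings are assumptions for illustration: 22-22-22 cycles (CL, tRCD, tRP) on a 1600 MHz command clock, in the style of a DDR4-3200 part, plus example refresh parameters of tRFC = 350 ns and tREFI = 7.8 µs. The model ignores bursts, tRAS, other banks, and command scheduling.

```python
from dataclasses import dataclass
from typing import Optional

CLOCK_NS = 0.625  # ASSUMED 1600 MHz command clock


@dataclass
class Timings:
    cl: int = 22    # CAS latency in cycles (assumed)
    trcd: int = 22  # ACTIVATE-to-READ delay in cycles (assumed)
    trp: int = 22   # PRECHARGE time in cycles (assumed)


class Bank:
    """One DRAM bank with an open-page policy (a simplified sketch)."""

    def __init__(self, t: Timings) -> None:
        self.t = t
        self.open_row: Optional[int] = None

    def read_latency_cycles(self, row: int) -> int:
        if self.open_row == row:      # row hit: data already in sense amps
            cycles = self.t.cl
        elif self.open_row is None:   # bank idle: ACTIVATE, then READ
            cycles = self.t.trcd + self.t.cl
        else:                         # row conflict: PRECHARGE, ACTIVATE, READ
            cycles = self.t.trp + self.t.trcd + self.t.cl
        self.open_row = row
        return cycles


def refresh_overhead(trfc_ns: float, trefi_ns: float) -> float:
    """Fraction of time the device is busy refreshing (tRFC per tREFI)."""
    return trfc_ns / trefi_ns


bank = Bank(Timings())
for row in (5, 5, 9):
    print(row, bank.read_latency_cycles(row) * CLOCK_NS, "ns")
# 5 27.5 ns   (idle bank)
# 5 13.75 ns  (row hit)
# 9 41.25 ns  (row conflict)
print(round(refresh_overhead(350.0, 7800.0) * 100, 1), "%")  # 4.5 %
```

Even in this simplified view, a row conflict costs three times a row hit, and refresh alone claims a few percent of the device's time.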

DRAM’s cost-effectiveness and high capacity make it the universal choice for a computer’s main memory (e.g., DDR4, DDR5 modules), graphics card memory (GDDR), and other applications where large pools of affordable, albeit slower, working memory are required.

Part 3: Head-to-Head Comparison and Application Synergy

The relationship between SRAM and DRAM is not one of pure competition but of strategic symbiosis within a computing system, organized through what is known as the memory hierarchy.

Performance vs. Capacity Trade-off: This is the core dichotomy. SRAM provides blistering speed at a high cost, while DRAM provides vast capacity at a low cost but with slower speed. Modern systems leverage both: a small amount of SRAM serves as cache memory directly on or near the CPU to hold frequently accessed data from the larger, slower pool of DRAM. This arrangement effectively bridges the speed gap between the processor and main memory.
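The standard way to quantify how a small cache bridges the gap is average memory access time (AMAT): hit time plus miss rate times miss penalty, applied level by level. The latencies and miss rates in the example are hypothetical placeholders, not measurements of any real system.

```python
from typing import List, Tuple


def amat(levels: List[Tuple[float, float]], memory_latency_ns: float) -> float:
    """Average memory access time for a cache hierarchy.

    levels: (hit_time_ns, local_miss_rate) pairs, closest to the CPU first.
    AMAT = hit_1 + miss_1 * (hit_2 + miss_2 * (... + memory_latency))
    """
    total = memory_latency_ns
    for hit_time, miss_rate in reversed(levels):
        total = hit_time + miss_rate * total
    return total


# HYPOTHETICAL numbers: 60 ns DRAM, 1 ns SRAM L1 (5% misses),
# optional 4 ns SRAM L2 (20% local misses).
print(amat([], 60.0))                                    # 60.0, no cache
print(round(amat([(1.0, 0.05)], 60.0), 2))               # 4.0
print(round(amat([(1.0, 0.05), (4.0, 0.2)], 60.0), 2))   # 1.8
```

With these placeholder values, a small SRAM cache that serves most requests brings the average access time close to SRAM speed while the DRAM behind it supplies the capacity.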

Power Efficiency Context: The power story is nuanced. SRAM can be more efficient for constant, high-frequency access (like in CPU caches). However, for bulk storage where most cells are idle, DRAM’s low static power can be advantageous, though its refresh overhead is a constant tax. System designers must evaluate power budgets based on specific access patterns.
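The access-pattern dependence can be captured with a first-order energy model: background power (leakage plus refresh) times time, plus energy per access times the number of accesses. Every parameter below is a hypothetical value in arbitrary units, chosen only to show how the comparison can flip; none of them describes a real device.

```python
from dataclasses import dataclass


@dataclass
class MemoryPowerModel:
    """First-order energy model; all parameters are HYPOTHETICAL inputs."""
    static_power: float       # leakage power (energy units per second)
    refresh_power: float      # average refresh power (0 for SRAM)
    energy_per_access: float  # dynamic energy per read or write

    def energy(self, accesses_per_s: float, seconds: float) -> float:
        background = (self.static_power + self.refresh_power) * seconds
        dynamic = self.energy_per_access * accesses_per_s * seconds
        return background + dynamic


def break_even_rate(a: MemoryPowerModel, b: MemoryPowerModel) -> float:
    """Access rate at which a and b consume equal energy per unit time.
    Above it, the option with the lower per-access energy wins."""
    if a.energy_per_access == b.energy_per_access:
        raise ValueError("equal per-access energy: no break-even rate")
    bg_a = a.static_power + a.refresh_power
    bg_b = b.static_power + b.refresh_power
    return (bg_b - bg_a) / (a.energy_per_access - b.energy_per_access)


# Arbitrary illustrative units, not device data.
sram = MemoryPowerModel(static_power=10.0, refresh_power=0.0, energy_per_access=1.0)
dram = MemoryPowerModel(static_power=2.0, refresh_power=3.0, energy_per_access=2.0)
print(break_even_rate(sram, dram))                     # 5.0
print(sram.energy(10, 1.0), dram.energy(10, 1.0))      # 20.0 25.0 (busy)
print(sram.energy(1, 1.0), dram.energy(1, 1.0))        # 11.0 7.0 (mostly idle)
```

The point is the shape of the trade-off rather than the numbers: a designer would plug in datasheet values and the expected access rate to see which side of the break-even point a workload falls on.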

Reliability and Complexity: SRAM systems are generally simpler to manage from a controller perspective but are physically larger on chip. DRAM systems introduce reliability concerns related to refresh timing and to disturbance errors such as Rowhammer, in which repeatedly activating one row can flip bits in neighboring rows, but they deliver unparalleled density.

Choosing between them isn’t an either/or decision for system architects; it’s about allocating each technology to the task that best matches its profile. For sourcing these critical components with specific parameters in mind, engineers often turn to specialized distributors. A platform such as ICGOODFIND can streamline this process by providing detailed filters and comparisons for various SRAM and DRAM chips from multiple vendors.

Conclusion

In summary, SRAM and DRAM are complementary technologies engineered to solve different aspects of the memory challenge. SRAM excels in applications demanding maximum speed where cost and size are secondary concerns, most visibly as CPU cache. Conversely, DRAM reigns supreme as the backbone of system main memory due to its excellent density and low cost per bit, despite its slower speed and refresh requirements. The ongoing evolution in computing, from AI and big data to IoT, continues to push the boundaries for both technologies, driving innovations like embedded DRAM (eDRAM) and advanced cache hierarchies. Ultimately, understanding their distinct advantages and disadvantages allows for smarter system design, better performance optimization, and more informed component selection in an increasingly data-driven world.