What Does DRAM Mean? Understanding the Memory That Powers Your Devices

In the world of computing and electronics, you’ve likely encountered the term “DRAM” countless times, whether when purchasing a new laptop or smartphone or when reading about the latest advances in technology. But what exactly does DRAM mean, and why is it so crucial to the performance of nearly every digital device we use today? At its core, DRAM stands for Dynamic Random-Access Memory, a type of semiconductor memory that serves as the primary working memory in computers and many other electronic systems. Unlike permanent storage such as hard drives or SSDs, DRAM is volatile: it temporarily holds data that the processor needs quick access to, but loses this information when power is turned off. This article explores the fundamental principles of DRAM, how it compares to other memory types, and its pivotal role in modern computing. By understanding DRAM, you’ll gain insight into one of the key components that determine the speed and efficiency of your devices. For those seeking in-depth technical resources or reliable components, platforms like ICGOODFIND offer valuable insights and access to a wide range of semiconductor products.

The Fundamentals of DRAM: How It Works

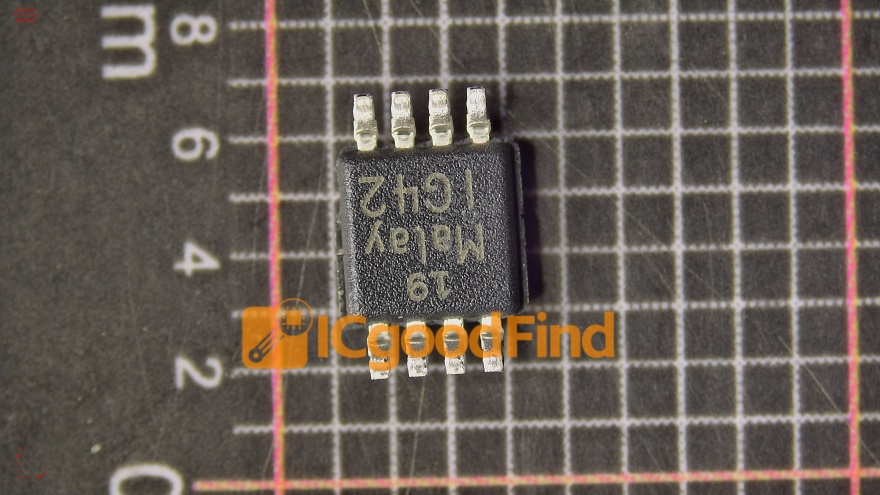

To grasp what DRAM means, it’s essential to understand its basic operation and design. DRAM stores each bit of data in a separate capacitor within an integrated circuit. Each capacitor is either charged or discharged, representing the binary states 1 and 0, respectively. However, because capacitors leak charge over time, the data stored in DRAM cells fades within tens of milliseconds to seconds, depending on the individual cell and its temperature. To prevent data loss, DRAM requires constant refreshing: the memory controller periodically issues refresh commands, and the chip reads each row of cells and writes the values back at full strength. Every row must be refreshed within a fixed window of a few tens of milliseconds (64 ms is the long-standing figure for DDR3 and DDR4 at normal temperatures). This refresh process is what makes DRAM “dynamic,” as opposed to “static” memory like SRAM (Static RAM), which does not need refreshing but uses more transistors per bit, making it larger and more expensive.
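
To make the leak-and-refresh cycle concrete, here is a toy Python simulation of one cell storing a 1. It is a sketch under stated assumptions, not a circuit model: the exponential leak, the 100 ms time constant, and the 50% sense threshold are hypothetical values picked for illustration; only the 64 ms refresh window reflects a common DDR figure.

```python
import math
from typing import Optional

# Toy model of a single DRAM cell. All constants are hypothetical and chosen
# only to illustrate why refresh is needed; real retention times vary per cell.
LEAK_TIME_CONSTANT_MS = 100.0  # hypothetical time constant of the charge leak
SENSE_THRESHOLD = 0.5          # remaining charge fraction still read as a 1
REFRESH_INTERVAL_MS = 64.0     # long-standing DDR3/DDR4 refresh window


def leak(charge: float, elapsed_ms: float) -> float:
    """Charge left after elapsed_ms of exponential leakage."""
    return charge * math.exp(-elapsed_ms / LEAK_TIME_CONSTANT_MS)


def sense(charge: float) -> int:
    """Sense amplifier: report 1 if enough charge remains, otherwise 0."""
    return 1 if charge >= SENSE_THRESHOLD else 0


def store_one_and_read(total_ms: float, refresh_ms: Optional[float],
                       step_ms: float = 1.0) -> int:
    """Write a 1, let it leak for total_ms, optionally refresh, then read it."""
    charge = 1.0  # writing a 1 fully charges the capacitor
    since_refresh = 0.0
    elapsed = 0.0
    while elapsed < total_ms:
        charge = leak(charge, step_ms)
        elapsed += step_ms
        since_refresh += step_ms
        if refresh_ms is not None and since_refresh >= refresh_ms:
            # Refresh = read the cell, then write the sensed value back at full strength.
            charge = 1.0 if sense(charge) else 0.0
            since_refresh = 0.0
    return sense(charge)


print(store_one_and_read(1000.0, None))                 # 0: never refreshed, the 1 leaked away
print(store_one_and_read(1000.0, REFRESH_INTERVAL_MS))  # 1: refreshed before crossing the threshold
print(store_one_and_read(1000.0, 100.0))                # 0: refreshed too late, a 0 was rewritten
```

The last case shows why the refresh interval must be shorter than the worst-case retention time: refresh only restores whatever the sense amplifier reads, so a value that has already decayed past the threshold is rewritten in its corrupted form.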

DRAM is organized as a grid of rows and columns, with each intersection holding one cell made of a single transistor and a capacitor (often called a 1T1C cell). This simple cell allows high density and relatively low cost per bit, which is why DRAM has become the standard for main system memory (often called RAM) in personal computers, servers, and consumer electronics. When your computer runs applications, the operating system loads the necessary data from slower storage (like an SSD) into DRAM so the CPU can access it rapidly. DRAM access latency is on the order of tens of nanoseconds, while even the fastest SSDs take microseconds to return data. However, refreshing does add small delays and consumes power, which engineers continuously work to minimize through advances in DRAM technology.
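
The row-and-column organization can be illustrated by how a flat cell index maps onto the grid. This minimal sketch assumes a hypothetical array of 65,536 rows by 1,024 columns, with the row taken from the high address bits; real memory controllers also select banks, ranks, and channels, and use their own (often interleaved) mappings.

```python
from typing import Tuple

# Hypothetical geometry for one toy DRAM array. Real chips split memory into
# multiple banks as well, which this sketch leaves out.
ROW_BITS = 16  # 2**16 = 65,536 rows
COL_BITS = 10  # 2**10 = 1,024 columns per row


def split_address(addr: int) -> Tuple[int, int]:
    """Map a flat cell index to (row, column): high bits pick the row, low bits the column."""
    if not 0 <= addr < (1 << (ROW_BITS + COL_BITS)):
        raise ValueError("address outside this toy array")
    row = addr >> COL_BITS
    col = addr & ((1 << COL_BITS) - 1)
    return row, col


def cell_count() -> int:
    """One 1T1C cell sits at every row/column intersection."""
    return (1 << ROW_BITS) * (1 << COL_BITS)


print(split_address(0))     # (0, 0)
print(split_address(1023))  # (0, 1023): last column of row 0
print(split_address(1024))  # (1, 0): first column of row 1
print(cell_count())         # 67108864 cells, i.e. 64 Mibit
```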

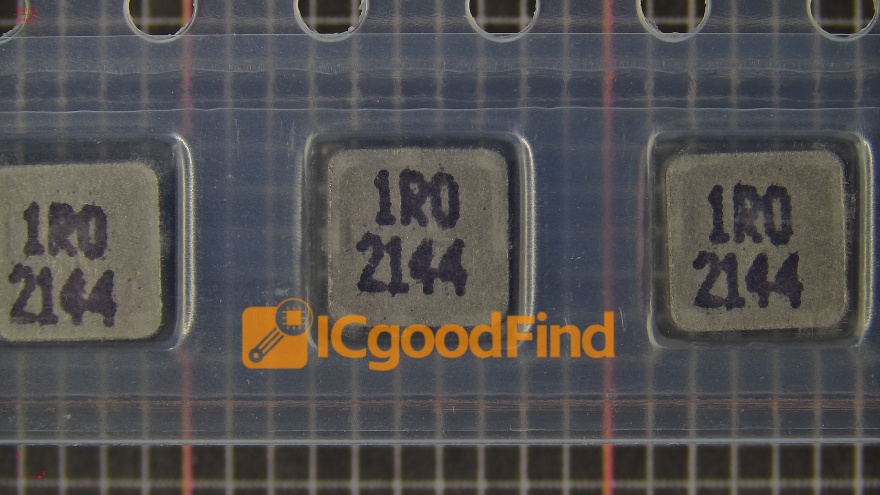

Over the decades, DRAM has evolved through various generations, from early asynchronous designs to synchronous types like SDRAM (Synchronous DRAM), which coordinate transfers with a clock signal. Today’s prevalent standards are DDR (Double Data Rate) SDRAM generations such as DDR4 and DDR5, which transfer data on both the rising and falling edges of the clock signal, doubling the data rate for a given clock frequency. Each new generation brings improvements in speed, bandwidth, and energy efficiency. For instance, mainstream DDR5 modules start at 4800 MT/s (million transfers per second), above DDR4’s top standard speed of 3200 MT/s, and run at 1.1 V instead of DDR4’s 1.2 V, supporting the demands of high-performance gaming, artificial intelligence, and data-intensive servers.
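
The effect of transferring on both clock edges can be worked out with simple arithmetic. The sketch below computes theoretical peak bandwidth as transfers per second times bytes per transfer, assuming the standard 64-bit data width of a desktop memory module; sustained real-world bandwidth is lower.

```python
def transfer_rate_mt_s(io_clock_mhz: float) -> float:
    """DDR moves data on both the rising and falling clock edges: 2 transfers per cycle."""
    return io_clock_mhz * 2


def peak_bandwidth_gb_s(rate_mt_s: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak = transfers per second x bytes moved per transfer (decimal GB/s)."""
    bytes_per_transfer = bus_width_bits / 8
    return rate_mt_s * 1e6 * bytes_per_transfer / 1e9


ddr4 = transfer_rate_mt_s(1600)   # DDR4-3200 uses a 1600 MHz I/O clock
print(ddr4)                       # 3200
print(peak_bandwidth_gb_s(ddr4))  # 25.6 (GB/s for one 64-bit module)
print(peak_bandwidth_gb_s(4800))  # 38.4 (DDR5-4800, 64 data bits as two 32-bit subchannels)
```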

DRAM vs. Other Memory Types: Key Differences

When discussing what DRAM means, it’s helpful to contrast it with other common memory technologies to appreciate its unique role. The most frequent comparison is between DRAM and SRAM (Static RAM). As mentioned earlier, SRAM uses flip-flop circuits (typically six transistors per bit) to store data without needing refresh cycles. This makes SRAM faster and more power-efficient for frequent accesses, but its larger cell size results in lower density and higher cost. Consequently, SRAM is primarily used in small-capacity, high-speed caches within CPUs (like L1, L2, and L3 caches), while DRAM serves as the larger main memory. In essence, SRAM acts as a speedy workspace for immediate processor tasks, whereas DRAM functions as a broader temporary holding area for active programs and data.
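
A rough way to see the density gap is to count the devices inside the storage cells. This sketch assumes a hypothetical 32 MiB capacity and counts only cell transistors and capacitors; device count is just a proxy for area, since it ignores peripheral circuitry and the actual cell layouts.

```python
from typing import Tuple

BITS_PER_MIB = 8 * 1024 * 1024  # bits in one mebibyte


def sram_cell_transistors(mib: int) -> int:
    """6T SRAM: six transistors for every stored bit."""
    return mib * BITS_PER_MIB * 6


def dram_cell_devices(mib: int) -> Tuple[int, int]:
    """1T1C DRAM: one access transistor plus one capacitor per bit."""
    bits = mib * BITS_PER_MIB
    return bits, bits  # (transistors, capacitors)


# A hypothetical 32 MiB capacity, counting storage cells only
# (decoders, sense amplifiers and other peripheral circuits are ignored).
print(sram_cell_transistors(32))  # 1610612736 transistors
print(dram_cell_devices(32))      # (268435456, 268435456)
```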

Another critical distinction lies between DRAM and non-volatile memory types like NAND flash (used in SSDs and USB drives) or ROM (Read-Only Memory). Unlike DRAM, non-volatile memories retain data without power, making them ideal for long-term storage. However, they are much slower to write and have limited write endurance compared to DRAM. For example, writing data to a NAND flash cell involves moving electrons through an insulator—a process that wears out the cell over time. In contrast, DRAM’s capacitor-based storage allows for virtually unlimited write cycles during its operational life. This durability under constant read/write operations is why DRAM is indispensable for tasks requiring frequent data updates.
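
To put limited write endurance in perspective, the sketch below estimates how quickly a single flash cell would wear out under a workload that DRAM absorbs indefinitely. The 3,000 program/erase-cycle rating is an assumed round figure, and the model deliberately ignores wear leveling and block-level erases, both of which real SSD controllers rely on to stretch endurance.

```python
def seconds_until_worn_out(rated_pe_cycles: int, rewrites_per_second: float) -> float:
    """Seconds before one flash cell exhausts its rated program/erase (P/E) cycles,
    assuming every rewrite of a value lands on that same cell (no wear leveling)."""
    return rated_pe_cycles / rewrites_per_second


# Assumed rating of 3,000 P/E cycles (an illustrative round number, not a datasheet value).
RATED_CYCLES = 3_000

print(seconds_until_worn_out(RATED_CYCLES, 1.0) / 60)  # 50.0 minutes at one rewrite per second
print(seconds_until_worn_out(RATED_CYCLES, 1000.0))    # 3.0 seconds at 1,000 rewrites per second
```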

Moreover, newer memory technologies like 3D XPoint (marketed as Intel Optane, a product line Intel discontinued in 2022) have tried to blur the lines between DRAM and storage by offering non-volatility with speeds approaching those of DRAM. Yet, due to cost and scalability factors, DRAM remains dominant for main memory applications. It’s also worth noting specialized variants like GDDR (Graphics DDR) used in graphics cards; while based on DDR principles, GDDR is optimized for higher bandwidth to handle graphical data streams. Understanding these differences clarifies why DRAM is tailored for high-capacity, fast-access temporary storage, balancing cost, speed, and density in ways other memories cannot.

The Role of DRAM in Modern Computing Systems

The significance of DRAM extends far beyond its technical definition; it is a cornerstone of contemporary computing performance. In any computing system, from smartphones to supercomputers, the CPU relies on a hierarchy of memory to execute tasks efficiently. At the top are small SRAM caches on the processor die itself; below them sits the main DRAM pool; finally comes persistent storage such as SSDs or HDDs. When you open an application or file on your computer or phone, the relevant code and data are moved from storage into DRAM so that the CPU can work with them quickly. The amount and speed of DRAM directly influence how many applications you can run simultaneously without slowdowns. When DRAM fills up, the operating system must move less-used data out to much slower storage and fetch it back on demand, which is why having too many browser tabs open on a system with insufficient RAM can make everything sluggish.
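
The hierarchy described above can be quantified with the classic average memory access time calculation: each access pays the lookup time of every level it reaches, and only misses go further down. All latencies and hit rates below are hypothetical, order-of-magnitude values, and treating an SSD access as a plain extra level is a simplification of how an operating system pages data in and out.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Level:
    name: str
    access_ns: float  # time to look up this level
    hit_rate: float   # fraction of accesses reaching this level that find their data here


def average_access_ns(levels: List[Level]) -> float:
    """Expected latency per access, walking down the hierarchy on every miss.

    Equivalent to t1 + m1 * (t2 + m2 * (t3 + ...)), where m = 1 - hit rate.
    The last level should have hit_rate 1.0 because it always holds the data.
    """
    total = 0.0
    reaching = 1.0  # fraction of all accesses that get as far as this level
    for level in levels:
        total += reaching * level.access_ns
        reaching *= 1.0 - level.hit_rate
    return total


# Hypothetical, order-of-magnitude latencies.
cache = Level("SRAM cache", 1.0, 0.95)
ssd = Level("SSD", 100_000.0, 1.0)  # about 100 microseconds

fits_in_ram = [cache, Level("DRAM", 80.0, 1.0), ssd]
ram_too_small = [cache, Level("DRAM", 80.0, 0.999), ssd]  # 0.1% of DRAM lookups go to the SSD

print(round(average_access_ns(fits_in_ram), 2))    # 5.0
print(round(average_access_ns(ram_too_small), 2))  # 10.0
```

In this toy setup, sending just one access in every 20,000 out to storage doubles the average access time, which mirrors the sluggishness of a system that has run out of RAM.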

In servers and data centers, DRAM plays an even more critical role. These environments handle massive volumes of requests, such as serving web pages, processing transactions, or running databases. Here, high-capacity, high-speed, and reliable DRAM modules are essential for minimizing latency, the delay before a transfer of data begins. For instance, in-memory databases like SAP HANA keep their working data in DRAM rather than reading it from disk for each query, which lets them respond far faster than disk-based systems. Similarly, cloud providers leverage vast amounts of DRAM to enable virtual machines and containers to operate efficiently for countless users.
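
Latency and bandwidth together determine how long a transfer takes: the requester waits for the first byte, then the rest streams in. This minimal model assumes a hypothetical 80 ns latency and the 25.6 GB/s theoretical peak of one DDR4-3200 module (3200 MT/s × 8 bytes), and ignores queuing, row conflicts, and other real-world effects.

```python
def fetch_time_ns(size_bytes: int, latency_ns: float, bandwidth_gb_s: float) -> float:
    """Wait for the first byte (latency), then stream the rest at full bandwidth.

    1 GB/s (decimal) is exactly 1 byte per nanosecond, so size / bandwidth is in ns.
    """
    return latency_ns + size_bytes / bandwidth_gb_s


LATENCY_NS = 80.0  # hypothetical DRAM access latency
BANDWIDTH = 25.6   # GB/s, theoretical peak of one DDR4-3200 module

small = fetch_time_ns(64, LATENCY_NS, BANDWIDTH)       # one 64-byte cache line
large = fetch_time_ns(1 << 20, LATENCY_NS, BANDWIDTH)  # a 1 MiB block

print(round(small, 1), f"{LATENCY_NS / small:.1%}")  # 82.5 97.0%  (latency share)
print(round(large, 1), f"{LATENCY_NS / large:.1%}")  # 41040.0 0.2%
```

The small fetch is dominated by latency while the large one is dominated by bandwidth, which is why latency matters most for the many small, scattered accesses typical of databases and request serving.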

Advances in artificial intelligence (AI) and machine learning further underscore DRAM’s importance. Training complex neural networks involves processing enormous matrices of data, which must be readily accessible to specialized processors like GPUs or TPUs. While these accelerators have their own high-bandwidth memory (e.g., HBM, High Bandwidth Memory, a stacked form of DRAM), they still depend on system-level DDR DRAM for handling large datasets. As AI models grow, so does the demand for faster, higher-capacity DRAM. Technologies like DDR5, LPDDR5 (for mobile devices), and newer standards push per-pin data rates above 6 Gbps (LPDDR5 is specified up to 6400 Mb/s per pin) while improving power efficiency, both key enablers for next-generation applications from autonomous vehicles to edge computing.
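
A back-of-the-envelope calculation shows why model size pushes on both DRAM capacity and bandwidth. The 7-billion-parameter model and the interface figures are illustrative assumptions; the sketch counts only weights, while training also needs memory for gradients, optimizer state, and activations, often several times more.

```python
def weight_bytes(num_params: float, bytes_per_param: int) -> float:
    """Memory needed just to hold a model's parameters."""
    return num_params * bytes_per_param


def bandwidth_gb_s(pins: int, gbps_per_pin: float) -> float:
    """Aggregate bandwidth of an interface: data pins x per-pin rate, in GB/s."""
    return pins * gbps_per_pin / 8


def seconds_to_stream(total_bytes: float, gb_s: float) -> float:
    """Time for one full pass over total_bytes at a given bandwidth."""
    return total_bytes / (gb_s * 1e9)


# Hypothetical model: 7 billion parameters in a 16-bit (2-byte) format.
weights = weight_bytes(7e9, 2)
print(weights / 1e9)  # 14.0 GB for the weights alone

ddr5_module = bandwidth_gb_s(64, 4.8)  # 64-bit DDR5-4800 module: 38.4 GB/s
hbm_stack = bandwidth_gb_s(1024, 6.4)  # 1024-bit HBM3-class stack: 819.2 GB/s
print(round(seconds_to_stream(weights, ddr5_module), 3))  # 0.365
print(round(seconds_to_stream(weights, hbm_stack), 3))    # 0.017
```

The very wide interface of a stacked HBM device, rather than a faster per-pin rate, is what accounts for most of the difference here.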

For engineers, procurement specialists, or enthusiasts seeking reliable sources for these advanced components, platforms like ICGOODFIND provide comprehensive catalogs and technical support. Such resources help navigate the complex landscape of semiconductor parts, ensuring optimal selection for specific project needs.

Conclusion

In summary, understanding what DRAM means involves recognizing it as Dynamic Random-Access Memory: a volatile, capacitor-based memory that requires periodic refreshing but offers an optimal balance of speed, density, and cost for use as main system memory. Its dynamic nature distinguishes it from static alternatives like SRAM, while its volatility contrasts with non-volatile storage media. From powering everyday devices to enabling cutting-edge AI and server applications, DRAM remains an indispensable component in modern electronics. As technology progresses toward faster data processing and greater energy efficiency, innovations in DDR generations and specialized forms like GDDR or HBM will continue to push performance boundaries. For anyone involved in technology development or procurement, staying informed about these trends is crucial; resources like ICGOODFIND can be invaluable in accessing up-to-date information and components. Ultimately, DRAM’s evolution will keep shaping how we interact with the digital world, making our devices smarter, faster, and more capable than ever before.