Understanding DRAM Command Rate: A Crucial Performance Parameter

Introduction

In the intricate world of computer hardware, where every nanosecond counts, the performance of Dynamic Random-Access Memory (DRAM) is a cornerstone of overall system speed. While most enthusiasts are familiar with metrics like frequency (e.g., DDR4-3200) and timings (CL16), there exists a subtler, yet impactful setting: the DRAM Command Rate. Often abbreviated as CR or CMD, this parameter governs how many clock cycles the memory controller allows between selecting a DRAM module and that module latching the command. It is a critical link in the chain of memory operations, influencing system responsiveness, stability, and bandwidth efficiency. For professionals overclocking high-performance systems, data center engineers optimizing server throughput, and even gamers seeking the last drop of frame-rate performance, a solid understanding of command rate is valuable. This article delves into the technicalities of DRAM command rate, its performance implications, and practical considerations for configuration.

The Core Mechanics of DRAM Command Rate

At its heart, the command rate defines the number of clock cycles the memory controller must wait after selecting a DRAM module (via the Chip Select signal) before it can issue a valid command, such as Activate, Read, or Write. This delay is necessary for the electrical signals to stabilize on the memory bus and for the targeted DRAM chips to properly “latch onto” the incoming command.

The two most common settings are 1T (1 Cycle) and 2T (2 Cycles). Occasionally, on some platforms or with specific configurations, you might encounter 3T.

- Command Rate 1T (1N): This is the tighter, more aggressive setting. The memory controller waits only one clock cycle between selecting the module and issuing the command. The primary advantage of 1T is lower latency, as every memory access sequence begins one cycle sooner. This can lead to tangibly snappier system performance in latency-sensitive applications. However, achieving stability at 1T places higher demands on the quality of the memory modules (ICs), the motherboard’s trace layout, and the strength of the integrated memory controller (IMC) within the CPU. It requires a cleaner electrical signal with less noise and reflection.

- Command Rate 2T (2N): This is the more relaxed, conservative setting. The controller introduces a two-cycle delay. The key benefit of 2T is significantly improved system stability and compatibility. The extra clock cycle provides more time for signal settling, making the system more forgiving of lower-quality RAM, longer motherboard traces (which can cause signal degradation), or a weaker IMC. It’s often the default setting for systems with multiple dual-rank modules or all four DIMM slots populated, as the electrical load on the memory channel is higher.

Choosing between 1T and 2T is a classic engineering trade-off: speed versus stability. Running at 1T can be seen as pushing the command/address bus close to its limit, while 2T is the conservative setting that leaves extra signal margin.
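To put the one-cycle difference in concrete terms, the following minimal sketch converts a command rate into nanoseconds. It assumes the usual DDR convention that the memory clock runs at half the advertised transfer rate (DDR4-3200 means a 1600 MHz clock); the function names are illustrative only.

```python
def clock_period_ns(data_rate_mts: float) -> float:
    """Return the memory clock period in nanoseconds.

    DDR memory transfers data twice per clock, so the clock frequency
    in MHz is half the advertised data rate in MT/s.
    """
    clock_mhz = data_rate_mts / 2.0
    return 1000.0 / clock_mhz


def command_rate_ns(data_rate_mts: float, cycles: int) -> float:
    """Return the time spanned by a command rate of `cycles` clocks."""
    return cycles * clock_period_ns(data_rate_mts)


# Compare 1T and 2T at a few common DDR4 data rates.
for data_rate in (2400, 3200, 3600):
    one_t = command_rate_ns(data_rate, 1)
    two_t = command_rate_ns(data_rate, 2)
    print(f"DDR4-{data_rate}: 1T = {one_t:.3f} ns, 2T = {two_t:.3f} ns, "
          f"difference = {two_t - one_t:.3f} ns")
```

At DDR4-3200 the clock period is 0.625 ns, so under this simple model moving from 2T to 1T saves 0.625 ns per command, and the saving shrinks as the memory clock rises.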

Performance Impact and Real-World Scenarios

The effect of DRAM command rate is not always captured by synthetic bandwidth tests like AIDA64’s Memory Read/Write benchmarks. These tests often measure sustained sequential transfers, where raw bandwidth (influenced heavily by frequency) is king. The true impact of command rate lies in reducing access latency for random operations.

In practical terms:

- Gaming: Many games, especially open-world titles and competitive shooters, involve rapid, unpredictable access to memory assets. A lower-latency memory subsystem facilitated by a 1T command rate can reduce frame-time inconsistencies (stuttering) and provide slightly higher average and percentile (1% low) frame rates.

- Professional Applications: Tasks like code compilation, scientific simulations, financial modeling, and database transactions involve countless random memory accesses. Shaving off even a few nanoseconds per access via a 1T command rate can compound into measurable reductions in total processing time.

- Server Environments: In servers running virtual machines or high-transaction databases, memory latency is a critical factor in overall throughput and response time to client requests. Optimizing command rate alongside other timings is part of fine-tuning server performance.

However, it’s crucial to understand that the performance delta between 1T and 2T is typically in the range of 1-5%, depending on the overall application sensitivity to memory latency. It is one piece of a larger puzzle that includes primary timings (CL-tRCD-tRP-tRAS), secondary/tertiary timings, and memory frequency.
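A rough back-of-the-envelope check shows why the gain is modest. The sketch below assumes, as a simplification, that 2T adds exactly one clock cycle to an access, and compares that cycle with a baseline latency you supply. The DDR4-3200 CL16 figures come from the example in the introduction; the function name and the choice of CAS latency as the baseline are illustrative assumptions.

```python
def extra_cycle_share(data_rate_mts: float, baseline_latency_ns: float) -> float:
    """Fraction of a baseline latency taken up by one extra command cycle."""
    clock_period_ns = 2000.0 / data_rate_mts  # clock MHz = data rate / 2
    return clock_period_ns / baseline_latency_ns


# CAS latency alone for DDR4-3200 CL16: 16 cycles * 0.625 ns = 10 ns.
cas_latency_ns = 16 * (2000.0 / 3200)

share = extra_cycle_share(3200, cas_latency_ns)
print(f"One extra cycle is {share:.2%} of the {cas_latency_ns:.1f} ns CAS latency")
```

Against CAS latency alone, one cycle works out to 6.25%. A full memory access also includes row activation and controller delays, so the end-to-end latency reported by a benchmark is larger and the share of one extra cycle shrinks accordingly. Passing your own measured latency as the baseline gives a more realistic estimate.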

Configuration Considerations and Best Practices

Configuring the DRAM command rate is done within your system’s BIOS/UEFI settings, usually under “DRAM Timing Control” or similar. Here are key considerations:

- Stability is Paramount: Always stress-test your system after changing the command rate from 2T to 1T. Use utilities like MemTest86, HCI MemTest, or OCCT’s memory test for several hours. If you encounter errors or system crashes, revert to 2T. An unstable system is worse than a slightly slower one.

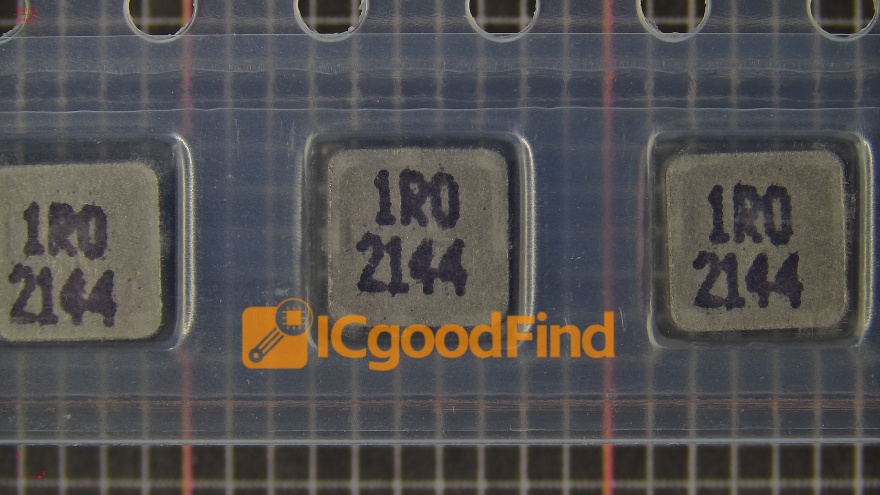

- Module Quality and Population: High-quality memory kits using premium ICs (like Samsung B-die or Micron Rev.E) are more likely to run stable at 1T. Furthermore, systems with fewer DIMMs (e.g., two slots populated) and single-rank modules will have a much easier time achieving a 1T command rate than systems with four dual-rank modules.

- Interaction with Other Timings: The command rate interacts with other advanced timings like tRFC, tFAW, and tWR. Sometimes, relaxing one secondary timing can allow you to tighten the command rate to 1T for a net performance gain.

- Platform Specifics: The capabilities of your CPU’s IMC and your motherboard’s design (layer count, trace routing) are limiting factors. Some platforms are notoriously more demanding than others when it comes to low-latency memory configurations.
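The population rule of thumb from the considerations above can be written as a small helper that suggests which command rate to try first. This is only a sketch of the article's heuristic, not a vendor rule: the function name, the inputs, and the exact thresholds are assumptions, and the result is a starting point for stress testing rather than a guarantee.

```python
def suggest_starting_command_rate(dimm_count: int, ranks_per_dimm: int) -> str:
    """Suggest a command rate to try first, based on memory population.

    Hypothetical heuristic: a heavier electrical load (four DIMMs, or
    several dual-rank DIMMs) starts at 2T; lighter loads try 1T.
    """
    if dimm_count < 1 or ranks_per_dimm < 1:
        raise ValueError("dimm_count and ranks_per_dimm must be positive")

    heavily_loaded = dimm_count >= 4 or (dimm_count >= 2 and ranks_per_dimm >= 2)
    return "2T" if heavily_loaded else "1T"


# Two single-rank DIMMs versus four dual-rank DIMMs.
print(suggest_starting_command_rate(dimm_count=2, ranks_per_dimm=1))
print(suggest_starting_command_rate(dimm_count=4, ranks_per_dimm=2))
```

Whatever the helper suggests, a 1T setting still needs the stress testing described above before it can be trusted.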

For those seeking in-depth analysis and guidance on pushing their memory subsystems to the limit—including fine-tuning parameters like command rate—specialized resources are invaluable. For instance, communities and technical deep-dives found through resources like ICGOODFIND can provide granular insights into specific memory IC behaviors, motherboard capabilities, and advanced tuning methodologies that go beyond mainstream guides.

Conclusion

DRAM Command Rate is far from a trivial setting hidden in advanced BIOS menus. It represents a fundamental timing that balances raw performance against electrical stability in your computer’s memory subsystem. While adopting a 2T command rate offers robust stability for complex configurations and is often necessary for compatibility, mastering a stable 1T configuration can unlock lower latency, contributing to smoother performance in applications sensitive to memory response times. The decision should be informed by your specific hardware configuration (module quality, number of ranks), your performance needs, and a rigorous commitment to stability testing. In the relentless pursuit of optimization—whether for a bleeding-edge gaming rig or a mission-critical server—understanding and correctly applying parameters like command rate separates adequate performance from truly refined efficiency.