The Essential Guide to DRAM and Memory: Powering the Digital World

Introduction

In the invisible engine room of every computer, smartphone, and server, a relentless, high-speed exchange of data is taking place. This critical process, which underpins every click, swipe, and computation, is powered by memory technology. At the heart of this system lies Dynamic Random-Access Memory (DRAM), the volatile, high-speed workhorse that serves as your device’s primary workspace. While “memory” is a broad term encompassing various storage technologies, DRAM holds a unique and indispensable position in the computing hierarchy. Understanding the interplay between DRAM and memory systems is fundamental to grasping how modern technology achieves its speed and efficiency. This article delves into the intricacies of DRAM, its role within the broader memory landscape, and the evolving innovations that continue to push the boundaries of performance.

The Core Architecture and Function of DRAM

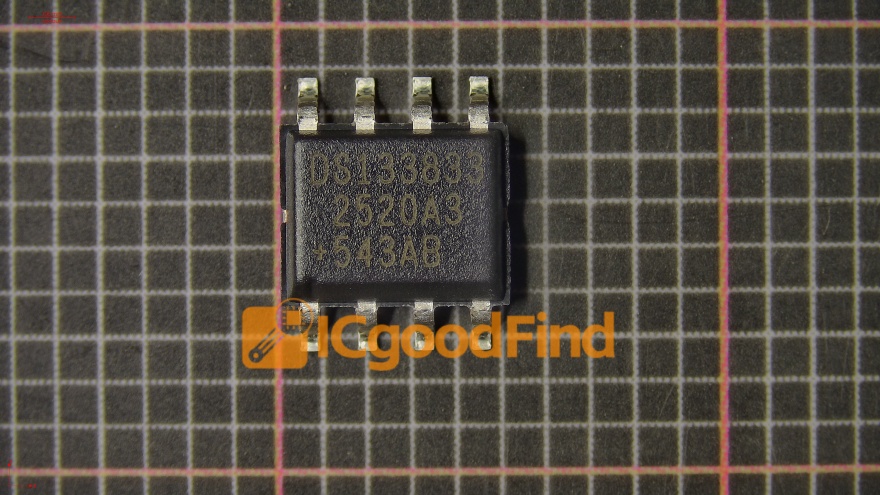

Unlike its cousin, Static RAM (SRAM), which typically uses six transistors to hold each bit, DRAM employs a simpler, more compact design. Each bit in a DRAM chip is stored as charge on a microscopic capacitor, paired with a single access transistor. The capacitor is either charged (representing a ‘1’) or discharged (representing a ‘0’). Because each cell is so small, billions of bits can be packed onto a single chip, which makes DRAM cost-effective for providing large amounts of memory.

However, this design comes with a fundamental characteristic: the capacitors leak charge over time. To prevent data loss, every row must be read and rewritten (refreshed) before its charge decays too far, typically at least once every 64 milliseconds. This “dynamic” refresh operation is the origin of the name and is what separates DRAM from SRAM, which holds its state without refresh for as long as power is applied. Both are volatile, unlike SSDs or hard drives, which retain data without power. Refresh is managed automatically by the memory controller, but it takes the memory out of service for short periods, and that overhead is one factor in DRAM’s performance profile.
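To get a feel for how much time refresh costs, the arithmetic can be sketched in a few lines of Python. Only the 64 ms window comes from the text above. The number of refresh commands per window (8192) and the duration of each refresh (350 ns) are assumed, illustrative values that vary by device and density, so treat the result as a ballpark rather than a specification.

```python
# Rough estimate of the share of time a DRAM device is busy refreshing.
# ASSUMPTIONS (not from the article): 8192 refresh commands per retention
# window and 350 ns per refresh command. Real values depend on the device.

def refresh_overhead(retention_ms: float = 64.0,
                     refresh_commands: int = 8192,
                     refresh_time_ns: float = 350.0) -> tuple[float, float]:
    """Return (ns between refresh commands, fraction of time spent refreshing)."""
    # Spread the refresh commands evenly across the retention window.
    interval_ns = retention_ms * 1e6 / refresh_commands
    # Each command blocks the device for refresh_time_ns out of every interval.
    return interval_ns, refresh_time_ns / interval_ns


interval_ns, busy_fraction = refresh_overhead()
print(f"one refresh command every {interval_ns:.1f} ns")
print(f"time spent refreshing: {busy_fraction:.2%}")
```

Under these assumptions the device is unavailable for a few percent of the time, which is why refresh shows up as a small but real performance cost.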

The operation of DRAM is a coordinated dance. When the processor needs data, it sends a request to the memory controller, which issues commands specifying the bank, row, and column of the desired data. The process involves three steps:

1. Activate: open a row by loading it into the bank’s row buffer.

2. Read or write: access the requested column within that row buffer.

3. Precharge: close the row and prepare the bank for the next activation.

The time this cycle takes, together with the data transfer rate, measured in megatransfers or gigatransfers per second (MT/s or GT/s), defines the speed of the memory module.
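The activate, read/write, precharge sequence can be illustrated with a toy model of a single bank. This is a minimal sketch, not how any real controller is implemented. The `Bank` class and its counters are hypothetical names, and the model assumes an open-page policy, where a row stays in the row buffer until a different row is requested. A controller may instead precharge after every access.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Bank:
    """Toy model of one DRAM bank with a single row buffer (open-page policy assumed)."""
    open_row: Optional[int] = None  # row currently held in the row buffer
    activates: int = 0
    precharges: int = 0
    row_hits: int = 0

    def access(self, row: int, col: int) -> str:
        """Classify an access as 'hit', 'miss', or 'conflict' and count commands."""
        # The column only selects data inside the open row, so it does not
        # change which commands are needed in this simplified model.
        if self.open_row == row:
            self.row_hits += 1          # row already open: read/write only
            return "hit"
        if self.open_row is not None:
            self.precharges += 1        # close the old row first
            outcome = "conflict"
        else:
            outcome = "miss"            # bank was idle: activate only
        self.open_row = row
        self.activates += 1             # load the new row into the row buffer
        return outcome


bank = Bank()
pattern = [(3, 0), (3, 1), (3, 2), (7, 0), (3, 0)]
print([bank.access(row, col) for row, col in pattern])
print(bank.activates, bank.precharges, bank.row_hits)
```

Accesses that stay within the open row skip the activate and precharge steps entirely, which is why access patterns with good locality see lower average latency.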

DRAM in the Broader Memory Hierarchy

Computer architecture employs a tiered memory structure to balance speed, cost, and capacity. DRAM occupies a crucial middle layer in this hierarchy.

- Above DRAM: Caches (SRAM) – Located directly on or very close to the processor chip, SRAM cache is blisteringly fast but small and expensive. It holds the most immediately needed instructions and data for the CPU. DRAM acts as the much larger pool from which the cache is filled.

- Below DRAM: Storage (SSD/HDD) – Solid-State Drives (NAND Flash) and Hard Disk Drives provide permanent, non-volatile storage with massive capacities but significantly slower access times, often thousands of times slower than DRAM for SSDs and slower still for hard drives.

DRAM’s primary role is to serve as the main system memory or RAM. It holds the operating system, application programs, and active data that the processor is currently using. When you open a document or a web browser, it is loaded from storage into DRAM for swift access by the CPU. The size of your DRAM directly impacts your system’s ability to multitask efficiently; insufficient RAM leads to constant swapping of data to slower storage, causing noticeable performance degradation.
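The cost of swapping can be made concrete with a simple weighted average. The sketch below assumes round-number latencies of 100 ns for DRAM and 100 µs for storage (a thousand times slower). These figures are illustrative assumptions, not measurements, and the model ignores operating-system overhead, so real penalties are typically worse.

```python
# Average access time when a fraction of accesses must go to storage.
# ASSUMPTIONS: 100 ns DRAM latency and 100,000 ns storage latency are
# illustrative round numbers; OS paging overhead is ignored.

def effective_access_ns(fault_rate: float,
                        dram_ns: float = 100.0,
                        storage_ns: float = 100_000.0) -> float:
    """Weighted average latency for a given fraction of accesses served from storage."""
    if not 0.0 <= fault_rate <= 1.0:
        raise ValueError("fault_rate must be between 0 and 1")
    return (1.0 - fault_rate) * dram_ns + fault_rate * storage_ns


for rate in (0.0, 0.001, 0.01):
    print(f"fault rate {rate:.1%}: {effective_access_ns(rate):.1f} ns on average")
```

With these numbers, sending just 0.1% of accesses to storage roughly doubles the average access time, which is why running out of RAM is felt so quickly.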

Furthermore, graphics processing units (GPUs) utilize their own specialized, high-bandwidth version of DRAM called Graphics DDR (GDDR). Designed with a wider data bus for massive parallel data transfer rather than ultra-low latency, GDDR is optimized for rendering high-resolution textures and frames in gaming and professional visualization.

Evolution and Future Trends in DRAM Technology

The demand for faster data processing has driven relentless innovation in DRAM technology. The journey from Synchronous DRAM (SDRAM) to today’s standards illustrates this progression.

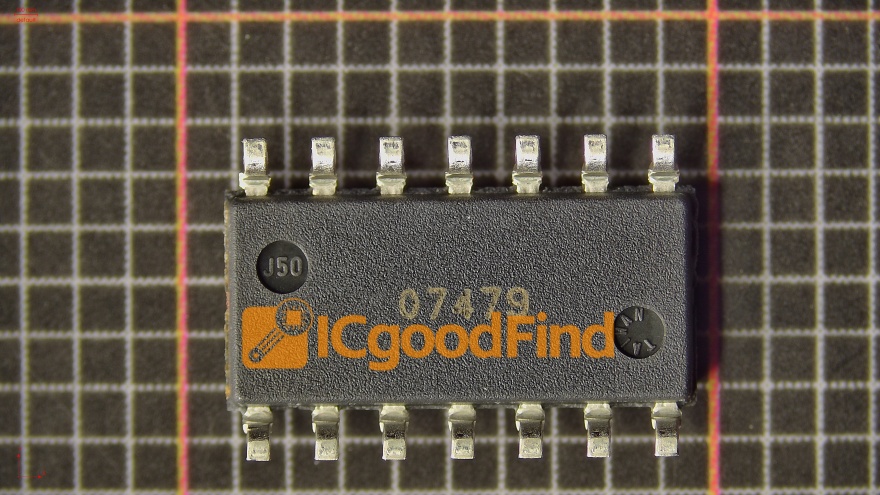

- DDR Generations: The transition from Single Data Rate (SDR) to Double Data Rate (DDR) was a watershed moment. DDR transfers data on both the rising and falling edges of the clock signal, effectively doubling bandwidth. Successive generations—DDR2, DDR3, DDR4, and now mainstream DDR5—have brought increased speeds, lower operating voltages (improving power efficiency), and greater capacities per module. DDR5, for instance, introduces features like dual 32-bit sub-channels per module and higher burst lengths for dramatically improved performance in data centers and high-end computing.

- Specialized DRAM Types: Beyond standard DDR for PCs and GDDR for graphics, other forms have emerged:

  - LPDDR (Low Power DDR): Designed for mobile devices, it sacrifices some bandwidth for drastically reduced power consumption.

  - HBM (High Bandwidth Memory): A revolutionary 3D-stacked architecture where DRAM dies are stacked vertically and connected by through-silicon vias (TSVs). HBM offers an order-of-magnitude greater bandwidth in a smaller footprint and is critical for advanced AI accelerators, high-performance computing (HPC), and premium GPUs.

- The Future Landscape: The trajectory points toward further specialization and integration.

  - Continued DDR Scaling: Development towards DDR6 is already underway, targeting even higher data rates.

  - CXL-enabled Memory: The Compute Express Link (CXL) protocol allows for more efficient sharing of memory resources between CPUs and other devices (like accelerators), potentially leading to new pooled memory architectures in data centers.

  - Material & Structural Innovations: Research into new transistor designs (like channel materials beyond silicon) and 3D stacking techniques continues to push density and efficiency limits.
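The double-data-rate idea and the effect of interface width described in the list above reduce to simple arithmetic: peak bandwidth is the transfer rate multiplied by the bytes moved per transfer. The sketch below uses hypothetical helper names. The 64-bit module width, the 2400 MHz clock, and the 1024-bit wide interface are assumed example values, and the results are theoretical peaks rather than sustained throughput.

```python
# Theoretical peak bandwidth from transfer rate and bus width.
# ASSUMPTIONS: a 64-bit module data bus and the example clock rates below
# are illustrative; sustained bandwidth in practice is lower than the peak.

def ddr_transfer_rate_mt_s(io_clock_mhz: float) -> float:
    """Double data rate: one transfer on each clock edge, so two per cycle."""
    return io_clock_mhz * 2


def peak_bandwidth_gb_s(transfer_rate_mt_s: float, bus_width_bits: int = 64) -> float:
    """Peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return transfer_rate_mt_s * 1e6 * bytes_per_transfer / 1e9


rate = ddr_transfer_rate_mt_s(2400)            # 2400 MHz clock -> 4800 MT/s
print(f"{rate:.0f} MT/s on a 64-bit bus: {peak_bandwidth_gb_s(rate):.1f} GB/s")

# A much wider interface at a lower per-pin rate, in the spirit of stacked memory.
print(f"2000 MT/s on a 1024-bit bus: {peak_bandwidth_gb_s(2000, 1024):.1f} GB/s")
```

The second call shows why stacked designs pursue width rather than clock speed: a very wide interface can outrun a narrow one even at a lower transfer rate per pin.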

Conclusion

DRAM remains an irreplaceable pillar of modern computing, providing the essential high-speed workspace that bridges the lightning-fast CPU and vast permanent storage. Its evolution from simple dynamic memory to a diverse family of technologies—from power-sipping LPDDR in our pockets to bandwidth-monster HBM in AI supercomputers—demonstrates its critical adaptability. As we move into an era dominated by artificial intelligence, big data analytics, and immersive computing, the performance and efficiency of DRAM and memory subsystems will only become more pivotal. Understanding its function within the memory hierarchy is key to appreciating not just how our devices work today, but also how future technological breakthroughs will be powered from within.