The Essential Guide to DRAM Memory: Powering Modern Computing

Introduction

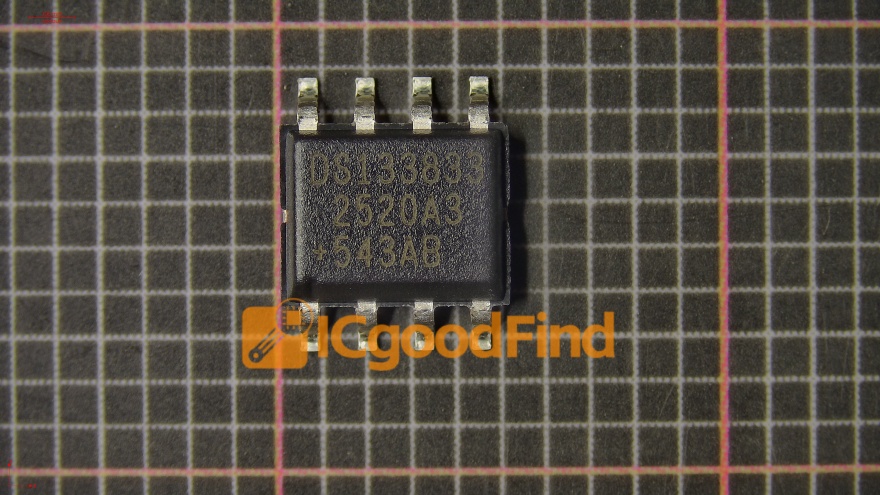

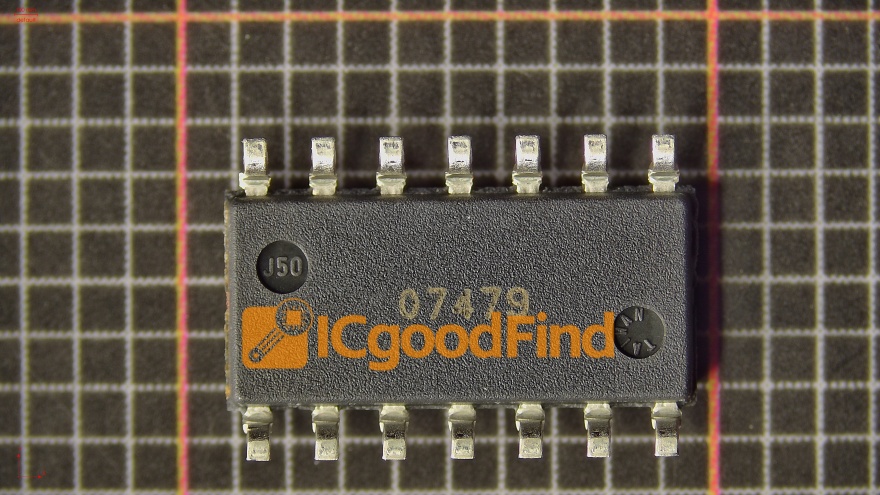

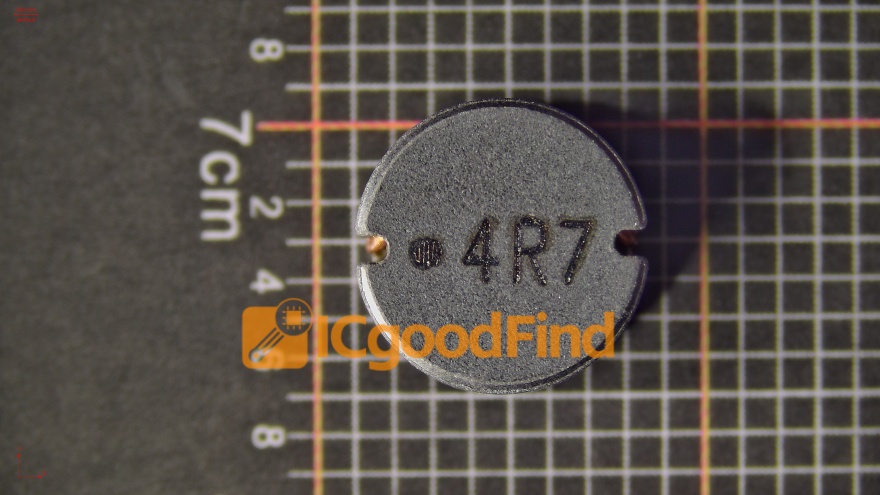

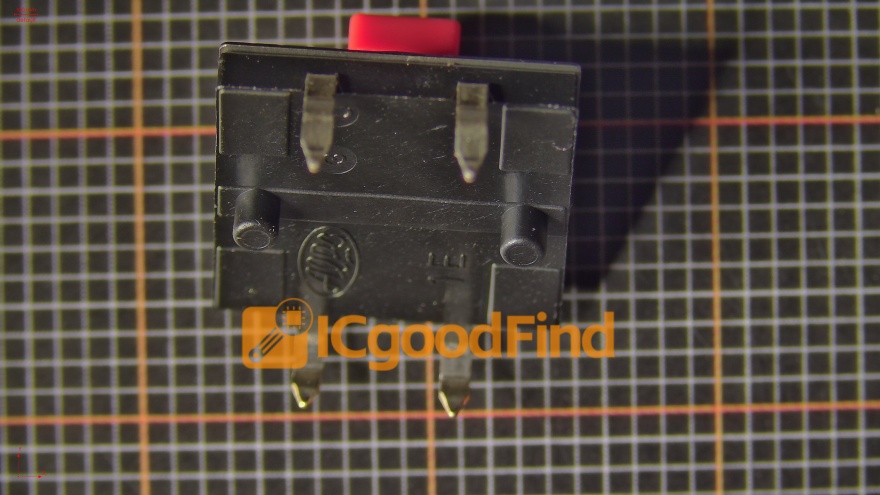

In the intricate ecosystem of computer hardware, few components are as fundamental and as fast-evolving as DRAM (Dynamic Random-Access Memory). Often simply called “RAM” by consumers, DRAM serves as the high-speed workspace where your computer’s processor reads and writes the data it needs to perform tasks in real time. From booting your operating system and launching applications to browsing complex websites and editing high-resolution video, DRAM is the silent workhorse behind responsive multitasking. Unlike storage drives (SSDs or HDDs), which hold data permanently, DRAM provides volatile, temporary storage for data in active use, acting as a critical bridge between the fast CPU and slower permanent storage. Its performance and capacity directly affect a system’s speed, efficiency, and ability to handle complex workloads. This article delves into the core technology of DRAM, explores its critical role across various applications, and examines the future trends shaping its development. For professionals seeking in-depth component analysis and sourcing insights, platforms like ICGOODFIND provide valuable resources to navigate the complex semiconductor supply chain.

Part 1: Understanding DRAM Technology - How It Works and Why It’s “Dynamic”

At its heart, DRAM is a type of semiconductor memory that stores each bit of data in a separate tiny capacitor, paired with an access transistor, within an integrated circuit. The capacitor can be either charged or discharged, representing the binary states of 1 or 0. This simple principle is complicated by one key characteristic: the capacitors leak charge over time. Therefore, to prevent data loss, each memory cell must be periodically refreshed, which is the origin of the term “Dynamic.” In practice, every row is typically rewritten once per retention window of a few tens of milliseconds (64 ms is a common figure), which means a refresh command is issued every few microseconds. Refresh is scheduled by the memory controller and is a fundamental differentiator from SRAM (Static RAM), which does not require refresh but uses more transistors per bit, making it more expensive and less dense.
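To make the cost of refresh concrete, the sketch below estimates how often refresh commands are issued and what fraction of time a device spends refreshing. The timing values (a 64 ms retention window, 8192 refresh commands per window, and a 350 ns refresh cycle) are illustrative assumptions in the range of DDR4-class parts, not figures from this article; real values come from the device datasheet.

```python
def refresh_overhead(retention_window_ms, refresh_commands, refresh_cycle_ns):
    """Return (interval between refresh commands in microseconds,
    fraction of time the device is busy refreshing)."""
    window_ns = retention_window_ms * 1e6
    interval_ns = window_ns / refresh_commands      # spacing between refresh commands
    busy_fraction = refresh_cycle_ns / interval_ns  # share of each interval spent refreshing
    return interval_ns / 1000.0, busy_fraction


# Assumed, illustrative values: 64 ms window, 8192 commands, 350 ns per refresh.
interval_us, busy = refresh_overhead(64.0, 8192, 350.0)
print(f"refresh command every {interval_us:.2f} us, "
      f"{busy:.1%} of time spent refreshing")
```

Under these assumptions the device is unavailable for a few percent of the time, which is why larger, denser chips with longer refresh cycles make refresh scheduling a real performance concern.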

The architecture of a DRAM chip is a marvel of miniaturization and organization. Billions of memory cells are arranged in grid-like arrays of rows and columns, grouped into banks. When the CPU needs data, it sends a request to the memory controller, which activates (opens) an entire row: sense amplifiers read and amplify the charges of every capacitor in that row and hold them in a row buffer, from which specific columns can then be accessed. Before a different row in the same bank can be accessed, the open row must be closed and the bank precharged. The time this activate, access, and precharge cycle takes is a key determinant of memory speed.
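The open-row behavior described above can be sketched as a toy model of a single bank. The class name, the three timing parameters, and their 15 ns values are hypothetical choices for illustration only; the model ignores refresh, write timing, and multiple banks.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Bank:
    # Timings in nanoseconds; the defaults are assumed, illustrative values.
    t_rcd: float = 15.0  # activate a row -> column access allowed
    t_cas: float = 15.0  # column access -> data available
    t_rp: float = 15.0   # precharge (close) the currently open row
    open_row: Optional[int] = None

    def read(self, row: int) -> float:
        """Return the latency of a read to `row` and update the open-row state."""
        if self.open_row == row:
            return self.t_cas                              # row hit: row already open
        if self.open_row is None:
            latency = self.t_rcd + self.t_cas              # idle bank: activate, then read
        else:
            latency = self.t_rp + self.t_rcd + self.t_cas  # conflict: close, activate, read
        self.open_row = row
        return latency


bank = Bank()
latencies = [bank.read(row) for row in (3, 3, 7, 7, 3)]
print(latencies)  # [30.0, 15.0, 45.0, 15.0, 45.0]
```

Repeated reads to the same row are cheap, while alternating between rows pays the precharge and activate cost each time, which is why access locality matters for DRAM performance.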

The relentless pursuit of higher density, higher speed, and lower power consumption has driven DRAM through numerous generations: from SDRAM (Synchronous DRAM) to DDR (Double Data Rate) and its successive iterations, DDR2, DDR3, DDR4, and now the prevailing DDR5. DDR introduced data transfers on both the rising and falling edges of the clock signal, and each later generation has roughly doubled the data transfer rate of its predecessor through deeper prefetch buffers, faster I/O clocks, and architectural changes such as bank groups. Key performance metrics include:

* Capacity: Measured in gigabytes (GB) or terabytes (TB), defining how much data can be held actively.
* Data Rate: Measured in megatransfers per second (MT/s), e.g., DDR5-6400 operates at 6400 MT/s.
* Timings (Latency): Often listed as a series of numbers (e.g., CL40-40-40-77), representing the delay in clock cycles between various commands. Lower numbers mean fewer cycles of delay, although the delay in nanoseconds also depends on the clock speed.
* Voltage: Each DDR generation typically operates at a lower voltage (DDR1: 2.5V → DDR5: ~1.1V), improving energy efficiency.
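Data rate and timings can be turned into more intuitive figures with a little arithmetic. The sketch below assumes a 64-bit (8-byte) data bus per module, which is the usual width for standard DIMMs but is not stated in the article, and uses the fact that a double-data-rate clock runs at half the transfer rate. The function names are made up for this example.

```python
def peak_bandwidth_gbs(data_rate_mts, bus_width_bits=64):
    """Theoretical peak bandwidth in GB/s (assumes a 64-bit data bus by default)."""
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mts * 1e6 * bytes_per_transfer / 1e9


def cas_latency_ns(cl_cycles, data_rate_mts):
    """Convert a CAS latency in clock cycles to nanoseconds."""
    clock_mhz = data_rate_mts / 2       # DDR: two transfers per clock cycle
    return cl_cycles / clock_mhz * 1000.0


print(f"DDR5-6400 peak: {peak_bandwidth_gbs(6400):.1f} GB/s")      # 51.2 GB/s
print(f"DDR5-6400 CL40: {cas_latency_ns(40, 6400):.2f} ns")        # 12.50 ns
print(f"DDR4-3200 CL16: {cas_latency_ns(16, 3200):.2f} ns")        # 10.00 ns
```

The comparison shows why a larger CL number on a newer generation does not necessarily mean a slower module: the cycles are shorter, so the latency in nanoseconds stays in the same range while bandwidth doubles.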

Part 2: The Critical Applications of DRAM - From Personal Devices to Data Centers

DRAM’s utility extends far beyond just making a personal computer feel snappy. It is a ubiquitous enabler of modern digital life.

1. Consumer Electronics & Personal Computing: In smartphones, tablets, and laptops, LPDRAM (Low-Power DRAM) variants like LPDDR4X and LPDDR5 are dominant. They are engineered to deliver high bandwidth for applications and graphics while minimizing power draw to extend battery life. In desktop PCs and workstations, standard DDR4/DDR5 modules allow gamers, content creators, and professionals to run memory-intensive software smoothly. When a system is short on memory, adding RAM is often one of the most cost-effective ways to revive an older machine’s performance.

2. Data Centers & Enterprise Servers: This is one of the largest and most demanding markets for DRAM. Server-class memory, often with error-correcting code (ECC), ensures data integrity in 24/7 operations. The explosion of cloud computing, big data analytics, and in-memory databases like SAP HANA has made server DRAM capacity and bandwidth paramount. Here, technologies like DDR5 with its higher densities (modules exceeding 64 GB) and improved channel architecture are critical for handling massive concurrent workloads. Furthermore, High Bandwidth Memory (HBM), a revolutionary stacked DRAM technology connected via a silicon interposer with an extremely wide bus, delivers unparalleled bandwidth for the most demanding tasks in AI accelerators and high-performance computing (HPC).

3. Specialized & Emerging Fields: The automotive industry increasingly relies on robust automotive-grade DRAM for advanced driver-assistance systems (ADAS) and infotainment, where temperature resilience and long-term reliability are non-negotiable. In networking equipment like routers and switches, high-speed buffers manage immense data flows. Most notably, the Artificial Intelligence revolution is fundamentally a memory bandwidth challenge. Training large neural networks requires constantly shuffling billions of parameters; thus, GPU accelerators leverage both vast amounts of GDDR6/GDDR6X memory (a graphics-optimized derivative) and HBM to avoid bottlenecking their immense computational power.
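A rough calculation shows why memory bandwidth, rather than raw compute, so often sets the pace for AI workloads. The sketch below computes a lower bound on the time needed to read every model parameter once from memory. All inputs are hypothetical: a 7-billion-parameter model stored at 2 bytes per parameter, an accelerator with 1000 GB/s of memory bandwidth, and, for contrast, a single 64-bit DDR5-6400 channel at its theoretical 51.2 GB/s peak.

```python
def min_sweep_time_ms(num_parameters, bytes_per_parameter, bandwidth_gbs):
    """Lower bound (ms) on reading every parameter once at the given bandwidth."""
    total_bytes = num_parameters * bytes_per_parameter
    return total_bytes / (bandwidth_gbs * 1e9) * 1000.0


# Hypothetical model: 7 billion parameters at 2 bytes each (14 GB in total).
params, width = 7e9, 2

for label, bandwidth in (("assumed 1000 GB/s accelerator memory", 1000.0),
                         ("one DDR5-6400 channel (51.2 GB/s peak)", 51.2)):
    print(f"{label}: at least {min_sweep_time_ms(params, width, bandwidth):.1f} ms per pass")
```

No amount of arithmetic throughput can push a pass below this bound, so every step that touches all parameters is limited by how quickly memory can deliver them.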

Part 3: Future Trends and Challenges in DRAM Development

The path forward for DRAM is guided by the insatiable demand for more performance per watt across all computing domains. Several key trends are shaping its future:

Continued DDR5 Adoption and the Path to DDR6: DDR5 is now mainstream, offering not just higher speeds but a dual sub-channel architecture that splits each module into two independently addressable sub-channels, improving efficiency under concurrent access. The industry is already laying the groundwork for DDR6, which is expected to bring another significant leap in data rates, potentially exceeding 12,000 MT/s initially, with further architectural refinements for efficiency.
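The effect of the sub-channel split can be checked with simple arithmetic. The figures used here (one 64-bit channel with burst length 8 for DDR4, and two 32-bit sub-channels with burst length 16 for DDR5, ECC bits excluded) are not given in the article and should be verified against the JEDEC specifications; the function name is made up for this example.

```python
def bytes_per_burst(channel_width_bits, burst_length):
    """Bytes delivered by one burst on a channel of the given data width."""
    return channel_width_bits // 8 * burst_length


ddr4_burst = bytes_per_burst(64, 8)    # assumed: one 64-bit channel, burst length 8
ddr5_burst = bytes_per_burst(32, 16)   # assumed: one 32-bit sub-channel, burst length 16

print(f"DDR4 channel burst:     {ddr4_burst} bytes")
print(f"DDR5 sub-channel burst: {ddr5_burst} bytes (two independent sub-channels per module)")
```

Under these assumptions each DDR5 sub-channel still delivers a full 64-byte cache line per burst, so a module can serve two unrelated cache-line requests at once instead of one.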

The Rise of CXL (Compute Express Link) and Memory Semantics: One of the most transformative trends is the move towards memory disaggregation and pooling via CXL. This open interconnect standard lets processors access external memory resources (such as shared DRAM pools) using ordinary load/store memory semantics, at latencies higher than locally attached DRAM but far lower than storage. CXL-enabled memory expansion could revolutionize data center economics by allowing more flexible, efficient utilization of memory resources across multiple servers, breaking away from the traditional model where RAM is physically tied to a single motherboard.
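The economic argument for pooling can be illustrated with an idealized model. In the sketch below, the server names and per-interval memory demands are invented for illustration, and the pooled case assumes all memory can be shared freely with no local minimum, which real CXL deployments would not fully achieve.

```python
# Hypothetical memory demand (GB) of three servers over three time intervals.
demand_gb = {
    "server_a": [40, 90, 30],
    "server_b": [80, 30, 40],
    "server_c": [30, 40, 100],
}

# Static provisioning: every server must hold enough DRAM for its own peak.
static_total = sum(max(series) for series in demand_gb.values())

# Idealized pooling: capacity only has to cover the peak of the combined demand.
combined = [sum(interval) for interval in zip(*demand_gb.values())]
pooled_total = max(combined)

print(f"static provisioning: {static_total} GB")   # 270 GB
print(f"idealized pool:      {pooled_total} GB")   # 170 GB
```

Because the servers in this example peak at different times, the pool needs far less total capacity than the sum of individual peaks; the saving shrinks as workloads become more correlated.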

Advanced Packaging and Integration: As scaling transistor nodes becomes harder and more expensive, advanced packaging techniques like 2.5D and 3D integration are becoming essential. HBM is the prime example of 3D-stacked DRAM. Future developments will focus on stacking logic (like memory controllers) with DRAM layers more efficiently or integrating different types of memory (e.g., DRAM with non-volatile memory) into single packages for novel functionalities.

Material Science & Structural Innovation: To keep scaling densities, manufacturers are researching new capacitor materials and transistor structures. The transition from planar capacitors to deep-trench or cylindrical capacitors was a past breakthrough; future nodes may require entirely new materials or architectural shifts to maintain reliability at atomic scales.

Navigating this complex landscape of specifications, suppliers, and emerging technologies requires expert knowledge. For engineers, procurement specialists, and industry analysts seeking detailed datasheets, market availability, or technical comparisons between different DRAM modules and technologies from various manufacturers, leveraging a specialized platform can be invaluable. In this context, ICGOODFIND serves as a targeted resource hub within the electronics component ecosystem.

Conclusion

DRAM remains an indispensable pillar of digital technology. Its evolution from a simple dynamic storage cell to today’s highly sophisticated DDR5 and HBM architectures mirrors the exponential growth in computing power itself. Understanding its operational principles (the delicate dance of charge, refresh, and rapid access) reveals why it is so central to performance. Its applications span the entire spectrum of modern tech, from enabling seamless multitasking on our phones to fueling the vast AI models that are reshaping industries. Looking ahead, challenges in scaling will be met with innovations in architecture (like CXL), packaging (like advanced 3D stacking), and materials science. As we advance into an era defined by AI-driven analytics and ubiquitous computing, the development of faster, more efficient, and intelligently managed DRAM will continue to be a critical factor in unlocking new frontiers of processing capability. Staying informed on these developments is key for anyone involved in technology design, specification, or implementation.