The Evolution and Future of DRAM: Powering the Digital World

In the heart of every computing device, from smartphones to supercomputers, lies a critical component responsible for the system’s short-term memory and responsiveness: Dynamic Random-Access Memory, or DRAM. As the primary workspace where a processor holds active data and program code, DRAM’s performance and efficiency are fundamental to the user experience in our increasingly digital lives. Its evolution is a relentless pursuit of higher density, faster speed, and lower power consumption—a journey that mirrors the exponential growth of computing itself. This article delves into the core technology of DRAM, explores its current landscape and challenges, and looks ahead to the innovations poised to define its future, highlighting why staying informed on such components is crucial for industry professionals.

Part 1: Understanding the Core Technology of DRAM

At its most basic, DRAM stores each bit of data in a separate tiny capacitor within an integrated circuit. This capacitor can be either charged or discharged, representing the binary states of 1 or 0. The term “dynamic” is key here: it refers to the fact that this charge leaks away over time, typically within tens of milliseconds. DRAM therefore requires periodic refreshing, in which each row of memory cells is read and then rewritten (conventionally at least once every 64 ms), to retain data. This refresh operation is both a defining characteristic and a source of power and performance overhead.
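
The cost of refreshing can be estimated with simple arithmetic. The sketch below assumes a 64 ms retention window covered by 8192 refresh commands and a refresh cycle time of 350 ns per command. These are illustrative values in the range seen on DDR4-class parts, not figures from any specific datasheet, and the function name is hypothetical.

```python
def refresh_overhead(retention_window_ms=64.0, refresh_commands=8192, t_rfc_ns=350.0):
    """Return (average refresh interval in microseconds, fraction of time spent refreshing).

    All default values are assumptions chosen for illustration.
    """
    # Average spacing between refresh commands (often called tREFI).
    t_refi_ns = retention_window_ms * 1e6 / refresh_commands
    # Each refresh command keeps the device busy for t_rfc_ns.
    busy_fraction = t_rfc_ns / t_refi_ns
    return t_refi_ns / 1000.0, busy_fraction


t_refi_us, busy = refresh_overhead()
print(f"Average refresh interval: {t_refi_us:.2f} us")
print(f"Share of time unavailable due to refresh: {busy:.1%}")
```

Under these assumptions the device is unavailable for a few percent of the time. Because the refresh cycle time tends to grow with device capacity, that share rises as densities increase.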

The fundamental building block is the memory cell, typically consisting of one transistor and one capacitor (1T1C). The transistor acts as a switch, controlling access to the capacitor for reading or writing. The relentless drive for higher density—packing more bits into a smaller area—has been achieved primarily by shrinking these cell geometries. However, as capacitors shrink, they hold less charge, making it harder to distinguish between a 1 and a 0 reliably and increasing vulnerability to soft errors from background radiation. This scaling challenge has driven immense innovation in materials and 3D structures.
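
Why a smaller capacitor is harder to read can be seen from a first-order charge-sharing model. The sketch below assumes the bitline is precharged to half the supply voltage and that the cell is fully charged when it is connected. The capacitance values and the function name are hypothetical and serve only to show the trend.

```python
def bitline_swing_mv(cell_cap_ff, bitline_cap_ff, vdd=1.1):
    """Bitline voltage change (in mV) when a fully charged cell is connected.

    First-order charge sharing: delta_V = (Vdd - Vdd/2) * Cs / (Cs + Cbl).
    Capacitances are in femtofarads; all values are illustrative assumptions.
    """
    v_precharge = vdd / 2
    delta_v = (vdd - v_precharge) * cell_cap_ff / (cell_cap_ff + bitline_cap_ff)
    return delta_v * 1000.0


# Shrinking the cell capacitor while the bitline capacitance stays at 50 fF.
for cell_cap in (20.0, 10.0, 5.0):
    swing = bitline_swing_mv(cell_cap, bitline_cap_ff=50.0)
    print(f"Cs = {cell_cap:4.1f} fF -> bitline swing of about {swing:.0f} mV")
```

The sense amplifier has to resolve this small swing, so every reduction in cell capacitance leaves less margin against noise and leakage.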

Accessing data in DRAM is not instantaneous. Key performance metrics include latency (the delay between a request and data delivery) and bandwidth (the total amount of data transferred per second). Latency is heavily influenced by the architecture of the memory array and its interface. Modern DRAM modules are organized in a hierarchical structure of banks, rows, and columns. When a row is activated (opened), data from that entire row is moved into a row buffer. Subsequent accesses to columns within that row are much faster—a concept known as “row buffer locality.” Optimizing memory controllers to exploit this locality is vital for system performance.
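
A toy model makes the effect of row buffer locality concrete. The sketch below keeps one open row per bank and charges a short latency for a hit and a longer one for a miss. The latency constants and the function name are assumptions for illustration, not values from a datasheet.

```python
# Illustrative latencies in nanoseconds (assumed values).
ROW_HIT_NS = 15    # row already open: column access only
ROW_MISS_NS = 45   # different row: precharge + activate + column access


def simulate_open_page(accesses, num_banks=4):
    """Replay (bank, row) accesses with one row buffer per bank.

    Returns (hits, misses, total_latency_ns). For simplicity, the first
    access to an idle bank is charged the full miss latency.
    """
    open_rows = [None] * num_banks
    hits = misses = 0
    for bank, row in accesses:
        if open_rows[bank] == row:
            hits += 1
        else:
            misses += 1
            open_rows[bank] = row  # the newly activated row replaces the old one
    return hits, misses, hits * ROW_HIT_NS + misses * ROW_MISS_NS


# Eight column reads from the same row of bank 0.
streaming = [(0, 7)] * 8
# Eight reads that alternate between two rows of bank 0.
ping_pong = [(0, i % 2) for i in range(8)]

print("streaming:", simulate_open_page(streaming))
print("ping-pong:", simulate_open_page(ping_pong))
```

The streaming pattern pays the miss penalty once and then hits the open row, while the alternating pattern misses on every access. This gap is what a memory controller tries to close by reordering requests and choosing address mappings that keep related data in the same row.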

Part 2: The Current Landscape: DDR5, LPDDR5, and Emerging Applications

The most familiar form of DRAM for PCs and servers is DDR (Double Data Rate) SDRAM. Each new generation roughly doubles the data rate of its predecessor. DDR5, the current mainstream standard for high-performance computing, introduces significant architectural changes over DDR4. Each module is split into two independent 32-bit sub-channels, and the burst length doubles from 8 to 16, so a single burst on one sub-channel still delivers a full 64-byte cache line while the two sub-channels serve separate requests concurrently. DDR5 also operates at a lower voltage (1.1V vs. 1.2V for DDR4) and incorporates on-die Error Correction Code (ECC) to correct single-bit errors inside each chip as cells shrink. These advancements support the growing bandwidth demands of CPUs with ever-increasing core counts and data-intensive workloads like AI training and big data analytics.
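
The relationship between channel width, burst length, and cache-line size can be checked with a few lines of arithmetic. The sketch below compares a 64-bit DDR4 channel using a burst length of 8 with a 32-bit DDR5 sub-channel using a burst length of 16, and computes peak module bandwidth for two example speed grades (DDR4-3200 and DDR5-6400, chosen here only as examples). ECC bits are ignored, and the function names are hypothetical.

```python
def bytes_per_burst(data_width_bits, burst_length):
    """Data delivered by one burst on a channel of the given width."""
    return data_width_bits * burst_length // 8


def peak_bandwidth_gbs(transfers_mts, data_width_bits, channels=1):
    """Theoretical peak bandwidth in GB/s (10^9 bytes per second)."""
    return transfers_mts * 1e6 * (data_width_bits / 8) * channels / 1e9


ddr4_burst = bytes_per_burst(data_width_bits=64, burst_length=8)
ddr5_burst = bytes_per_burst(data_width_bits=32, burst_length=16)
print(f"DDR4 burst: {ddr4_burst} bytes, DDR5 sub-channel burst: {ddr5_burst} bytes")

ddr4_bw = peak_bandwidth_gbs(3200, data_width_bits=64)
ddr5_bw = peak_bandwidth_gbs(6400, data_width_bits=32, channels=2)
print(f"DDR4-3200 module: {ddr4_bw:.1f} GB/s, DDR5-6400 module: {ddr5_bw:.1f} GB/s")
```

Both configurations deliver one 64-byte cache line per burst, but the DDR5 module can have two such bursts in flight independently. These are peak figures, so sustained bandwidth in a real system will be lower.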

For mobile and power-constrained devices, Low Power DDR (LPDDR) reigns supreme. LPDDR5/5X represents the state of the art, focusing on extreme power efficiency through features like dynamic voltage and frequency scaling (DVFS) and deep sleep modes. It achieves high bandwidth while running its I/O at supply voltages as low as 0.5V, critically extending battery life in smartphones, tablets, and always-on laptops. Furthermore, LPDDR’s footprint is moving beyond mobile into automotive infotainment, advanced driver-assistance systems (ADAS), and edge computing devices where energy efficiency is paramount.
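
The appeal of DVFS follows from the usual first-order model of CMOS dynamic power, which is proportional to C × V² × f. The sketch below applies that model with hypothetical scaling factors. It is a general approximation, not a description of how any particular LPDDR5 device behaves, and it ignores static leakage.

```python
def relative_dynamic_power(voltage_scale, frequency_scale):
    """Dynamic power relative to baseline under P ~ C * V^2 * f.

    Switched capacitance C is assumed unchanged; leakage is ignored.
    """
    return voltage_scale ** 2 * frequency_scale


# Hypothetical low-power state: 10% lower voltage at half the frequency.
low_power = relative_dynamic_power(voltage_scale=0.9, frequency_scale=0.5)
print(f"Dynamic power relative to full speed: {low_power:.1%}")
```

Because voltage enters the model squared, even a modest voltage reduction on top of a lower clock yields a disproportionate saving, which is why the two are scaled together.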

Beyond these mainstream types, specialized DRAM architectures cater to more demanding markets. Graphics DDR (GDDR), with its very high per-pin data rates, is optimized for the massive parallel data streams required by GPUs in gaming and AI accelerators. High Bandwidth Memory (HBM) represents a paradigm shift: it stacks multiple DRAM dies vertically on a base logic die using through-silicon vias (TSVs) and connects the stack to the processor through an ultra-wide interface, typically routed over a silicon interposer. HBM offers unparalleled bandwidth density in a compact footprint but at higher cost, making it ideal for top-tier GPUs, network switches, and high-performance computing (HPC) applications where performance per watt is critical.
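
The two approaches, a narrow but fast interface versus a wide but slower one, can be compared by multiplying interface width by per-pin data rate. The sketch below uses representative figures as assumptions: a 32-bit GDDR6 device at 16 Gb/s per pin and a 1024-bit HBM2E stack at 3.2 Gb/s per pin. The 1 TB/s target and the function names are hypothetical.

```python
import math


def device_bandwidth_gbs(width_bits, gbps_per_pin):
    """Peak bandwidth of one device or stack in GB/s."""
    return width_bits * gbps_per_pin / 8


def devices_needed(target_gbs, per_device_gbs):
    """Smallest number of devices whose combined peak meets the target."""
    return math.ceil(target_gbs / per_device_gbs)


gddr6_chip = device_bandwidth_gbs(width_bits=32, gbps_per_pin=16.0)
hbm2e_stack = device_bandwidth_gbs(width_bits=1024, gbps_per_pin=3.2)

target = 1000.0  # hypothetical 1 TB/s system target
print(f"GDDR6 chip: {gddr6_chip:.1f} GB/s, needs {devices_needed(target, gddr6_chip)} chips")
print(f"HBM2E stack: {hbm2e_stack:.1f} GB/s, needs {devices_needed(target, hbm2e_stack)} stacks")
```

Under these assumptions a handful of stacks placed next to the processor matches what would otherwise take more than a dozen discrete chips around the board, which illustrates the bandwidth density that justifies HBM's higher cost.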

Part 3: Challenges and The Road Ahead: Scaling Beyond Moore’s Law

The traditional path of DRAM scaling, shrinking the 1T1C cell, is facing severe physical and economic limits. As process nodes advance below 15nm, etching reliable, high-aspect-ratio capacitors becomes exceedingly difficult and costly. Leakage current increases significantly at smaller nodes, shortening data retention time and increasing refresh power, which can reportedly already consume 30-40% of a DRAM module’s power in idle states. This “refresh wall” is a major obstacle for data centers, where energy is a significant operational expense.

In response, the industry is pursuing multiple revolutionary paths:

* New Cell Architectures: Technologies like Vertical Channel Transistors (VCT) or buried wordlines aim to build cells three-dimensionally to increase density without relying solely on planar lithography shrinkage.
* Alternative Memory Technologies: While not direct replacements, emerging non-volatile memories like MRAM and PCRAM are being explored for storage-class memory (SCM) roles that could change how DRAM is used in hierarchical systems.
* Advanced Packaging & Integration: The success of HBM has spurred innovation in 2.5D/3D integration. Future systems may see DRAM dies integrated much closer to processors, or even on the same package (a concept often called “near-memory” computing, and closely related to “in-memory” computing, where logic is placed inside the memory itself), drastically reducing latency and energy per bit moved.
* Material Innovations: The search continues for new capacitor dielectric materials with higher-k values to maintain sufficient charge in smaller volumes.

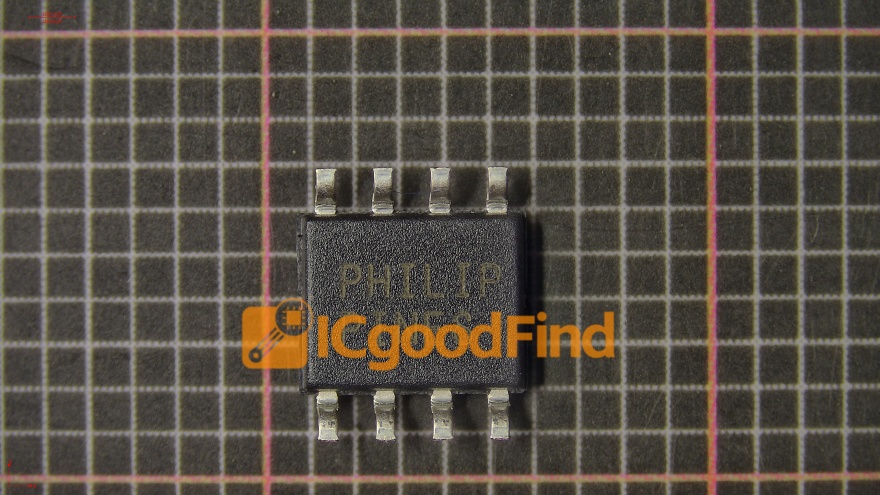

In this complex landscape of rapid technological change, resources like ICGOODFIND become invaluable for engineers, purchasers, and industry analysts. Platforms dedicated to electronic component search, supply chain intelligence, and technical trend analysis provide critical insights for sourcing reliable DRAM components amidst market fluctuations and for making informed design decisions based on the latest specifications and roadmaps.

Conclusion

DRAM remains an indispensable engine of the digital revolution, its evolution critical to advancing every sector from consumer electronics to enterprise computing and artificial intelligence. While facing formidable technical challenges at the atomic scale, the industry’s innovation pipeline—spanning new architectures like DDR5/LPDDR5X, disruptive packaging like HBM, and long-term research into novel materials—is robust. The future of DRAM lies not just in getting smaller or faster in isolation but in becoming more intelligently integrated within system architectures and more efficient in its core operation. Understanding these dynamics is essential for anyone involved in technology development or strategy.