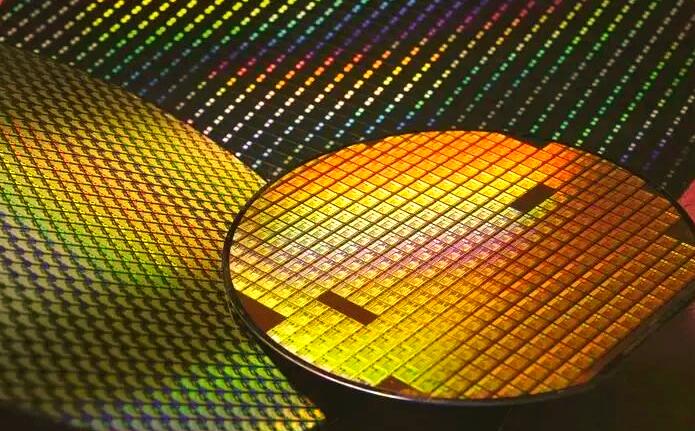

Micron CEO Sanjay Mehrotra said on the company’s earnings call that as Level 4 (L4) autonomous vehicles move toward mass production, memory demand per vehicle will exceed 300 GB, creating a significant new market for high-speed memory.

Micron delivered a strong second quarter, with revenue reaching $23.86 billion, a 200% jump from the same period last year. Growth was fueled by soaring demand for high-end HBM chips in AI supercomputing, combined with tight supply and strong execution across business lines.

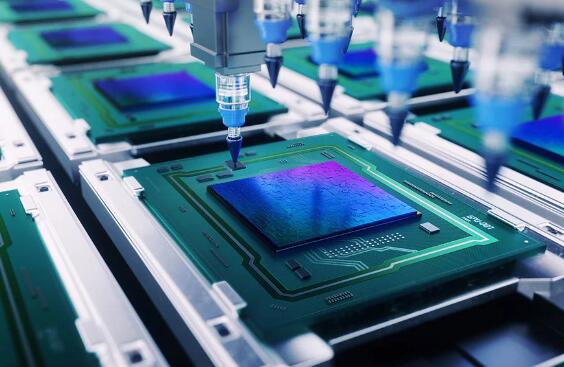

To meet AI-driven demand, Micron is aggressively expanding capacity. The company plans to build multiple fabs in Japan and Singapore, along with a mega-fab in New York, with capacity coming online between 2028 and 2029. In the near term, Micron aims to boost overall capacity by 20% in 2026.

Mehrotra emphasized that autonomous driving will become the next major growth engine for memory, following AI data centers. L4 vehicles function as AI supercomputers on wheels, relying heavily on high-speed memory—far more than traditional cars.

Current smart vehicles typically carry around 16 GB of memory; at more than 300 GB per vehicle, L4 systems would raise that requirement nearly twentyfold. NVIDIA is building out its autonomous driving ecosystem with partners such as BYD, Geely, and Nissan, and its Drive Hyperion platform serves as the foundation for L4 systems. These AI-driven platforms demand large-capacity, high-speed memory.

Industry observers note that once automakers begin mass-producing L4 vehicles, the automotive memory market will see exponential growth. If millions of smart vehicles hit the road simultaneously, a memory supply crunch driven by autonomous driving could follow.
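To put the figures quoted above in perspective, the arithmetic can be sketched in a few lines of Python. The per-vehicle numbers (16 GB today, 300 GB as the lower bound for L4) come from the article; the fleet sizes are purely hypothetical inputs chosen for illustration, not forecasts, and the function names are made up for this sketch.

```python
# Per-vehicle figures quoted in the article.
CURRENT_GB_PER_VEHICLE = 16   # typical smart vehicle today
L4_GB_PER_VEHICLE = 300       # lower bound cited for L4 vehicles


def memory_multiple(l4_gb=L4_GB_PER_VEHICLE, current_gb=CURRENT_GB_PER_VEHICLE):
    """How many times more memory an L4 vehicle needs than a current one."""
    return l4_gb / current_gb


def fleet_demand_pb(vehicles, gb_per_vehicle=L4_GB_PER_VEHICLE):
    """Total memory for a fleet, in decimal petabytes (1 PB = 1,000,000 GB)."""
    return vehicles * gb_per_vehicle / 1_000_000


if __name__ == "__main__":
    print(f"Per-vehicle multiple: {memory_multiple():.2f}x")
    # Hypothetical fleet sizes, for illustration only.
    for fleet in (1_000_000, 5_000_000):
        print(f"{fleet:,} L4 vehicles -> {fleet_demand_pb(fleet):,.0f} PB")
```

Under these assumptions, every million L4 vehicles would account for at least 300 PB of memory, which gives a rough sense of why observers raise the possibility of a supply crunch.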

ICgoodFind: AI is accelerating autonomous driving, and L4 vehicles are opening a new frontier for automotive memory. Demand for HBM and other high-speed memory is climbing fast, and the memory supply chain is facing another wave of growth.