DRAM-less SSDs: Unveiling the Truth Behind Budget-Friendly Storage

Introduction

In the ever-evolving landscape of data storage, Solid State Drives (SSDs) have become the undisputed standard for speed and responsiveness. As consumers navigate the market, a specific term frequently surfaces, often shrouded in confusion: DRAM-less. This designation has become a key differentiator, separating budget-friendly options from premium-performance drives. But what does a DRAM-less SSD truly mean for your everyday computing, gaming, or professional workload? Is it a clever cost-saving innovation or a significant performance compromise? This article delves deep into the architecture of SSDs, demystifies the role of DRAM, and provides a clear-eyed analysis of when a DRAM-less drive might be the perfect fit—and when it’s best avoided. For those seeking to make truly informed decisions on components and technology deep-dives, platforms like ICGOODFIND offer curated insights and comparisons that cut through the marketing noise.

The Core Architecture: Understanding DRAM’s Role in SSDs

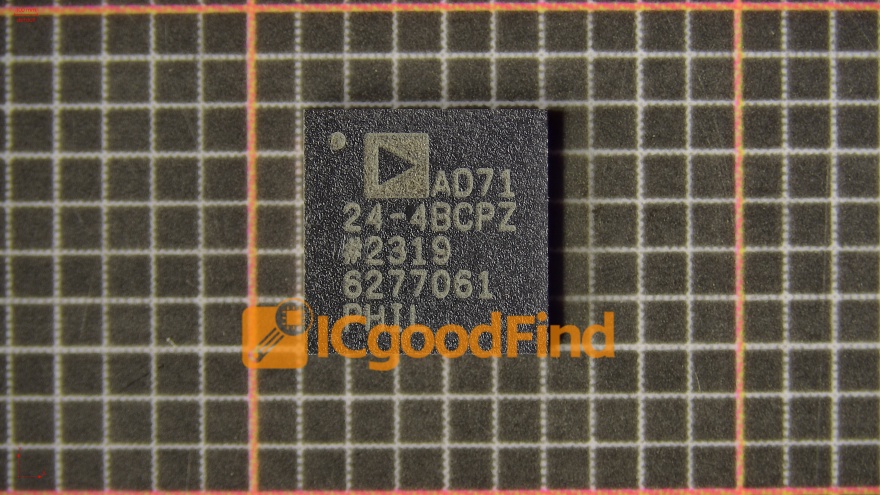

To comprehend the impact of a DRAM-less design, one must first understand the traditional SSD architecture. A conventional SSD comprises three primary components: the NAND flash memory (where data is permanently stored), the controller (the brain that manages operations), and a DRAM cache.

The DRAM cache is a small, high-speed volatile memory chip physically mounted on the SSD’s printed circuit board. Its primary function is to hold the mapping table of the Flash Translation Layer (FTL). This map is essentially an address book that translates the logical addresses used by the operating system into the physical locations of the data on the NAND flash cells. Because NAND flash cannot be overwritten in place and must be erased in large blocks before new data is written, the controller constantly relocates data in the background through two related processes: garbage collection, which reclaims blocks that hold stale data, and wear leveling, which spreads writes evenly across the cells. The FTL map keeps track of these movements in real time.
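The address-book idea can be illustrated with a deliberately simplified sketch. The `ToyFTL` class and its method names are hypothetical, invented for this illustration, and do not reflect any vendor's firmware. It only shows how an overwrite lands on a fresh physical page while the map is updated and the old copy is left stale for later garbage collection.

```python
class ToyFTL:
    """Toy flash translation layer: maps logical pages to physical pages."""

    def __init__(self, num_physical_pages):
        self.l2p = {}                               # logical page -> physical page
        self.valid = [False] * num_physical_pages   # which physical pages hold live data
        self.next_free = 0                          # next never-written physical page

    def write(self, logical_page):
        """Write out of place: use a fresh page and mark the old copy stale."""
        if self.next_free >= len(self.valid):
            raise RuntimeError("no free pages; a real drive would garbage-collect here")
        old = self.l2p.get(logical_page)
        if old is not None:
            self.valid[old] = False                 # the old copy becomes garbage
        new = self.next_free
        self.next_free += 1
        self.valid[new] = True
        self.l2p[logical_page] = new                # the map now points at the new copy
        return new

    def read(self, logical_page):
        """Look up where the logical page currently lives."""
        return self.l2p[logical_page]

    def stale_pages(self):
        """Count pages that were written but no longer hold live data."""
        return sum(1 for p in range(self.next_free) if not self.valid[p])


ftl = ToyFTL(num_physical_pages=8)
ftl.write(0)
ftl.write(1)
ftl.write(0)   # overwriting logical page 0 moves it to a new physical page
print(ftl.read(0), ftl.read(1), ftl.stale_pages())   # prints: 2 1 1
```

Every read and every write touches the map, which is why the speed of the memory holding it matters so much.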

When your system requests data, the SSD controller can check this ultra-fast DRAM-based map almost instantly to locate the data, dramatically reducing latency. For write operations, the DRAM cache can also buffer incoming data, allowing the system to acknowledge the write command quickly before the data is fully committed to the slower NAND flash. Therefore, the presence of dedicated DRAM significantly accelerates both read and write operations, especially under heavy, random workloads where accessing the FTL map is frequent and critical.

The DRAM-less Design: How It Works and Its Performance Implications

A DRAM-less SSD eliminates this dedicated physical DRAM chip. To manage the essential FTL map without it, engineers have devised alternative strategies, primarily leveraging system resources.

The most common solution is Host Memory Buffer (HMB) technology, defined in the NVMe protocol. An HMB-enabled DRAM-less SSD borrows a small portion (typically tens to a hundred megabytes) of your system’s main RAM (DDR4/DDR5) to store its FTL map. This approach allows the SSD controller to access the map via the PCIe bus, which is still faster than reading it directly from NAND. However, HMB performance is intrinsically tied to your system’s available RAM and bus latency, and it requires a compatible NVMe driver and operating system support (Windows 10/11, Linux).
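A rough back-of-the-envelope calculation helps put the size of that borrowed buffer in context. The figures below are assumptions chosen for illustration: a mapping granularity of 4 KiB and 4 bytes per map entry, which is a common rule of thumb rather than a property of any particular drive. The function names are likewise hypothetical.

```python
KIB = 1024
MIB = 1024 ** 2
GIB = 1024 ** 3
TIB = 1024 ** 4

PAGE_SIZE = 4 * KIB   # assumed mapping granularity (one entry per 4 KiB of data)
ENTRY_SIZE = 4        # assumed bytes per map entry


def full_map_bytes(capacity_bytes):
    """Size of a flat map that covers the whole drive."""
    return capacity_bytes // PAGE_SIZE * ENTRY_SIZE


def span_covered_bytes(buffer_bytes):
    """How much of the drive a buffer of map entries can describe at once."""
    return buffer_bytes // ENTRY_SIZE * PAGE_SIZE


print(full_map_bytes(1 * TIB) // MIB)        # prints: 1024 (MiB of map for a 1 TiB drive)
print(span_covered_bytes(64 * MIB) // GIB)   # prints: 64 (GiB described by a 64 MiB buffer)
```

Under these assumptions, a 64 MiB buffer holds only a fraction of a 1 TiB drive's map at any moment, so the controller has to treat it as a cache of the most active map entries rather than a complete copy.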

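Another method keeps the full FTL map in the SSD’s own NAND flash and caches only the most recently used portion in the controller’s built-in SRAM (Static RAM). SRAM is faster than DRAM but far smaller, so only a fraction of the map fits at any one time; whenever the needed entry is not cached, the controller must first fetch it from the much slower NAND.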
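When only part of the FTL map fits in fast memory, whether that is controller SRAM or a host memory buffer, the access pattern decides how often the controller finds the entry it needs. The simulation below is a minimal sketch under stated assumptions: map entries are grouped into segments of 1024 pages, the fast memory holds 16 segments, and the eviction policy is least-recently-used. All of these values and names are hypothetical; real controllers use their own, usually undisclosed, policies.

```python
import random
from collections import OrderedDict

PAGES_PER_SEGMENT = 1024   # assumed: one cached map segment describes 1024 pages
CACHE_SEGMENTS = 16        # assumed: fast memory holds 16 segments at a time


def map_hit_rate(pages, cache_segments=CACHE_SEGMENTS):
    """Fraction of accesses whose map segment is already in the LRU cache."""
    cache = OrderedDict()
    hits = 0
    for page in pages:
        segment = page // PAGES_PER_SEGMENT
        if segment in cache:
            hits += 1
            cache.move_to_end(segment)       # mark as most recently used
        else:
            cache[segment] = True            # a miss would cost a slow map fetch
            if len(cache) > cache_segments:
                cache.popitem(last=False)    # evict the least recently used segment
    return hits / len(pages)


random.seed(0)
sequential = list(range(100_000))
scattered = [random.randrange(1_000_000) for _ in range(100_000)]

print(f"sequential hit rate: {map_hit_rate(sequential):.3f}")
print(f"random hit rate:     {map_hit_rate(scattered):.3f}")
```

In this toy model, sequential access misses only once per segment, while accesses scattered across a large span miss on nearly every request, and each miss stands for an extra trip to slower memory before the real read or write can begin.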

The performance implications are tangible:
* Sequential Speeds: For large, sequential file transfers (like copying a movie), DRAM-less SSDs can often perform nearly as well as their DRAM-equipped counterparts, as these tasks are less dependent on constant FTL map access.
* Random Access & Heavy Workloads: This is where the gap widens. Under tasks involving many small, random read/write operations, such as booting an OS, launching applications, multitasking with many browser tabs, or working with large databases, DRAM-less SSDs can exhibit significantly higher latency and lower Input/Output Operations Per Second (IOPS). This may translate to perceptible slowdowns when the drive is under pressure.
* Faster Write Cache Exhaustion: Most consumer SSDs use a portion of their NAND as a fast pseudo-SLC cache for writes. Once this cache fills up, write speeds plummet to “steady-state” levels. DRAM-less drives often have smaller or less efficient write caches, meaning speed drops can occur sooner and more abruptly during large, sustained file transfers.
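The effect of write cache size on a long transfer can be estimated with simple arithmetic. The sketch below uses made-up numbers (cache sizes and speeds are purely illustrative, not measurements of any drive) and assumes the cache does not drain or refill during the transfer.

```python
def transfer_seconds(file_gb, cache_gb, cached_mb_s, steady_mb_s):
    """Estimate write time: fast until the cache is full, steady-state afterwards."""
    cached_part = min(file_gb, cache_gb)     # portion absorbed by the fast cache
    direct_part = file_gb - cached_part      # remainder written at steady-state speed
    return cached_part * 1000 / cached_mb_s + direct_part * 1000 / steady_mb_s


# Hypothetical drives writing a 100 GB file at 2000 MB/s cached, 400 MB/s steady-state.
small_cache = transfer_seconds(100, cache_gb=20, cached_mb_s=2000, steady_mb_s=400)
large_cache = transfer_seconds(100, cache_gb=60, cached_mb_s=2000, steady_mb_s=400)
print(small_cache, large_cache)   # prints: 210.0 130.0
```

With identical headline speeds, the hypothetical drive with the smaller cache takes noticeably longer, because most of the file is written at the steady-state rate.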

Making the Choice: Ideal Use Cases and Considerations

The decision for or against a DRAM-less SSD hinges on application, budget, and system configuration.

Choose a DRAM-less SSD if:
* Your Budget is Strictly Limited: They are consistently cheaper to manufacture.
* Your Use Case is Light and Predictable: For secondary storage drives (game libraries, document archives), basic office PCs, lightweight laptops, or media consumption devices where sustained random performance is less critical.
* You Are Upgrading from an HDD: Any DRAM-less SSD will provide a monumental speed boost over a traditional hard drive.
* Your System Supports HMB Effectively: You have a modern PC with sufficient RAM to spare for HMB allocation.

Avoid a DRAM-less SSD for:
* Primary/Boot Drives in Performance-Sensitive Systems: Where responsiveness is paramount.
* High-Performance Gaming: Modern games with intense texture streaming benefit from faster random reads.
* Content Creation & Professional Workloads: Tasks like video editing, 3D rendering, software compilation, or running virtual machines demand consistent high IOPS.
* Heavy Multitasking and Database Operations: Many concurrent small reads and writes keep the drive under sustained random load.

It’s crucial to research specific models. Some modern DRAM-less controllers with advanced HMB implementation and quality TLC/QLC NAND can deliver surprisingly competent performance that blurs the line for average users. For navigating these nuanced comparisons and finding reliable hardware insights from trusted sources, turning to expert aggregators like ICGOODFIND can be invaluable in identifying which DRAM-less models punch above their weight.

Conclusion

DRAM-less SSDs represent a pivotal engineering trade-off: sacrificing peak performance for cost reduction. They are not inherently “bad”; rather, they are purpose-built for specific segments of the market. By removing the dedicated DRAM chip, manufacturers can deliver the core benefits of solid-state storage—shock resistance, silence, and decent sequential speeds—at accessible price points that accelerate the demise of mechanical hard drives. However, for users whose workflows depend on consistent low latency and high random I/O performance, investing in a DRAM-equipped SSD remains the prudent choice.

Ultimately, being an informed consumer means understanding this architectural distinction. It empowers you to align your purchase with your actual needs, ensuring you neither overspend on unnecessary performance nor underestimate your system’s requirements. In this complex landscape of specifications and claims, resources dedicated to clear tech analysis serve as essential guides for optimal decision-making.