The Future is Spoken: How MCU Voice Recognition is Revolutionizing Human-Device Interaction

Introduction

In an era where convenience and seamless interaction are paramount, the way we communicate with our devices is undergoing a fundamental transformation. The humble button and touchscreen are increasingly being supplemented, and sometimes replaced, by the most natural interface of all: the human voice. At the heart of this shift is MCU-based voice recognition. Rather than depending on a cloud-connected smart assistant, this technology runs speech recognition on the device's own microcontroller. The result is fast, private, and efficient voice control for everything from home appliances to industrial tools. This article explores how MCU voice recognition works, what advantages it offers, where it is being applied, and why it represents a critical step towards a more intuitive and interconnected future.

The Core Technology: How MCU Voice Recognition Works

Unlike cloud-based recognition, which sends audio data to remote servers for processing, MCU (Microcontroller Unit) voice recognition operates locally on the device. Three technical advances make this practical.

First is the evolution of low-power, high-performance microcontrollers. Modern MCUs, often built on Arm Cortex-M cores, now offer enough compute (commonly quoted in DMIPS) and memory to run algorithms that were once the domain of PCs or smartphones. Many also integrate hardware acceleration for the two workloads that dominate a voice pipeline: DSP instructions (such as the multiply-accumulate and SIMD instructions of the Cortex-M4 and Cortex-M7) for audio preprocessing, and, on newer parts, neural processing units (NPUs) such as Arm's Ethos-U series for neural network inference. Offloading these workloads lets a small chip process audio continuously within a battery-friendly power budget.
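
To make the DSP point concrete, here is a minimal sketch of the kind of kernel those instructions accelerate: a dot product over Q15 fixed-point samples, the core operation of FIR filtering and feature extraction. It is plain portable C and assumes 16-bit Q15 inputs. On a Cortex-M4 or M7 a library such as CMSIS-DSP provides optimized equivalents that use dual 16-bit multiply-accumulate instructions; the name `q15_dot` here is illustrative, not a library API.

```c
#include <stddef.h>
#include <stdint.h>

/* Clamp a rescaled accumulator to the Q15 range. */
static int16_t q15_saturate(int64_t v) {
    if (v > INT16_MAX) return INT16_MAX;
    if (v < INT16_MIN) return INT16_MIN;
    return (int16_t)v;
}

/* Hypothetical helper: dot product of two Q15 vectors, result in Q15.
 * Each Q15 x Q15 product is Q30; products are summed in a 64-bit
 * accumulator, rounded, and shifted right by 15 to return to Q15.
 * Assumes arithmetic right shift of negative values (true for GCC,
 * Clang, and Arm compilers). */
int16_t q15_dot(const int16_t *a, const int16_t *b, size_t n) {
    int64_t acc = 0;
    for (size_t i = 0; i < n; ++i) {
        acc += (int32_t)a[i] * (int32_t)b[i]; /* fits in int32: |p| <= 2^30 */
    }
    acc += (int64_t)1 << 14; /* round to nearest before the shift */
    return q15_saturate(acc >> 15);
}
```

For example, the vector {0.5, 0.5} in Q15 is {16384, 16384}; its dot product with itself is 0.5, returned as 16384. Saturating instead of wrapping matters in audio, where overflow would otherwise turn a loud sample into a sign-flipped click.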

The second pillar is the advancement of on-device speech recognition algorithms. The simplest form is keyword spotting (KWS), in which the device listens continuously for a specific “wake word” such as “Hey Siri.” Modern MCU solutions go further and recognize a fixed vocabulary of commands, often dozens to a few hundred phrases, entirely offline. This is enabled by compact acoustic and language models that are carefully optimized (pruned and quantized) to fit within an MCU's limited flash and RAM while maintaining high accuracy. End-to-end neural networks, which map audio features directly to command outputs, simplify the pipeline further and reduce resource consumption.
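
Quantization is a large part of why these models fit. The sketch below shows one common scheme, symmetric per-tensor int8 quantization, assuming float32 weights as input: each weight is stored as one signed byte plus a single shared scale, so weight storage shrinks to a quarter of its float32 size. The function names are hypothetical; production toolchains such as TensorFlow Lite for Microcontrollers apply comparable (often per-channel) schemes during model conversion.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: symmetric int8 quantization, q = round(w / scale),
 * with scale chosen so the largest |w| maps to 127. Returns the scale
 * needed to dequantize (w is approximately q * scale). */
float quantize_int8(const float *w, int8_t *q, size_t n) {
    float max_abs = 0.0f;
    for (size_t i = 0; i < n; ++i) {
        float a = w[i] < 0.0f ? -w[i] : w[i];
        if (a > max_abs) max_abs = a;
    }
    float scale = (max_abs > 0.0f) ? max_abs / 127.0f : 1.0f;
    for (size_t i = 0; i < n; ++i) {
        float x = w[i] / scale;
        /* Round half away from zero, then clamp to the symmetric range. */
        long r = (long)(x >= 0.0f ? x + 0.5f : x - 0.5f);
        if (r > 127) r = 127;
        if (r < -127) r = -127;
        q[i] = (int8_t)r;
    }
    return scale;
}

/* Recover an approximate float weight from its int8 code. */
float dequantize_int8(int8_t q, float scale) {
    return (float)q * scale;
}
```

With weights {1.0, -0.2, 0.0}, the scale is 1/127 and the codes are {127, -25, 0}; dequantizing -25 gives about -0.197, an error smaller than half a quantization step.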

Finally, robust front-end audio processing is critical. An MCU-based system must cope with real-world noise, echo, and varying speaker distance. Noise suppression, acoustic echo cancellation (AEC), and, on devices with multi-microphone arrays, beamforming are now implemented directly on the MCU. Together they clean up the voice signal before it reaches the recognition engine, which significantly improves reliability in noisy environments like kitchens or factories.
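
Beamforming is the easiest of these stages to sketch. Below is a minimal delay-and-sum beamformer for two microphones, assuming the steering delay has already been converted to a whole number of samples. In practice that delay follows from the microphone spacing d, the arrival angle θ, and the speed of sound c as d·sinθ/c, and real systems often use fractional or frequency-domain delays. The function name is hypothetical.

```c
#include <stddef.h>

/* Hypothetical two-microphone delay-and-sum beamformer.
 * For sound from the steered direction, mic1 lags mic0 by `delay`
 * samples, so mic1 is advanced by `delay` before averaging. Speech from
 * that direction adds in phase; sound from other directions is
 * misaligned and partially cancels. Samples past the end of mic1 are
 * treated as zero. */
void delay_and_sum(const float *mic0, const float *mic1, float *out,
                   size_t n, size_t delay) {
    for (size_t i = 0; i < n; ++i) {
        float aligned = (i + delay < n) ? mic1[i + delay] : 0.0f;
        out[i] = 0.5f * (mic0[i] + aligned);
    }
}
```

If an impulse reaches mic0 at sample 1 and mic1 at sample 3, steering with a delay of 2 lines the two copies up and the output keeps the impulse at full amplitude at sample 1.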

Advantages Over Cloud-Based Alternatives

The shift towards local processing via MCU Voice Recognition offers compelling benefits that address core limitations of cloud-centric models.

The most significant advantage is minimal latency. Because audio never has to make a round trip to a distant data center, the only remaining delay is the time the device itself needs to buffer and process the audio, so responses feel immediate. This real-time feedback is crucial for applications where delay is unacceptable, such as in-car controls, industrial safety commands, or interactive toys.
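
The latency claim can be checked with simple arithmetic. A streaming recognizer consumes audio in fixed hops, and it keeps pace with live speech only if the work for each hop finishes before the next hop arrives. The sketch below computes that budget; the struct, its field names, and the numbers in the usage note are all hypothetical, and a real cycle count would come from profiling on the target MCU.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical streaming configuration; no values come from a real product. */
typedef struct {
    uint32_t sample_rate_hz;  /* audio sample rate, e.g. 16000 */
    uint32_t hop_samples;     /* new samples consumed per inference step */
    uint32_t cpu_hz;          /* MCU core clock */
    uint32_t cycles_per_step; /* profiled cycles for features + inference */
} kws_budget;

/* Time between inference steps, in microseconds. */
uint32_t hop_us(const kws_budget *b) {
    return (uint32_t)((uint64_t)b->hop_samples * 1000000u / b->sample_rate_hz);
}

/* Processing time per step, in microseconds. */
uint32_t compute_us(const kws_budget *b) {
    return (uint32_t)((uint64_t)b->cycles_per_step * 1000000u / b->cpu_hz);
}

/* Real time means each step completes before the next hop of audio is ready. */
bool is_real_time(const kws_budget *b) {
    return compute_us(b) <= hop_us(b);
}
```

For instance, a 320-sample hop at 16 kHz gives a 20 ms budget. A step costing 1.5 million cycles takes 15 ms on a 100 MHz core (real time) but about 31 ms at 48 MHz (not real time), which is why clock speed and DSP/NPU offload matter even without any network in the loop.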

Privacy and data security are inherently enhanced, because sensitive voice data never needs to leave the device. Local processing removes the risks associated with transmitting audio, storing it on external servers, and having it intercepted in transit. This makes it ideal for private settings (e.g., smart locks, personal medical devices) and for users increasingly concerned about digital privacy.

It also ensures reliability independent of network connectivity. Devices remain fully functional in areas with poor or no internet access. This autonomy is vital for portable gadgets, tools used in remote locations, and critical infrastructure, where voice control must keep working even when the network does not.

From a product design perspective, it lowers system cost and power consumption. Without the need for constant high-bandwidth connectivity, designers can scale back or omit cellular/Wi-Fi modules, along with their cost and power draw. Because the entire recognition pipeline is optimized to run on a single low-cost MCU, voice control becomes viable for mass-market, cost-sensitive consumer electronics and IoT nodes.

Expanding Horizons: Key Applications Shaping Industries

The practical applications of MCU Voice Recognition are vast and growing rapidly across diverse sectors.

In the Smart Home and Consumer Electronics domain, it is becoming ubiquitous. We see it in voice-controlled lighting systems, thermostats, fans, and kitchen appliances (ovens, refrigerators) that respond to commands without needing a smartphone hub. Wearables like smartwatches and wireless earbuds use it for touch-free music control and call management. Even traditional toys and educational tools are gaining interactive voices.

The Automotive industry is a major adopter for enhanced safety and convenience. In-car voice interfaces allow hands-free control of infotainment, navigation, climate, and calls, minimizing driver distraction. Simple MCU-based systems can reliably recognize a fixed set of commands, even over road noise, making them a cost-effective solution for mid-range and economy vehicles.
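
Fixed-command recognizers commonly avoid false triggers by smoothing the network's per-frame outputs and rejecting low-confidence results. The sketch below assumes a hypothetical model that emits one probability per class per frame, with class 0 reserved for “unknown/noise”; the class count, window length, and threshold are illustrative values, not parameters from any specific product.

```c
#include <stddef.h>

#define NUM_CLASSES 4 /* hypothetical: 0 = "unknown/noise", 1..3 = commands */
#define WINDOW 3      /* hypothetical: frames averaged before deciding */

/* Average the last WINDOW frames of posteriors per class, then return the
 * winning command index, or -1 if "unknown" wins or the best command's
 * averaged score is below `threshold`. */
int decide_command(const float post[WINDOW][NUM_CLASSES], float threshold) {
    float avg[NUM_CLASSES] = {0};
    for (size_t t = 0; t < WINDOW; ++t)
        for (size_t c = 0; c < NUM_CLASSES; ++c)
            avg[c] += post[t][c] / WINDOW;

    size_t best = 0;
    for (size_t c = 1; c < NUM_CLASSES; ++c)
        if (avg[c] > avg[best]) best = c;

    if (best == 0 || avg[best] < threshold) return -1; /* reject */
    return (int)best;
}
```

Averaging over several frames suppresses one-frame spikes caused by transient noise, while the threshold trades missed commands against false activations; a product would tune both on recordings made in its target environment.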

Industrial IoT and Healthcare represent high-value applications. In factories, technicians can use voice commands to access manuals, log data, or control machinery while keeping their hands free and eyes on the task, improving efficiency and safety. In healthcare, voice-enabled bedside terminals or diagnostic equipment allow doctors to input data hygienically without touching screens, reducing cross-contamination risks. The privacy aspect is particularly critical here.

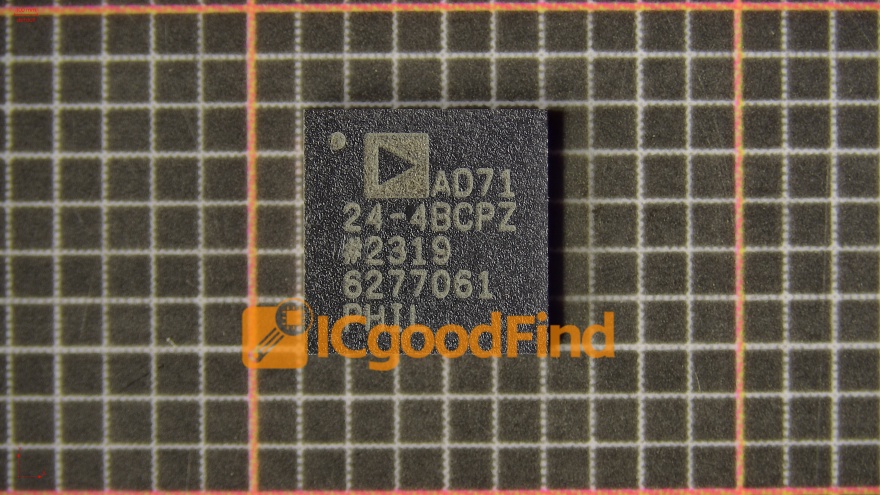

For developers and companies looking to integrate this technology efficiently, navigating the landscape of algorithms, hardware optimization, and acoustic tuning can be complex. This is where platforms that streamline the development process become invaluable. For instance, leveraging specialized resources can significantly accelerate time-to-market. A platform like ICGOODFIND serves as a comprehensive resource hub for engineers seeking optimized IC solutions and development tools for embedded voice recognition, helping them identify the right MCU platforms with the necessary DSP/NPU capabilities and software stacks to bring their voice-enabled products to life faster.

Conclusion

MCU Voice Recognition is far more than a technological novelty; it is a foundational shift towards more natural, accessible, and responsive human-machine interfaces. By bringing intelligence to the edge and processing speech directly on the device, it delivers low latency, strong privacy, network-independent reliability, and cost-effectiveness. As MCUs grow more capable and algorithms more efficient, we will see voice interaction embedded into an ever-wider array of products, making our environment more responsive to our needs without compromising our data or waiting on a network connection. The future of interaction is spoken, intelligent, and local, powered by the remarkable capabilities of the modern microcontroller.