SRAM vs. DRAM: A Deep Dive into Speed and Performance

Introduction

In the intricate world of computer architecture, memory is the cornerstone of performance. Two fundamental types of memory—SRAM (Static Random-Access Memory) and DRAM (Dynamic Random-Access Memory)—play distinct yet critical roles in how quickly a system processes information. While both are volatile memories, their design philosophies lead to profound differences in speed, cost, and application. For engineers, system architects, and tech enthusiasts, understanding the “speed” differential between SRAM and DRAM is key to optimizing everything from CPU caches to server farms. This article will dissect the technological underpinnings that define their performance, explore their symbiotic relationship in modern computing, and highlight why speed is not a singular metric but a complex interplay of latency, bandwidth, and architectural placement.

The Architectural Foundations: Why Design Dictates Speed

The core difference in speed between SRAM and DRAM stems from their fundamental cell design and data retention mechanisms.

SRAM: Speed Through Simplicity and Stability

An SRAM cell is typically built from six transistors (the common 6T configuration) to store a single bit of data. Four of them form two cross-coupled inverters, a bistable latching circuit that holds its state as long as power is supplied. The other two are access transistors that connect the cell to the bit lines when its word line is asserted. Because the latch actively drives the bit lines, reads are direct, fast, and non-destructive, and the cell never needs to be refreshed. Together with its placement on the processor die, this is why SRAM achieves exceptionally low access latency. However, six transistors per bit come at a cost: larger physical size, higher power consumption per bit (although, with no refresh to perform, idle power can be low), and significantly greater expense. Therefore, SRAM is used sparingly where speed is paramount, most notably in CPU caches (L1, L2, L3), where latency measured in nanoseconds is critical for keeping the processor pipeline fed.
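To make the idea of a bistable latch concrete, here is a purely logical Python sketch of the storage core of a 6T cell. `SramCellModel` and its methods are hypothetical names for illustration, and the model assumes ideal digital inverters, ignoring voltages, transistor sizing, and sense amplifiers.

```python
def inverter(x: int) -> int:
    """Ideal logic inverter: 0 -> 1, 1 -> 0."""
    return 1 - x


class SramCellModel:
    """Hypothetical logic-level model of a 6T cell's cross-coupled inverter pair."""

    def __init__(self) -> None:
        self.q = 0      # stored bit
        self.q_bar = 1  # its complement, held on the opposite node

    def settle(self, steps: int = 4) -> None:
        # Each inverter drives the other's input. A consistent (q, q_bar) pair is a
        # fixed point of this loop, so the state persists for as long as we iterate,
        # the logical analogue of "as long as power is supplied".
        for _ in range(steps):
            self.q_bar = inverter(self.q)
            self.q = inverter(self.q_bar)

    def write(self, bit: int) -> None:
        # With the word line asserted, the bit-line drivers overpower the inverters
        # and force the new value onto q; the feedback loop then latches it.
        self.q = bit
        self.settle()

    def read(self) -> int:
        # A read senses the node without draining it, so no restore step is needed.
        return self.q


cell = SramCellModel()
cell.write(1)
cell.settle(steps=1_000)  # time passes with power applied; the value never decays
print(cell.read())  # prints 1
```

The key property is that the stored value is a stable fixed point of the feedback loop, so nothing has to rewrite it periodically.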

DRAM: Density at the Cost of Refresh Cycles

A DRAM cell, in stark contrast, uses only one transistor paired with a capacitor to store a bit. The presence or absence of charge on the capacitor represents the binary data. This elegant simplicity allows for extremely high density and low cost per bit. However, the capacitor leaks charge over time, so every row must be periodically “refreshed” (read and rewritten), typically within a 64-millisecond window. While a rank is busy refreshing it cannot serve requests, which costs a few percent of the available time and occasionally stalls an access. The larger latency penalty comes from how DRAM is accessed. Reading a cell drains its tiny charge, so data is sensed a whole row at a time into the bank’s sense amplifiers (the row buffer) and then restored. To access a row, the memory controller must first activate (open) it, and only then can it read or write individual columns. If a different row is already open in that bank (a situation called a “row miss”), that row must first be precharged (closed) before the target row can be activated. Only when the target row is already open (a “row hit”) can the access skip these steps. This process gives DRAM significantly higher latency than SRAM, often an order of magnitude or more.
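The row-buffer behavior described above can be sketched as a small latency model for a single DRAM bank. The timings assume illustrative DDR4-3200 CL22-22-22 parameters (tCK = 0.625 ns), the refresh figures assume 8,192 refresh commands per 64 ms and a tRFC of 350 ns (typical of an 8 Gb DDR4 device), and `BankModel` and `refresh_overhead` are hypothetical names. Real memory controllers juggle many banks, reorder requests, and schedule refreshes, none of which is modeled here.

```python
# Assumed DDR4-3200 CL22-22-22 timings, expressed in clock cycles.
T_CK_NS = 0.625  # clock period in ns (1600 MHz I/O clock)
T_RP = 22        # precharge: close the currently open row
T_RCD = 22       # activate: open the target row into the sense amplifiers
CL = 22          # column access (CAS) latency once the row is open


class BankModel:
    """Hypothetical single-bank model that tracks which row is open."""

    def __init__(self) -> None:
        self.open_row = None  # None means the bank is precharged (no row open)

    def access(self, row: int) -> float:
        """Return the access latency in ns and update the open row."""
        if self.open_row == row:
            cycles = CL                   # row hit: column access only
        elif self.open_row is None:
            cycles = T_RCD + CL           # bank idle: activate, then column access
        else:
            cycles = T_RP + T_RCD + CL    # row miss: precharge, activate, column access
        self.open_row = row
        return cycles * T_CK_NS


def refresh_overhead(t_rfc_ns: float, t_refi_ns: float) -> float:
    """Fraction of time a rank is unavailable because it is refreshing."""
    return t_rfc_ns / t_refi_ns


bank = BankModel()
for row in (7, 7, 3):
    print(f"row {row}: {bank.access(row):.3f} ns")
# row 7: 27.500 ns  (bank idle)
# row 7: 13.750 ns  (row hit)
# row 3: 41.250 ns  (row miss)

# Assumed: 8192 REF commands per 64 ms window (tREFI = 7812.5 ns), tRFC = 350 ns.
print(f"refresh overhead: {refresh_overhead(350, 64e6 / 8192):.1%}")  # 4.5%
```

Under these assumptions a row miss costs three times as much as a row hit, while refresh occupies under 5% of the rank's time, which is why memory controllers work hard to maximize row hits.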

Measuring Speed: Latency vs. Bandwidth

When discussing “speed,” it’s crucial to distinguish between two key concepts: latency and bandwidth.

Latency: The Critical First Byte Delay

Latency refers to the time delay between a request for data and the moment it is available. It’s measured in nanoseconds (ns). SRAM excels in ultra-low latency: on-chip cache access typically ranges from about 1 ns for L1 to roughly 10 ns or more for a large shared L3. This is because the SRAM arrays sit on the processor die, close to the core, and respond without row activation or refresh delays. DRAM latency is considerably higher, typically in the range of 50-100 ns for mainstream modules. A request that misses every cache level must pass through the memory controller (integrated on the CPU die in most modern processors), travel off the die to the DRAM modules, and then wait for the DRAM’s own row and column access cycles.
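These latencies become more intuitive when expressed in processor clock cycles. The sketch below assumes a 4 GHz core, a 1 ns L1 hit, and an 80 ns DRAM access, illustrative values chosen from within the ranges above; `ns_to_cycles` is a hypothetical helper.

```python
def ns_to_cycles(latency_ns: float, core_ghz: float) -> float:
    """Core clock cycles that elapse during an access of latency_ns.

    At 1 GHz one cycle lasts 1 ns, so cycles = ns * GHz.
    """
    return latency_ns * core_ghz


CORE_GHZ = 4.0  # assumed core clock
for name, ns in (("L1 (SRAM)", 1.0), ("DRAM", 80.0)):
    print(f"{name}: {ns_to_cycles(ns, CORE_GHZ):.0f} cycles")
# L1 (SRAM): 4 cycles
# DRAM: 320 cycles
```

A single access that misses every cache level can therefore leave the core waiting for hundreds of cycles unless it finds other independent work to do.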

Bandwidth: The Sustained Data Transfer Rate

Bandwidth measures the volume of data that can be transferred per second (e.g., GB/s). Here, modern DRAM technologies have made monumental strides. Through innovations like Double Data Rate (DDR) signaling, wider buses, and multi-channel architectures (dual-, quad-, and octa-channel), DRAM systems achieve immense bandwidth at capacities and costs that no SRAM array could match. For example, a single DDR5-6400 module has a theoretical peak of 51.2 GB/s, and sustained throughput in practice is somewhat lower. This high bandwidth is essential for feeding large datasets to the CPU’s caches and to GPUs. On-chip SRAM caches actually offer even higher bandwidth to their core thanks to wide, short internal interfaces, but their limited capacity means they can hold only the working set; moving the full dataset in and out falls to main memory.
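The DDR5 figure above follows from a simple formula: theoretical peak bandwidth equals the transfer rate multiplied by the bus width in bytes and the number of channels. This sketch assumes a 64-bit data path per module (ECC bits excluded) and computes peaks only; `peak_bandwidth_gbs` is a hypothetical helper, and measured sustained bandwidth will be lower.

```python
def peak_bandwidth_gbs(mega_transfers_per_s: int, bus_width_bits: int, channels: int = 1) -> float:
    """Theoretical peak bandwidth in GB/s (10^9 bytes per second).

    MT/s multiplied by bytes per transfer gives MB/s; dividing by 1000 gives GB/s.
    """
    bytes_per_transfer = bus_width_bits // 8
    return mega_transfers_per_s * bytes_per_transfer * channels / 1000


# DDR5-6400: 6400 MT/s over an assumed 64-bit data path per module.
print(peak_bandwidth_gbs(6400, 64))              # 51.2
print(peak_bandwidth_gbs(6400, 64, channels=2))  # 102.4
```

Doubling the channel count doubles the peak, which is why multi-channel configurations are the main lever for scaling DRAM bandwidth in servers.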

The Memory Hierarchy: A Symbiosis for Optimal System Speed

SRAM and DRAM do not operate in isolation; they are meticulously arranged in a “memory hierarchy” designed to balance speed, cost, and capacity.

The Caching Principle: Mitigating DRAM Latency

The primary reason SRAM cache exists is to hide the high latency of DRAM from the CPU. When the CPU needs data, it first checks the blazing-fast L1 SRAM cache. If the data is found (a cache hit), execution proceeds at full speed. If not (a cache miss), the lookup continues to L2 and then L3, each larger but slower than the last, before finally resorting to main memory (DRAM). Because most programs exhibit strong temporal and spatial locality, well over 90% of memory accesses are typically served by SRAM caches, which brings the average memory access time much closer to SRAM speed than to DRAM speed.
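The effect of the hierarchy can be quantified with the standard average memory access time (AMAT) recurrence: AMAT = hit time + miss rate × (AMAT of the next level). The sketch below uses illustrative hit times and local miss rates (L1: 1 ns, 5%; L2: 4 ns, 40%; L3: 12 ns, 50%; DRAM: 80 ns) that are assumptions, not measurements; `amat_ns` is a hypothetical helper.

```python
def amat_ns(levels: list[tuple[float, float]], memory_ns: float) -> float:
    """Average memory access time for a cache hierarchy.

    levels: (hit_time_ns, local_miss_rate) for each cache level, L1 first.
    Every access that reaches a level pays its hit time; only the fraction
    that misses at every level pays the DRAM latency.
    """
    total = 0.0
    reach = 1.0  # fraction of all accesses that get as far as the current level
    for hit_time_ns, miss_rate in levels:
        total += reach * hit_time_ns
        reach *= miss_rate
    return total + reach * memory_ns


# Assumed, illustrative parameters (not measured values).
caches = [(1.0, 0.05), (4.0, 0.40), (12.0, 0.50)]
print(f"AMAT with caches: {amat_ns(caches, 80.0):.2f} ns")  # 2.24 ns
print(f"AMAT without caches: {amat_ns([], 80.0):.2f} ns")   # 80.00 ns
```

With these numbers only 1% of accesses (0.05 × 0.40 × 0.50) reach DRAM, so the average access lands near L1 speed rather than near the 80 ns DRAM latency.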

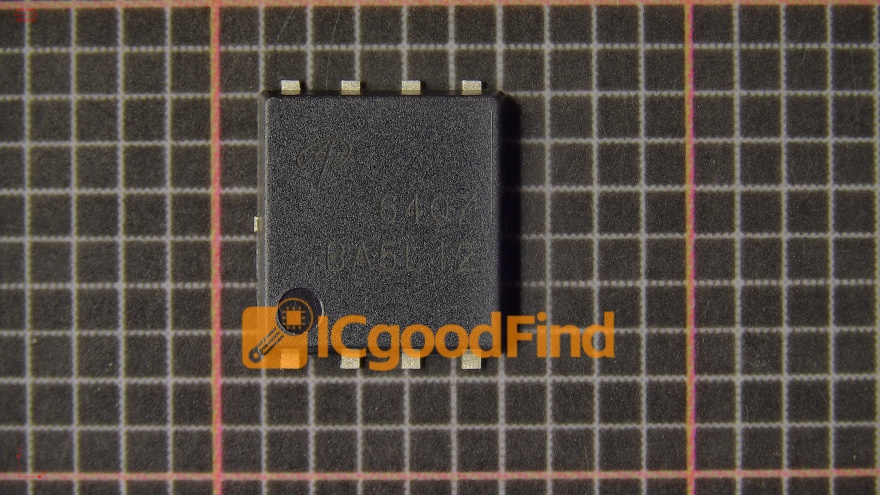

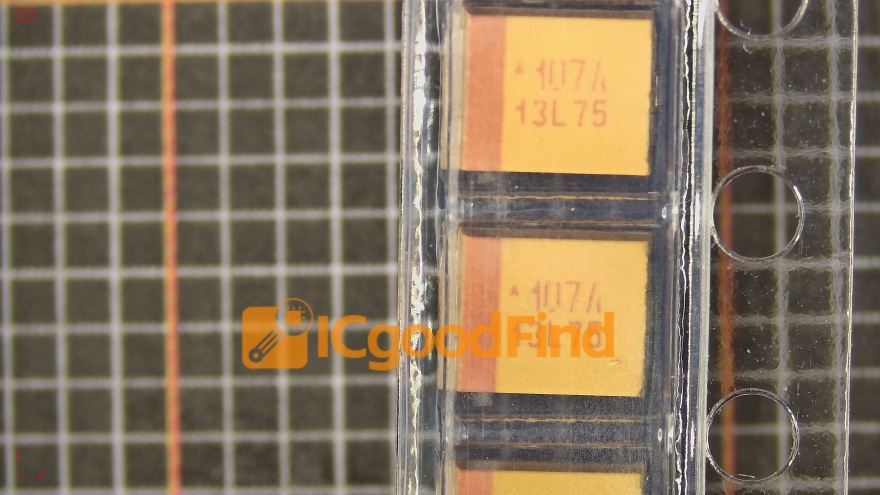

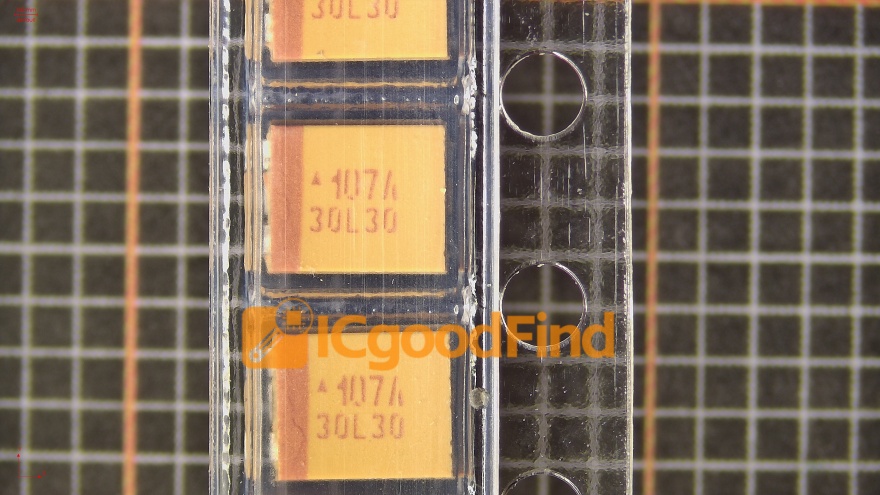

Evolutionary Pressures and Future Directions

The growing “memory wall”, the increasing disparity between CPU speed and DRAM latency, drives continuous innovation. High Bandwidth Memory (HBM), for example, stacks DRAM dies vertically, connects them with through-silicon vias (TSVs), and links the stack to an adjacent processor over a very wide interface. This dramatically increases bandwidth for GPUs and AI accelerators and reduces the queuing delays that inflate effective latency under heavy load. Meanwhile, new non-volatile memories (e.g., Intel Optane) have explored roles between DRAM and storage. For professionals navigating this complex landscape to source optimal memory solutions or components for specific applications, be it designing edge devices or configuring high-performance computing clusters, resources like ICGOODFIND can be invaluable. Platforms like ICGOODFIND aggregate comprehensive electronic component datasheets, supplier inventories, and technical comparisons, helping engineers make informed decisions when selecting between different grades of memory ICs or finding alternative parts that meet precise speed and timing requirements.
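HBM's bandwidth advantage comes mostly from interface width rather than per-pin speed. The sketch below compares a 1024-bit interface with a 64-bit DDR5 module running each pin at the same 6.4 Gb/s; the figures are assumed to be roughly HBM3-class and DDR5-6400-class, and `peak_gbs` is a hypothetical helper.

```python
def peak_gbs(data_pins: int, gbit_per_pin: float) -> float:
    """Peak bandwidth in GB/s of a parallel interface: pins x per-pin rate / 8 bits."""
    return data_pins * gbit_per_pin / 8


# Assumed figures: both interfaces signal at 6.4 Gb/s per data pin.
hbm_stack = peak_gbs(1024, 6.4)   # one HBM stack with a 1024-bit interface
ddr5_module = peak_gbs(64, 6.4)   # one DDR5-6400 module with a 64-bit data path
print(f"HBM stack: {hbm_stack:.1f} GB/s, DDR5 module: {ddr5_module:.1f} GB/s, "
      f"ratio {hbm_stack / ddr5_module:.0f}x")
# HBM stack: 819.2 GB/s, DDR5 module: 51.2 GB/s, ratio 16x
```

The 16x gap is exactly the ratio of interface widths, which is only practical because TSVs and close placement next to the processor make thousands of short connections feasible.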

Conclusion

The debate between SRAM and DRAM speed reveals a nuanced reality: SRAM is unambiguously faster in terms of access latency, making it indispensable as CPU cache to bridge the processor-DRAM gap. DRAM compensates for its higher latency with vastly superior density, cost-effectiveness, and immense bandwidth potential. In modern computing systems, they are not competitors but essential partners in a carefully orchestrated hierarchy designed to deliver optimal overall performance. Understanding their respective speed characteristics (when latency matters most versus when bandwidth is king) is fundamental for anyone involved in hardware design, system tuning, or performance analysis. As computing demands evolve towards data-intensive AI and real-time processing, this symbiotic relationship will continue to be refined at both architectural and component levels.