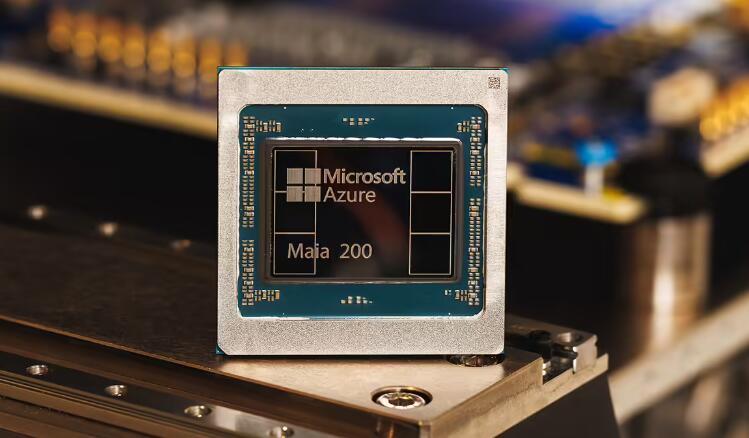

On January 27, Microsoft announced in a blog post its next-generation custom AI accelerator chip, the Maia 200. The chip is designed for large-scale AI computing, with a focus on higher performance and better energy efficiency to help lower the cost of AI services. Fabricated on TSMC’s 3nm process, it packs over 140 billion transistors and is already being deployed in Microsoft data centers, marking a new chapter in Microsoft’s in-house AI chip development.

According to figures released by Microsoft, the Maia 200 outperforms competing chips: its FP4 performance is three times that of Amazon’s third-generation Trainium chip, and its FP8 performance exceeds that of Google’s seventh-generation TPU. Compared with the latest hardware currently deployed in Microsoft data centers, the Maia 200 delivers roughly 30% better performance per dollar, which underlines its cost-efficiency advantage.
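To put the performance-per-dollar figure in concrete terms, the short sketch below converts a fractional gain in performance per dollar into the relative cost of running the same workload. It is only an illustration, not Microsoft’s methodology: it assumes the roughly 30% figure applies uniformly to a workload and that cost scales inversely with performance per dollar, and the function name `relative_cost` is hypothetical.

```python
def relative_cost(perf_per_dollar_gain: float) -> float:
    """Return the cost of a fixed workload relative to the baseline.

    perf_per_dollar_gain is a fraction, e.g. 0.30 for "30% better
    performance per dollar". Assumption: cost for the same amount of
    work is inversely proportional to performance per dollar.
    """
    if perf_per_dollar_gain <= -1.0:
        raise ValueError("gain must be greater than -1.0")
    return 1.0 / (1.0 + perf_per_dollar_gain)


gain = 0.30  # the roughly 30% figure quoted above
rel = relative_cost(gain)
savings_pct = (1.0 - rel) * 100

print(f"Relative cost for the same work: {rel:.3f}")
print(f"Implied cost reduction: {savings_pct:.1f}%")
```

Under these assumptions, a 30% gain in performance per dollar corresponds to a cost reduction of about 23% for the same work, not 30%, because the gain sits in the denominator.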

As a dedicated inference accelerator, the Maia 200 is optimized for sustained workloads such as generating AI content (for example, answering user queries), with the core goal of reducing the cost of running services like ChatGPT and Copilot. Microsoft stated that the chip will serve a wide range of AI models, including OpenAI’s latest GPT-5.2, and will bring significant cost advantages to Microsoft Foundry and Microsoft 365 Copilot. It will also be used for synthetic data generation and reinforcement learning to support the development of Microsoft’s next-generation internal models.

Beyond raw performance, the Maia 200 also stands out in sustainability. In a promotional video, Microsoft Executive Vice President Scott Guthrie emphasized that the chip uses a more efficient liquid-cooling design that enables “zero waste” operation, significantly reducing the pressure that data centers place on the environment and water resources of local communities. The Maia 200 is already in use at a data center near Des Moines, Iowa. Microsoft plans to deploy it next in the US West 3 data-center region near Phoenix, Arizona, and to expand to further locations in the future.

Microsoft has long had a clear in-house chip strategy: it plans to rely primarily on its own chips in its data centers, creating a closed-loop ecosystem of “in-house models + in-house chips” in which it can optimize the micro-architecture and iterate on models according to its own needs. Microsoft describes the Maia 200 as the most efficient inference system it has ever deployed. If its performance and efficiency meet expectations, the chip could significantly ease the operational burden of running large language models, substantially cut costs for OpenAI and other partners, and help Microsoft consolidate its advantage in the AI race.

ICgoodFind: Microsoft’s Maia 200, built on TSMC’s 3nm process and delivering strong performance while balancing energy efficiency and sustainability, is set to drive down AI service costs and reshape the competitive landscape for AI chips.